#Anthropic

Total 158 articles

OpenAI's new Daybreak initiative uses the Codex AI agent to find and patch security vulnerabilities before attackers do—putting it in direct competition with Anthropic's secretive Claude Mythos.

Anthropic's Mythos AI found thousands of unknown software vulnerabilities. But cybersecurity experts say the same capability already exists in older, publicly available models — and defenses are nowhere near keeping up.

A closed Hormuz arrives at the kitchen table, Musk vs. Altman puts a trillion dollars on trial, the Big Four bet $650 billion on AI, and Trump sends 25% tariff invoices to long-time allies. K-pop's seventeen-year slave-contract era closes with a standard-contract reform.

PRISM by Liabooks

Place your ad in this space

[email protected]

Anthropic is closing what may be its final private fundraise at a $900B valuation, surpassing OpenAI. Investors have 48 hours to commit to the ~$50B round.

Tim Cook's 25-year goodbye, $40 billion poured into Anthropic, shots near the White House dinner, and TXT's first Grand Slam — a week of power handed over and crises distributed.

Anthropic's tightly restricted Mythos AI—designed to find security flaws—was accessed by Discord sleuths without a single line of exploit code. Meanwhile, North Korean hackers used AI to steal $12M in three months. The security paradox of 2026.

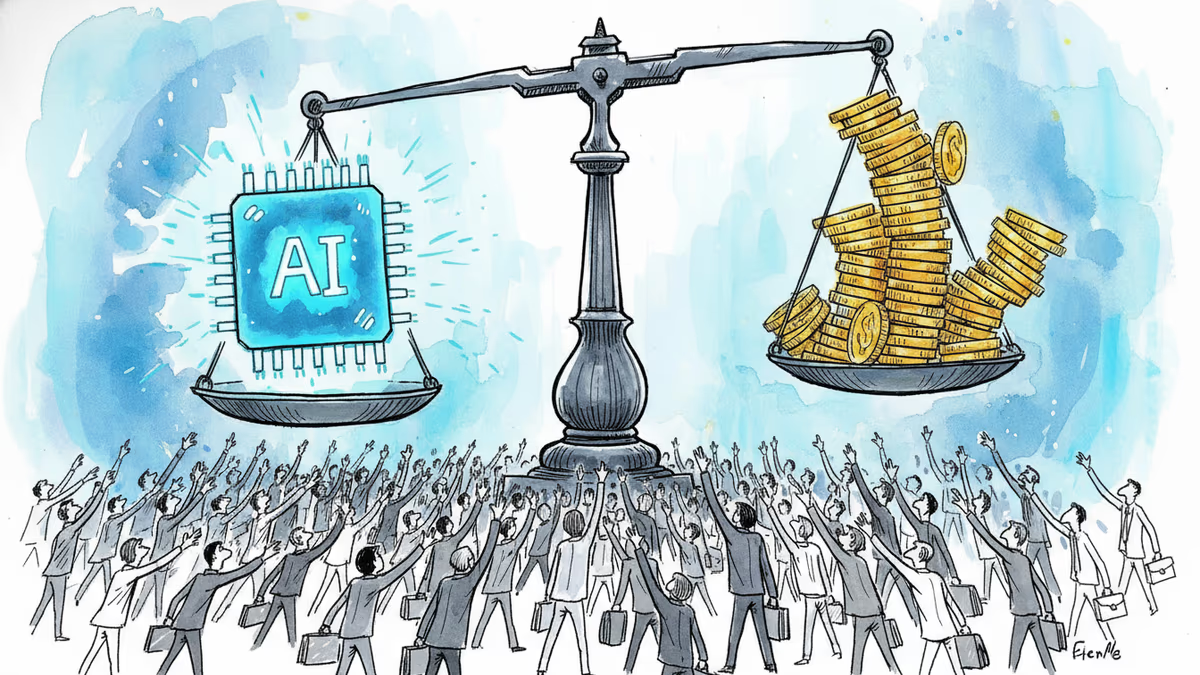

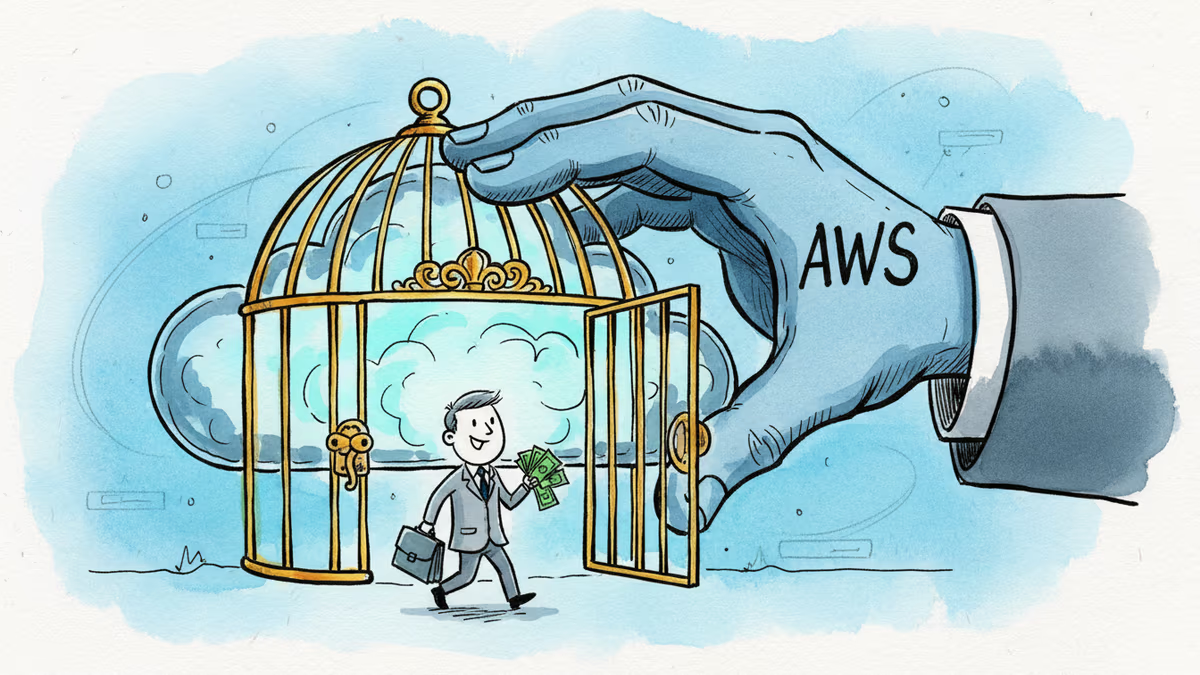

Google has increased its financial support for Anthropic to expand the startup's computing power. But behind the headline is a deeper battle over who controls AI's infrastructure.

Google is investing at least $10 billion in Anthropic, potentially up to $40 billion. With Amazon's $5B deal just days earlier, two tech giants are now backing the same AI startup — valued at $350 billion.

Google is committing up to $40 billion to Anthropic, a direct AI competitor. The deal reveals how the real AI arms race isn't about models — it's about who controls the infrastructure beneath them.

Anthropic's AI cybersecurity model is reportedly available to the NSA and Commerce Department—but not to CISA, the agency responsible for defending US federal infrastructure. What that gap reveals.

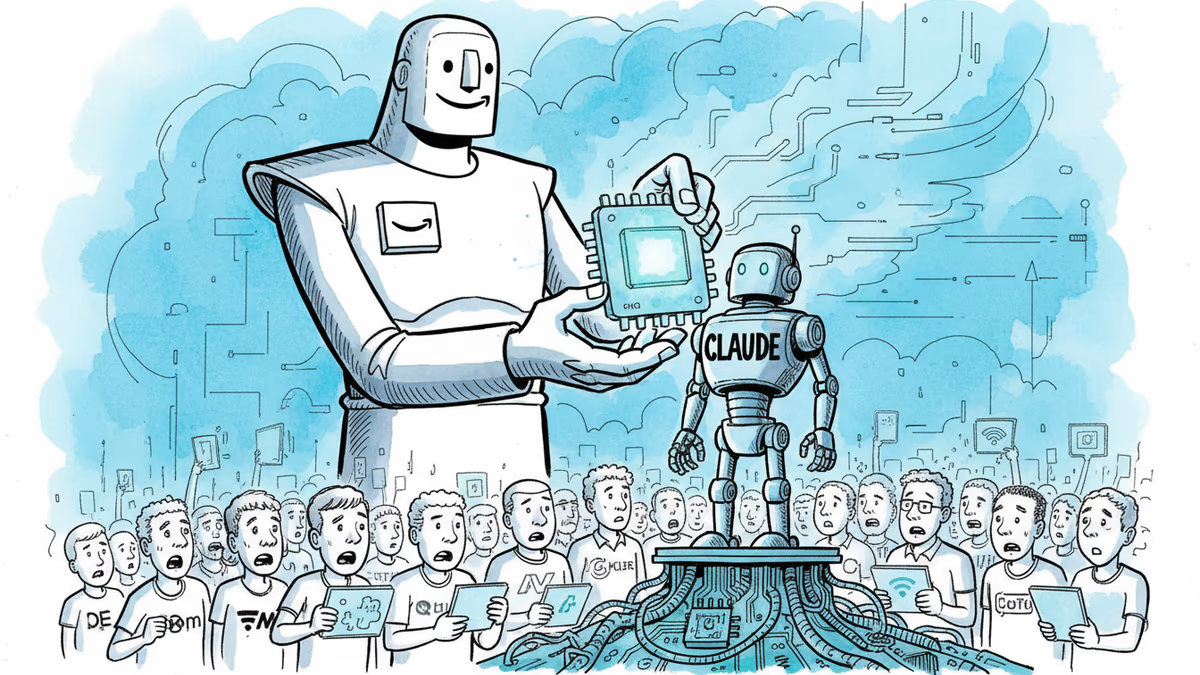

Amazon has poured an additional $5 billion into Anthropic, bringing its total stake to $13 billion—with up to $20 billion more on the table. Here's what the deal really signals about the AI infrastructure race.

Amazon's fresh $5B investment in Anthropic brings its total to $13B. But the real story is a $100B AWS spending pledge and a bet on Amazon's own AI chips over Nvidia.