Anthropic's $5B Deal Is Really About Amazon's Chip Ambitions

Amazon's fresh $5B investment in Anthropic brings its total to $13B. But the real story is a $100B AWS spending pledge and a bet on Amazon's own AI chips over Nvidia.

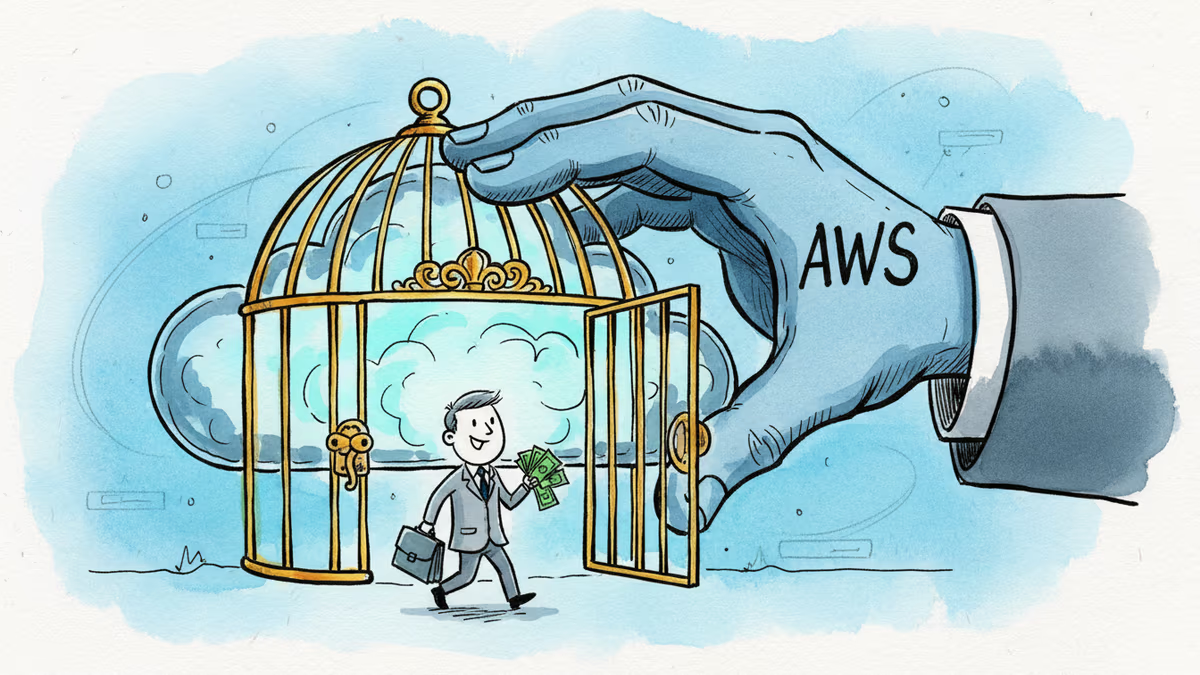

What kind of investment requires the recipient to spend 20 times what it receives?

Anthropic announced Monday that Amazon has agreed to inject another $5 billion into the AI startup, lifting Amazon's total commitment to $13 billion. In return, Anthropic has pledged to spend over $100 billion on AWS over the next 10 years — securing up to 5 gigawatts of computing capacity to train and run its Claude models.

By any conventional measure, this isn't a venture investment. It's something closer to a decade-long infrastructure marriage, with Amazon as the landlord.

The Chips Are the Point

Look past the dollar figures. The more consequential detail sits in the deal's fine print: it specifically covers Amazon's custom AI accelerator chips, Trainium2 through Trainium4, including generations that don't yet exist. Anthropic has also secured options to buy capacity on future Amazon chips as they become available.

Amazon has been quietly building its own silicon stack for years, partly to reduce its dependence on Nvidia. Graviton handles general-purpose compute; Trainium targets AI training workloads directly. The problem with custom chips, however, is that hardware ecosystems live or die by adoption. Without marquee customers running real workloads on your silicon, the ecosystem stalls.

Enter Anthropic. One of the world's most computationally intensive AI labs committing to run Claude on Trainium chips is the kind of reference customer that money can't easily buy — except, apparently, it can.

Two months ago, Amazon joined OpenAI's $110 billion funding round, contributing $50 billion in a deal that was likewise structured partly as cloud infrastructure services. The pattern is unmistakable: Amazon is betting that whoever wins the AI model race, the models will run on AWS.

What This Means for the Market

For investors, the valuation trajectory is striking. VCs are reportedly circling Anthropic at a pre-money valuation of $800 billion or more, a figure that would make it one of the most valuable private companies in history and worth more than most Fortune 500 firms.

For cloud competitors, the alarm bells are louder. Google was an early Anthropic investor and remains a backer. Watching a portfolio company commit $100 billion to a rival cloud platform is uncomfortable, even by Silicon Valley's famously tangled standards. Microsoft, with its own deep ties to OpenAI, is watching the same dynamic play out in parallel.

For Nvidia, the implications are more subtle but worth watching. If Anthropic — a bellwether AI lab — builds its training and inference pipelines around Trainium chips, it signals that the GPU monoculture has a credible challenger. That doesn't threaten Nvidia's dominance overnight, but it chips away at the assumption that H100s and B200s are the only viable path.

The Independence Question Nobody Wants to Ask

Anthropic was founded on a specific premise: that AI safety should drive development decisions, not commercial pressure. Its founders left OpenAI partly over concerns about that balance. The company has consistently positioned itself as the responsible adult in the AI room.

But a 10-year, $100 billion commitment to a single cloud vendor raises a structural question that doesn't go away just because the vendor is Amazon. When your infrastructure costs are this deeply tied to one provider, how independent can your research roadmap actually be? What happens if Amazon's commercial priorities and Anthropic's safety priorities diverge?

This concern is neither hypothetical nor unique to Anthropic. It's the central tension of the current AI funding era: the capital required to compete at the frontier is so enormous that true independence may be structurally impossible.

Authors

Related Articles

Snowflake's new $6 billion AWS contract is about more than cloud spending. It signals a shift in AI infrastructure—away from Nvidia GPUs and toward cheaper, homegrown chips for the agent era.

A small but growing group of developers has gone all-in on AI coding agents like Claude Code and OpenClaw. History suggests the rest of us won't be far behind.

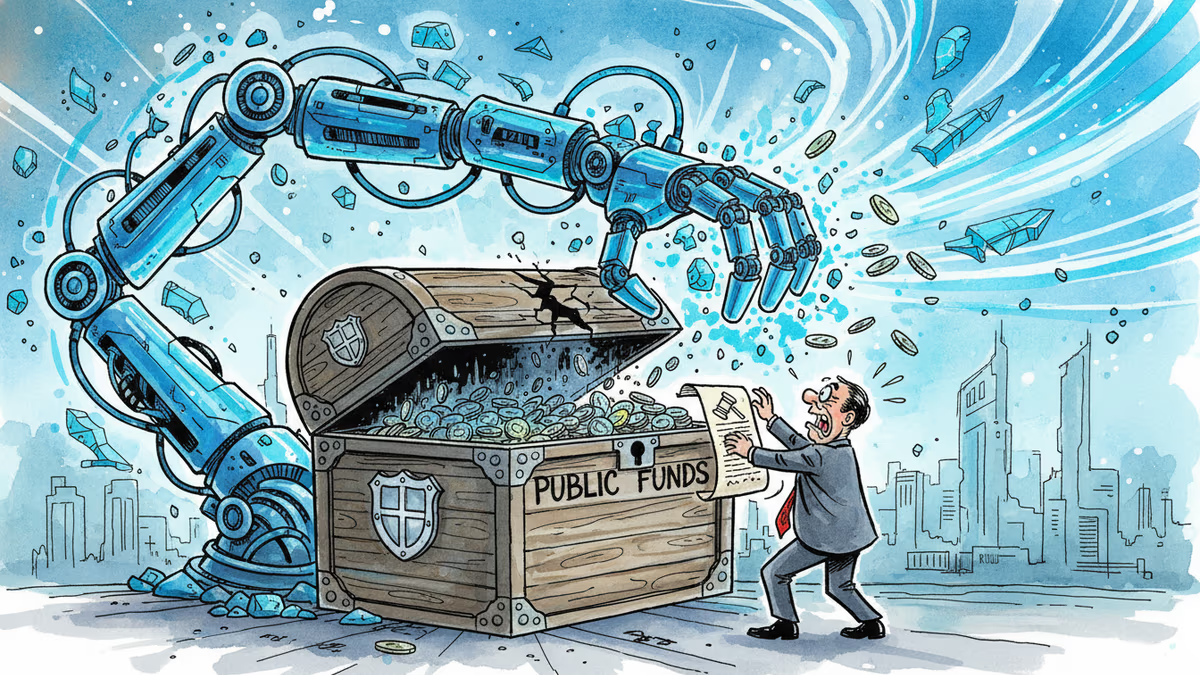

The US government invested $2 billion in quantum computing startups, but Congress says the money was never authorized for that purpose. The fate of IBM-backed Anderon hangs in the balance.

Viral videos show 2026 graduates jeering executives who praise AI at commencement ceremonies. It's not just rudeness — it's a signal about who pays for technological optimism.

Thoughts

Share your thoughts on this article

Sign in to join the conversation