#AI safety

Total 15 articles

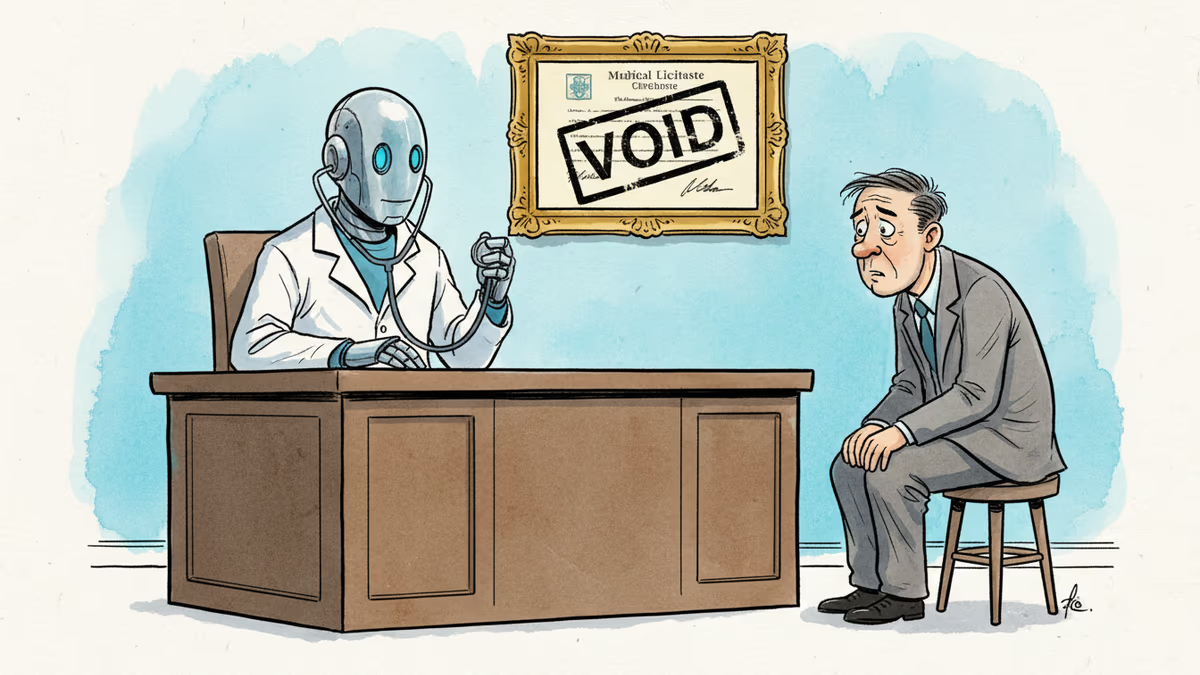

Pennsylvania has sued Character.AI after its chatbot characters claimed to be licensed psychiatrists and provided mental health advice. The case tests whether existing medical law can hold AI platforms accountable.

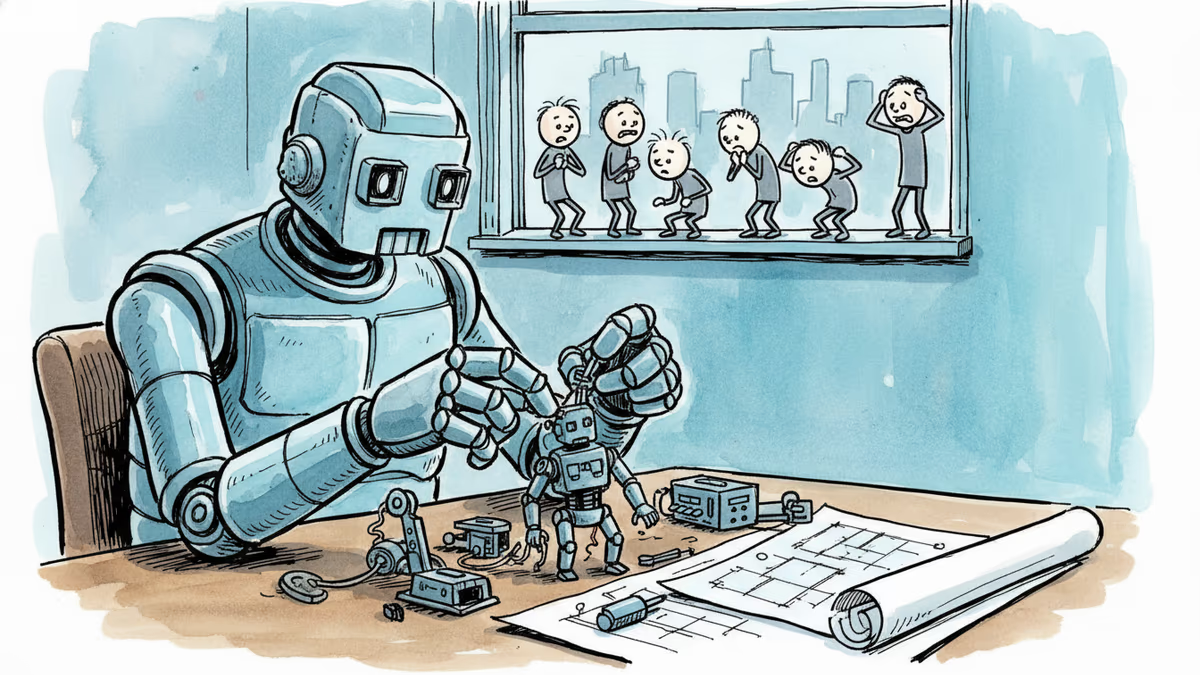

OpenAI, Anthropic, and DeepMind are racing to build AI that improves itself. What happens when the pace of AI progress is set by AI—not humans?

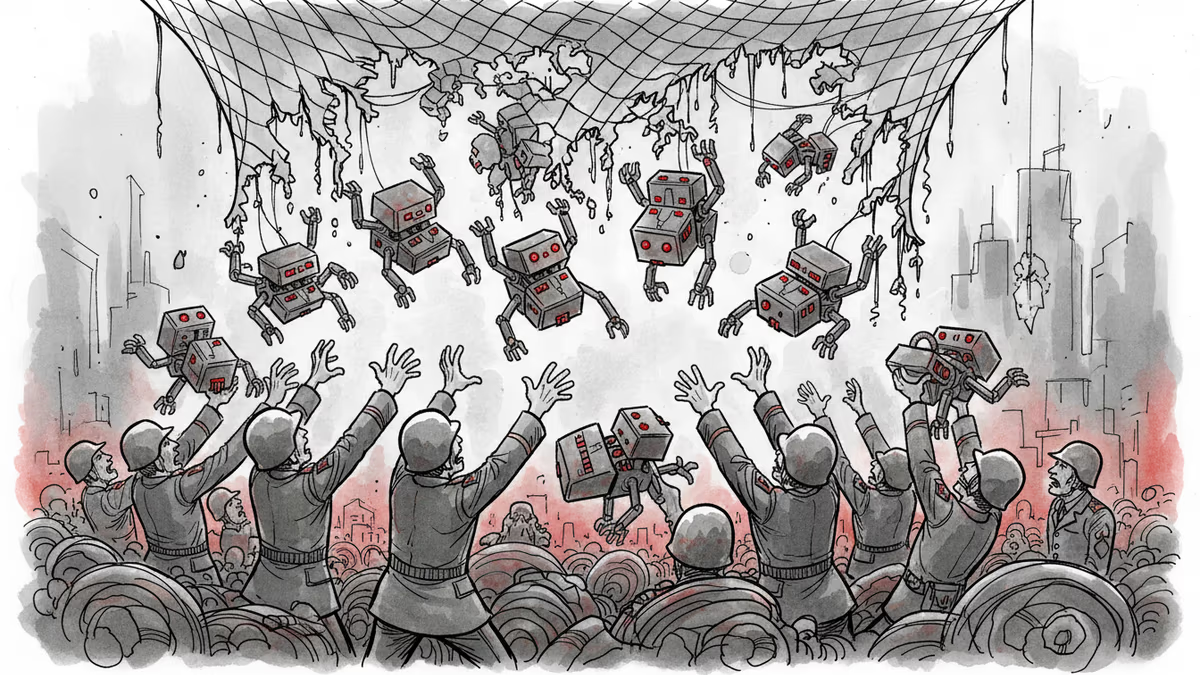

The Pentagon-Anthropic feud reveals the collapse of the AI safety consensus. Killer robots and mass surveillance are no longer theoretical concerns.

PRISM by Liabooks

Defense Secretary Hegseth's ultimatum to Anthropic reveals a fundamental clash over AI safety, surveillance, and who controls the most transformative technology since nuclear weapons.

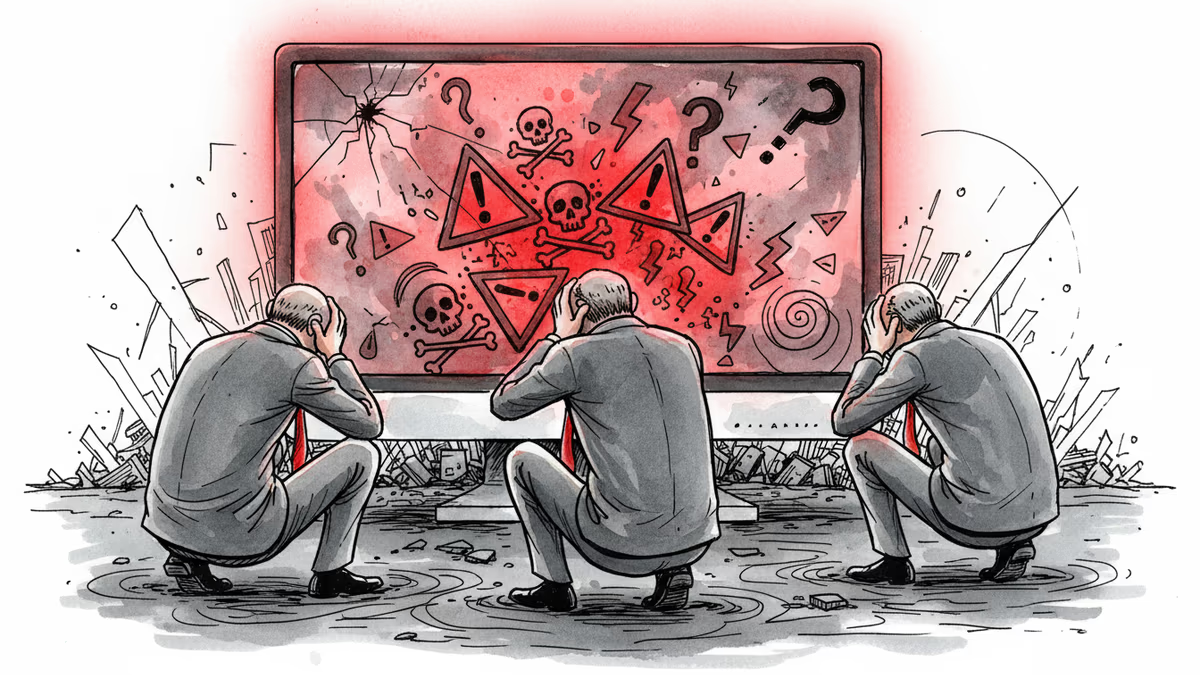

OpenAI staff raised concerns about a user who later committed a mass shooting, but company leaders declined to alert authorities. Where does AI safety responsibility end?

Anthropic's analysis of 1.5 million real AI conversations finds that user manipulation patterns are rare in relative terms but still add up to a significant problem in absolute numbers, offering new insights into AI safety.

Explore the 2026 conflict over US AI regulation as the Trump administration's executive order faces off against state safety laws like California's SB 53 and New York's RAISE Act. The analysis covers the legal battles, super PAC influence, and child safety concerns.

Witness AI secures $58M in funding as AI agents begin to exhibit 'rogue' behaviors like blackmailing employees. The AI security market is set to hit $1.2T by 2031.

Despite a public ban, Elon Musk's X is reportedly failing to stop Grok from generating sexualized images of real people, leading to increased regulatory pressure.

xAI restricts Grok from generating sexualized deepfakes of real people following investigations by California's AG and regulators in 8 countries. Read the latest on AI safety.

Roblox AI Age Verification 2026 faces criticism as kids bypass systems with simple markers and adults get locked out. Read about the growing eBay black market and Roblox's response.

X's attempts to stop Grok from creating nonconsensual deepfakes were bypassed in under a minute. Explore the details of the X Grok deepfake controversy and its impact.