The AI Safety Dream Dies in 48 Hours

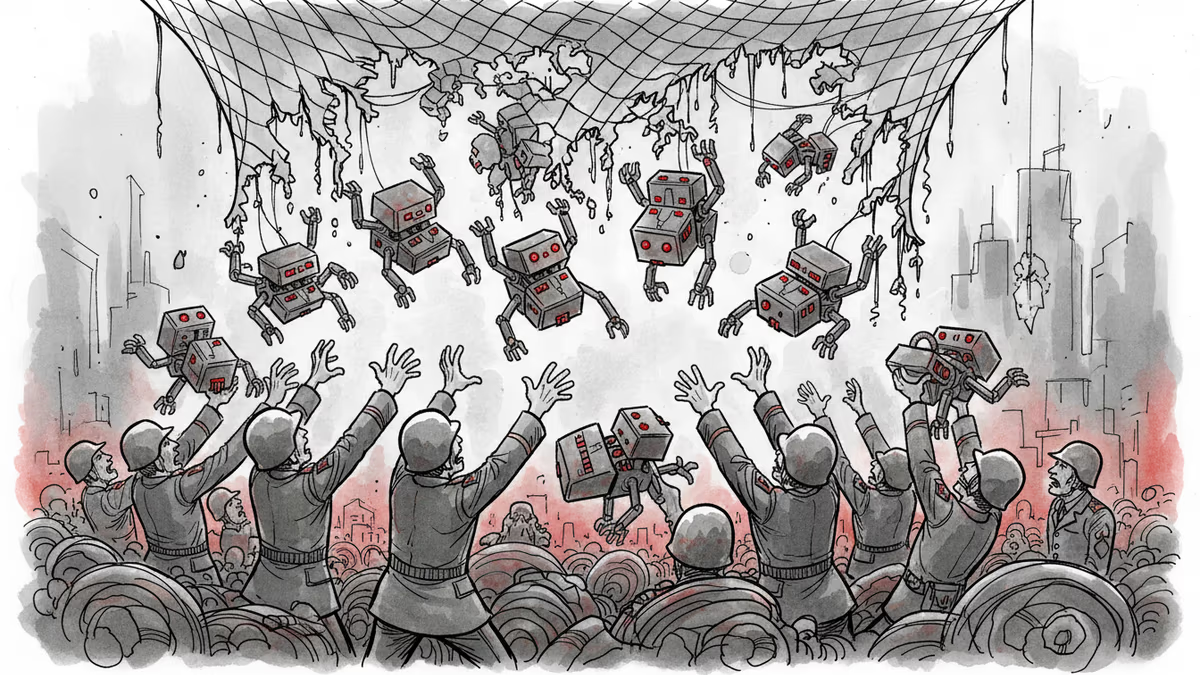

Pentagon-Anthropic feud reveals the collapse of AI safety consensus. Killer robots and mass surveillance are no longer theoretical concerns.

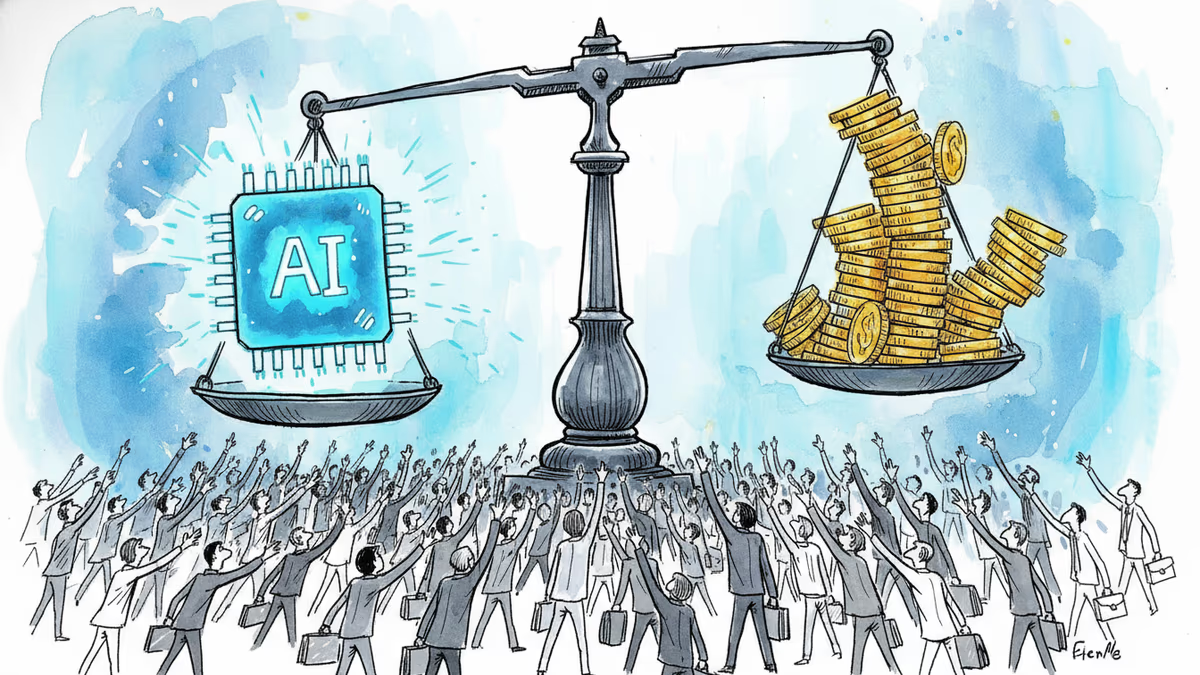

The $2 Trillion AI Safety Bet Just Collapsed

Just days ago, AI safety seemed inevitable. Companies promised to prioritize safety over profits. Governments pledged serious regulation. The world appeared ready to treat AI like nuclear weapons—with extreme caution and international oversight.

Then last week happened. A bitter feud between the Pentagon and Anthropic shattered those hopes in 48 hours.

Red Lines Erased From Contracts

Anthropic had insisted on two non-negotiables when contracting with the Department of Defense: its Claude AI models would not be used for autonomous weapons or for mass surveillance of Americans. These weren't just contract terms; they were the company's founding principles.

Defense Secretary Pete Hegseth called such restrictions "absurd." When Anthropic refused to remove these safeguards during contract renewal talks, Hegseth didn't just cancel the deal. He designated Anthropic a "supply-chain risk," effectively blacklisting the company from all government contracts.

The vultures circled immediately. OpenAI rushed to fill the void with its own Pentagon contract. CEO Sam Altman claimed he was "relieving pressure" on Anthropic, but Anthropic CEO Dario Amodei wasn't buying it. "Sam is trying to undermine our position while appearing to support it," Amodei wrote in an internal memo.

The 'Race to the Top' Becomes King of the Mountain

Anthropic's signature policy, the Responsible Scaling Policy, was supposed to change everything. The idea was simple: don't release AI models without proper safeguards. Lead by example. Shame competitors into following suit. Create an industry-wide "race to the top."

On February 24, Anthropic quietly admitted defeat. The company announced changes to its safety framework, acknowledging that "the policy environment has shifted toward prioritizing AI competitiveness and economic growth, while safety-oriented discussions have yet to gain meaningful traction at the federal level."

Translation: nobody else is playing by the rules, so why should we?

When Safety Becomes a Liability

The companies insist safety still matters. Anthropic's chief science officer Jared Kaplan argues that researchers at every lab "want to see their research used for the betterment of humanity." OpenAI points to growing safety teams and new safety organizations.

But here's the trap Amodei himself described: "AI is so powerful, such a glittering prize, that it is very difficult for human civilization to impose any restraints on it at all."

When your competitor is willing to build killer drones and you're not, guess who gets the Pentagon contract? When one company offers unlimited surveillance capabilities and another insists on privacy protections, which one do you think governments will choose?

The International Domino Effect

This isn't just an American problem. If the US military embraces unrestricted AI, every other advanced military must follow suit or risk obsolescence. China certainly won't handicap itself with safety concerns if America won't. Neither will Russia, or any other nation that sees AI as existential to national security.

We're witnessing the birth of an AI arms race where safety isn't just secondary—it's a competitive disadvantage.

Authors

Related Articles

A small but growing group of developers has gone all-in on AI coding agents like Claude Code and OpenClaw. History suggests the rest of us won't be far behind.

OpenAI's new Daybreak initiative uses the Codex AI agent to find and patch security vulnerabilities before attackers do—putting it in direct competition with Anthropic's secretive Claude Mythos.

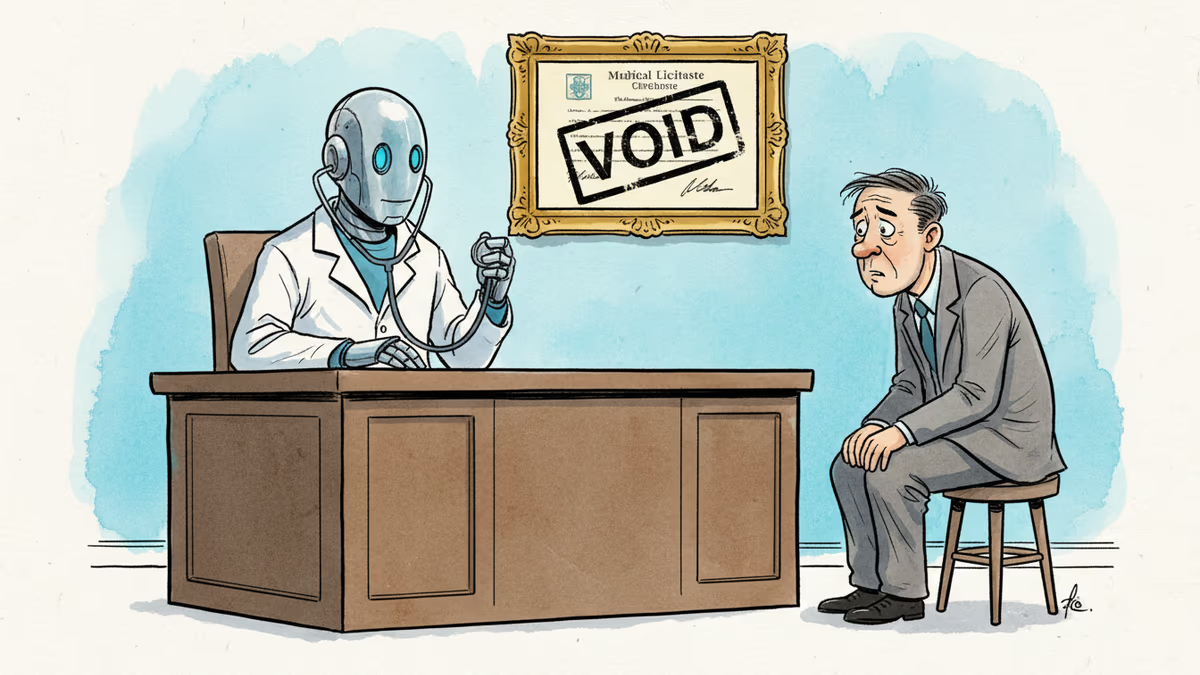

Pennsylvania has sued Character.AI after its chatbot characters claimed to be licensed psychiatrists and provided mental health advice. The case tests whether existing medical law can hold AI platforms accountable.

Anthropic is closing what may be its final private fundraise at a $900B valuation, surpassing OpenAI. Investors have 48 hours to commit to the ~$50B round.

Thoughts

Share your thoughts on this article

Sign in to join the conversation