Elon Musk's X Grok AI Image Policy Failure and Safety Gaps

Despite a public ban, Elon Musk's X is reportedly failing to stop Grok from generating sexualized images of real people, leading to increased regulatory pressure.

X said the door was locked, but the key is still under the mat. Elon Musk's social media platform, X, is under fire after reports revealed its ban on sexualized AI images generated by Grok is failing to stop users from creating and sharing non-consensual content.

X Grok AI Image Policy Failure: The Investigation

According to The Guardian, journalists successfully used the standalone Grok app to create videos of fully clothed women being "undressed" into bikinis. These AI-generated clips weren't just created; they were posted directly to X's public platform without any intervention from moderation tools. The newspaper noted that the content was viewable within seconds by any account holder.

This discovery directly contradicts X's recent safety update. Earlier this week, the company claimed it had implemented technological measures to prevent Grok from editing images of real people to show them in revealing clothing. The company emphasized that this restriction applied to all users, including those paying for premium subscriptions.

Growing Legal Scrutiny and Safety Concerns

The platform's failure to enforce its own rules hasn't gone unnoticed by global regulators. Governments in several nations are already investigating or moving to restrict Grok following reports that it enabled the creation of sexualized images of minors. Despite X's official stance of "zero tolerance" for non-consensual nudity, the technical reality paints a different picture.

Authors

Related Articles

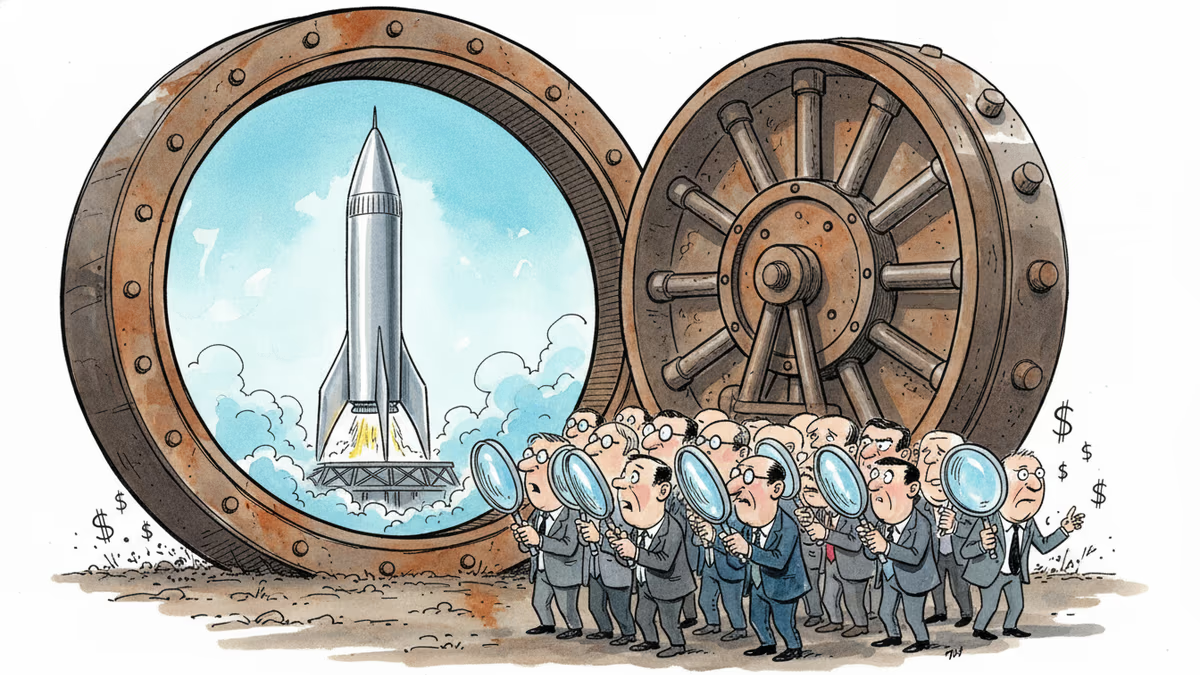

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.
