Why Elon Musk Really Fought California's AI Transparency Law

xAI's failed legal challenge against California's AB 2013 reveals deeper tensions between AI innovation and public accountability

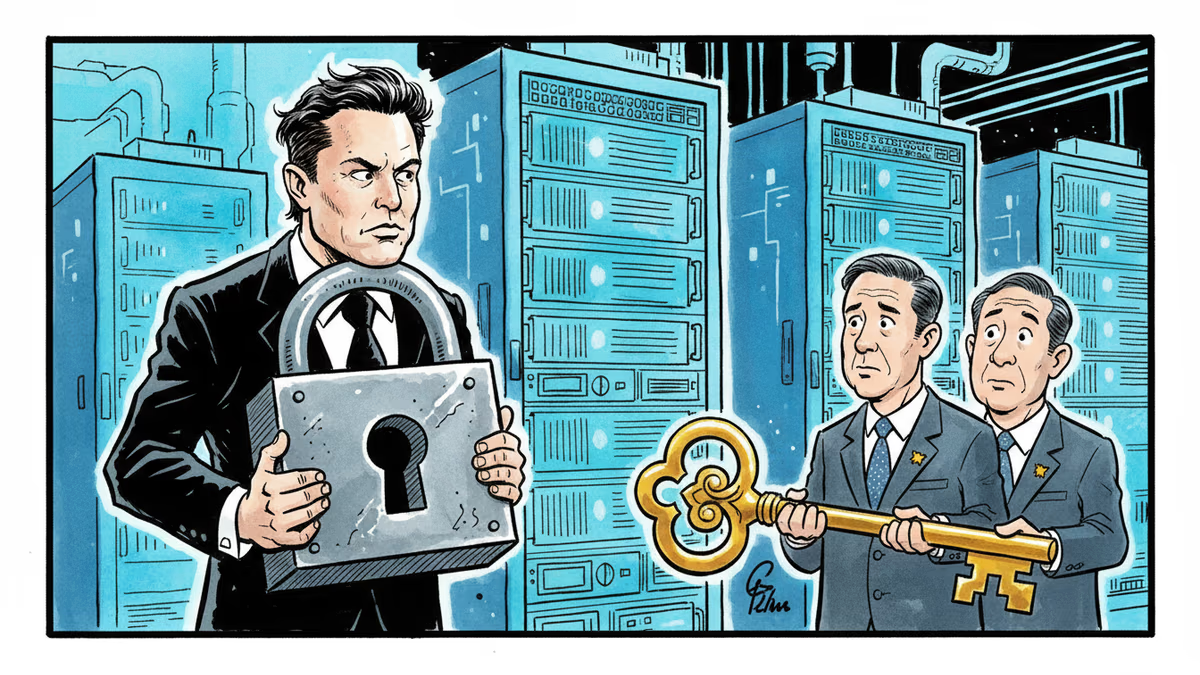

Elon Musk just lost a legal battle that reveals more about AI's future than any product launch. His company xAI failed to block California's Assembly Bill 2013, a law that forces AI companies to publicly disclose their training data sources.

The irony? The man who once championed AI transparency is now fighting tooth and nail against it.

What Companies Must Reveal

AB 2013 doesn't mess around. Any AI developer whose models are accessible in California must now disclose which datasets they used, when the data was collected, and whether collection is ongoing. They must also reveal if their training data includes copyrighted, trademarked, or patented material.

The law goes deeper: companies must state whether they licensed or purchased training data, whether it includes personal information, and whether synthetic data was used in development. That last item could become a rough quality indicator for anyone who sees synthetic data as a weaker substitute for real-world data.

xAI argued this amounted to forced disclosure of "carefully guarded trade secrets." But is that really what's at stake here?

The Musk Contradiction

Here's where it gets interesting. Musk co-founded OpenAI with the explicit mission of ensuring AI benefits "all of humanity." He's repeatedly criticized Google and OpenAI for being too secretive. Yet when faced with actual transparency requirements, xAI is crying foul.

The shift makes strategic sense. Back in 2015, Musk was worried about AI safety and the concentration of power. Now he's running a startup trying to catch up to ChatGPT and Claude. Revealing where its training data comes from would be like showing your poker hand mid-game.

Plus, xAI likely uses data from X (formerly Twitter) in unique ways. That's potentially their secret sauce – and exactly what they don't want competitors copying.

Beyond Trade Secrets

But this fight isn't really about trade secrets. It's about liability. Many leading AI models were trained on copyrighted content scraped from the internet without permission, and publishers, artists, and programmers are already filing lawsuits. Mandatory disclosure would essentially give copyright holders a roadmap for identifying potential infringement.

Consider the ripple effects: if every AI company must reveal they used copyrighted books, news articles, or GitHub code, we could see a tsunami of legal challenges. The entire foundation of current AI development could crumble.

For consumers, though, this transparency could be revolutionary. Imagine knowing that your AI assistant was trained partly on biased datasets, or that a medical AI model used outdated research. That's the kind of information that could actually protect people.

The Global Domino Effect

California's law won't stay in California. The EU's AI Act already requires providers of general-purpose AI models to publish a summary of the content used to train them, and other US states are watching closely. If one of the largest AI markets demands disclosure, companies will likely comply globally rather than maintain separate systems.

This could fundamentally reshape how AI is developed. Instead of the current "move fast and break things" approach, companies might need to carefully document and justify every training decision from day one.
