US AI Regulation 2026 Trump: The High-Stakes Clash Between Federal Power and State Laws

Explore the 2026 conflict over US AI regulation as the Trump administration's executive order faces off against state safety laws like California's SB 53 and New York's RAISE Act, with analysis of the legal battles, super PAC influence, and child safety concerns.

The gloves are off in the battle for the future of artificial intelligence. On December 11, 2025, President Donald Trump signed a sweeping executive order designed to block states from regulating the AI industry. By vowing a "minimally burdensome" national policy, the administration aims to secure America's lead in the global AI race, delivering a massive win for tech titans who've spent millions lobbying against a patchwork of state-level restrictions.

US AI Regulation 2026 Trump: Federal Threats and State Resistance

Trump's executive order isn't just a suggestion; it's a directive with teeth. It empowers the Department of Justice (DOJ) to sue states whose AI laws clash with the federal vision and directs the Department of Commerce to withhold broadband funding from states with "onerous" regulations. James Grimmelmann of Cornell Law School notes that the order will likely target liberal-leaning provisions focused on transparency and bias, setting the stage for a constitutional showdown.

Guardians of Safety: New York and California Hold the Line

Despite the threats, Democratic strongholds aren't flinching. On December 19, New York Governor Kathy Hochul signed the RAISE Act, requiring AI companies to report safety protocols and incidents. Days later, on January 1, 2026, California's SB 53, the nation's first frontier AI safety law, took effect. These laws aim to prevent catastrophic harms such as the creation of biological weapons, and they represent a fragile compromise between public safety and industrial innovation that Trump now seeks to dismantle.

Child Safety Crisis and the Super PAC War Chests

The human cost of unregulated AI has entered the courtroom. Following a settlement on January 7 involving Character.AI and teen suicide cases, Kentucky's attorney general filed a fresh lawsuit against the startup. As OpenAI and Meta face similar legal barrages, child safety has become a rare point of consensus, one that could produce state-level legislation able to bypass Trump's ban. Meanwhile, super PACs like "Leading the Future," backed by OpenAI president Greg Brockman, are pouring tens of millions of dollars into elections to ensure the industry's vision prevails.

Authors

Related Articles

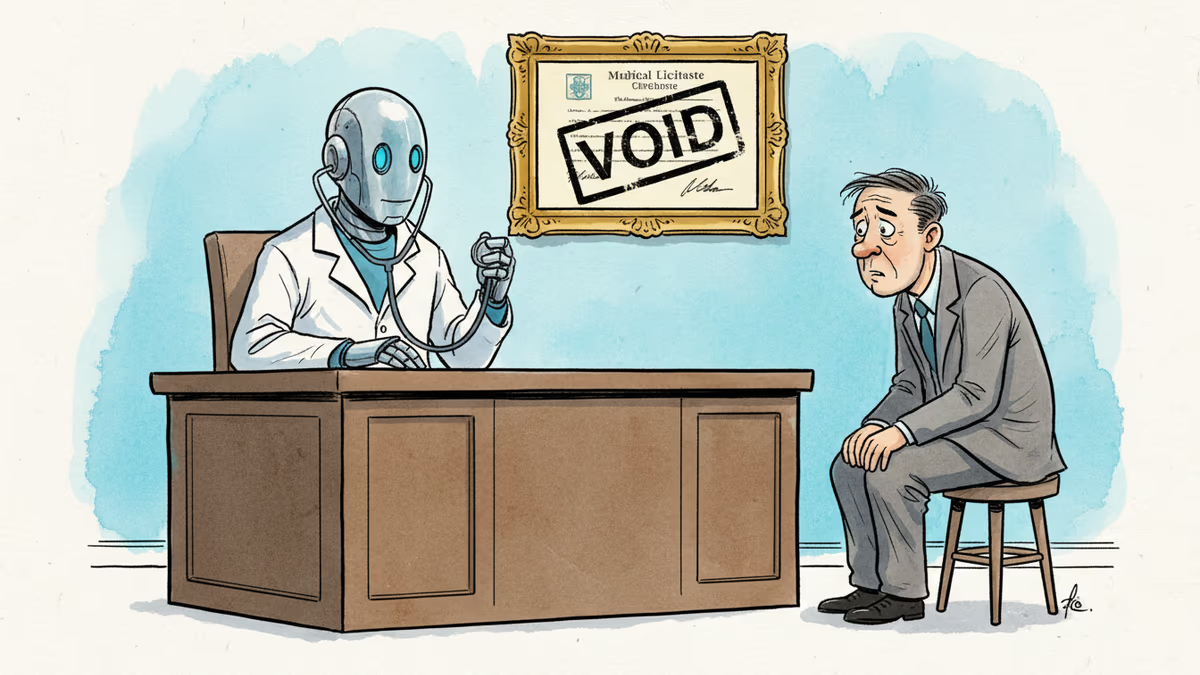

Pennsylvania has sued Character.AI after its chatbot characters claimed to be licensed psychiatrists and provided mental health advice. The case tests whether existing medical law can hold AI platforms accountable.

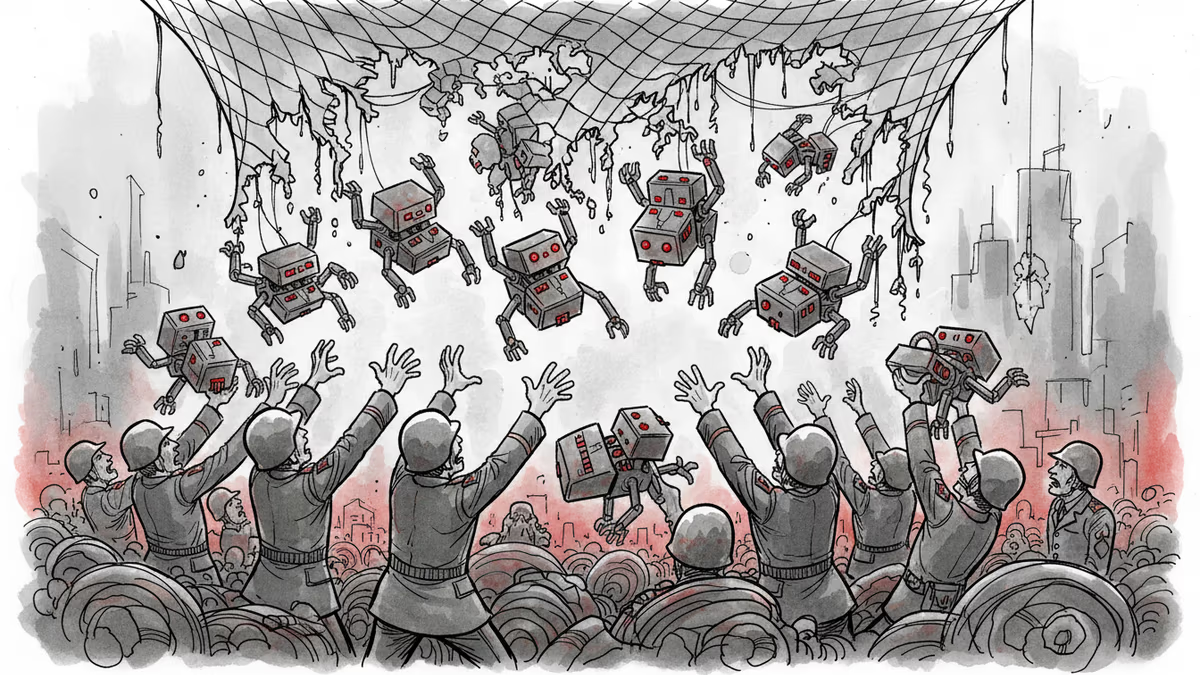

Pentagon-Anthropic feud reveals the collapse of AI safety consensus. Killer robots and mass surveillance are no longer theoretical concerns.

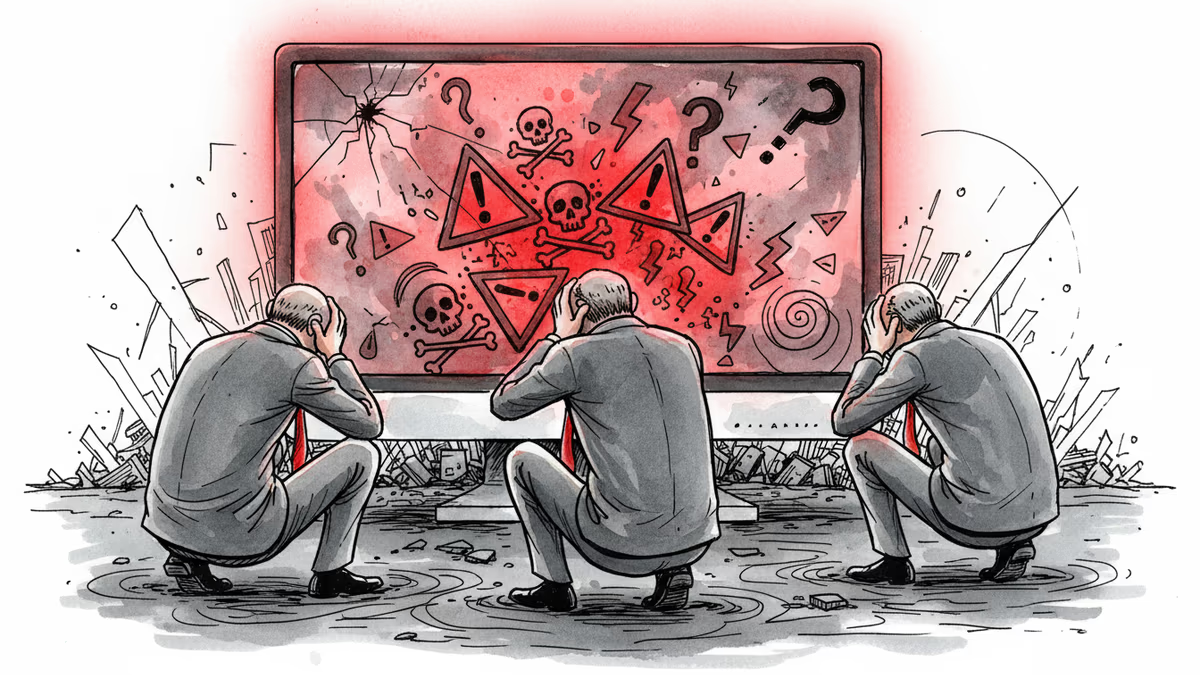

OpenAI staff raised concerns about a user who later committed a mass shooting, but company leaders declined to alert authorities. Where does AI safety responsibility end?

Anthropic's analysis of 1.5 million real AI conversations reveals user manipulation patterns are rare but represent a significant absolute problem. New insights into AI safety emerge.

Thoughts

Share your thoughts on this article

Sign in to join the conversation