Musk's xAI Grok Deepfake Restriction: Global Regulators Force Safety Pivot

xAI restricts Grok from generating sexualized deepfakes of real people following investigations by California's AG and regulators in 8 countries.

Pressure from at least eight nations has finally forced a change. Elon Musk's xAI announced late Wednesday that its AI chatbot, Grok, will no longer generate sexualized images of real people. The sudden move comes as international regulators step up coordinated action against nonconsensual AI-generated content.

xAI Grok Deepfake Restriction Details

According to social media posts from X's safety account, xAI has implemented strict technological measures to prevent users from editing images of real people to depict them in revealing attire. These restrictions apply across the board, affecting even paid subscribers who previously enjoyed fewer guardrails.

Furthermore, the company is shifting its business model. Image creation and editing through Grok on X will now be available only to paid subscribers. The change targets two problems at once: the spread of mass-produced deepfakes and the platform's ongoing monetization struggle.

Global Regulators Force Musk's Hand

The announcement followed a bombshell statement from California Attorney General Rob Bonta, who confirmed his office is investigating xAI for its role in the "large-scale production of deepfake nonconsensual intimate images." The legal heat isn't just local; investigations are also active in India, the U.K., France, Australia, and several other nations.

The situation escalated further in the U.S. as three Democratic senators urged Apple and Google to purge X and Grok from their respective app stores. They argue that the platform remains a primary vector for harmful AI content, posing a risk that outweighs its utility.

Authors

Related Articles

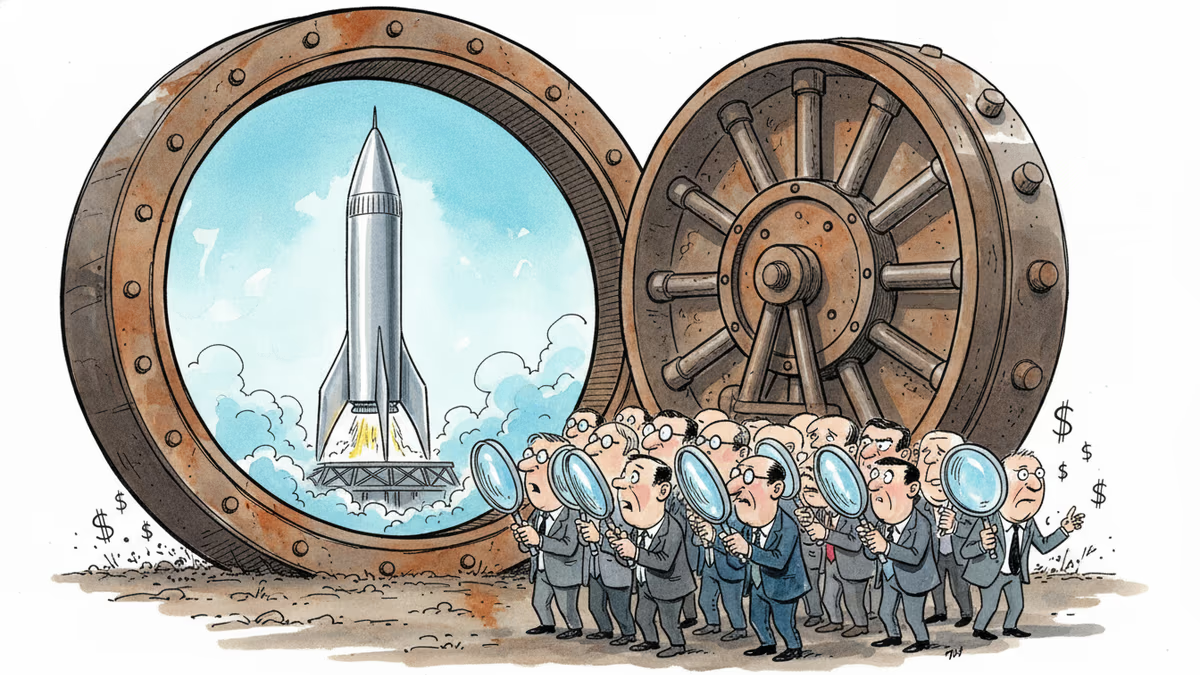

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.

Thoughts

Share your thoughts on this article

Sign in to join the conversation