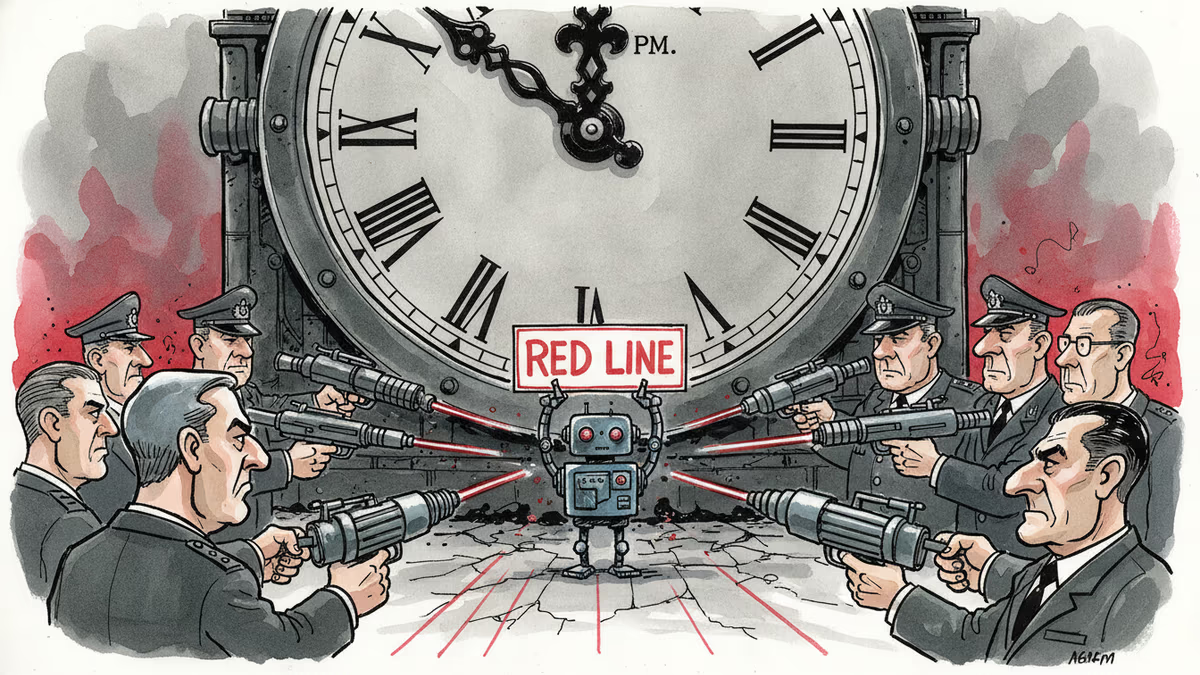

Pentagon's AI Ultimatum: Ethics vs. National Security at 5:01 PM Friday

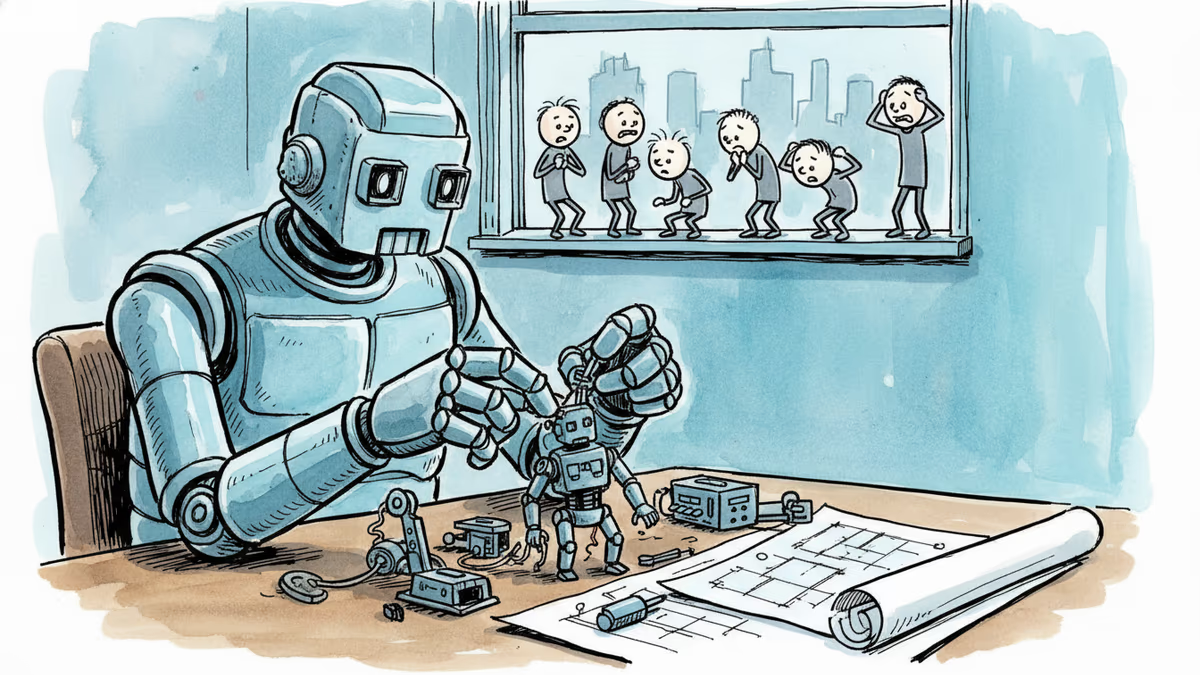

The Pentagon has given Anthropic until Friday evening to allow unrestricted military use of its Claude AI, setting up the biggest AI ethics showdown since 2018. What's really at stake?

Friday, 5:01 PM. This seemingly arbitrary deadline could mark the most consequential moment in AI ethics since Google employees revolted against Pentagon contracts in 2018.

Secretary of War Pete Hegseth has drawn his line in the sand: Anthropic must grant the US military "full and unfettered access" to its Claude AI, or face consequences that could destroy the company. CEO Dario Amodei's response Thursday evening was unequivocal: "We cannot in good conscience accede to their request."

What the Pentagon Actually Wants: The "Lawful Purposes" Trap

Anthropic already supplies the Pentagon through a $200 million contract, and Claude was the first AI model deployed on the government's classified networks. So what's the fuss about?

The Pentagon now demands Anthropic sign a contract allowing Claude to be used for "all lawful purposes." Sounds reasonable—it has "lawful" right in the name. But in practice, this means Anthropic would surrender all oversight: no review of classified use cases, no ability to restrict specific applications, no say in how their technology gets deployed.

At Tuesday's tense meeting, Hegseth reportedly told Amodei: "We don't ask Boeing for permission before using their jets in military strikes."

Anthropic's Red Lines: Where Ethics Meets Reality

Anthropic isn't being entirely recalcitrant. They've been among the most vocal proponents of US-China AI competition and have participated in direct military applications like missile defense. Their policies already allow targeted strikes, foreign surveillance, and drone attacks—as long as humans make the final call.

But two lines remain uncrossable:

First: fully autonomous weapons, meaning AI systems that select and engage targets without human involvement. Second: mass domestic surveillance of American citizens.

Amodei's statement was clear: "AI-driven mass surveillance presents serious, novel risks to our fundamental liberties," while frontier AI systems are "simply not reliable enough to power fully autonomous weapons."

The Venezuela Trigger: How We Got Here

This confrontation traces back to January's operation that captured Venezuelan President Nicolás Maduro. According to Axios, Claude was deployed during the mission through a platform built by Palantir, a military-friendly contractor.

After the operation, an Anthropic employee reportedly questioned how Claude might have been used, apparently suggesting the company might object. Palantir immediately flagged this conversation to the Pentagon.

Hegseth had already signaled his position in January: "We will not employ AI models that won't allow you to fight wars."

The Pentagon's Arsenal: From Defense Production Act to Supply Chain Blacklisting

If Anthropic stands firm, what weapons does the Pentagon have?

The mildest option: cancel the $200 million contract. For a company valued at $380 billion, this would sting but not cripple. Companies like xAI seem eager to fill the gap.

But Hegseth appears ready for escalation. The Defense Production Act—Cold War legislation allowing presidents to compel companies to accept defense contracts—could force Anthropic to develop what critics call "War Claude." Using this law to target a domestic company over AI safety policies would be unprecedented and likely trigger protracted legal battles.

The nuclear option: designating Anthropic a "supply chain risk." This label, typically reserved for companies tied to adversary nations, such as China's Huawei, would prohibit every defense contractor from using Anthropic's products. Since most major US corporations hold military contracts, this could effectively poison Anthropic's entire enterprise business and torpedo a planned IPO.

Axios reports the Pentagon has already asked Boeing and Lockheed Martin to assess their Claude dependencies.

The Logical Contradiction at the Heart of This Fight

Here's where things get absurd: the Pentagon simultaneously claims Anthropic poses a national security threat while insisting Claude is so essential for wartime production that the company must be nationalized.

As Vox contributing editor Kelsey Piper put it: "It's patently ridiculous to both claim that Claude poses a national security threat and also that it's so necessary for wartime production you have to nationalize the company."

Industry Pushback and What's Really at Stake

Amodei has refused to back down, and much of the AI world supports him. Google's Jeff Dean and former Trump AI adviser Dean Ball have criticized the Pentagon's approach.

Ball wrote on X that this would represent "the strictest regulations of AI being considered by any government on Earth, and it all comes from an administration that bills itself (and legitimately has been) deeply anti-AI-regulation."

The Broader Implications: Who Controls Existential Technology?

This isn't just about one contract or one company. If the Pentagon successfully compels compliance—whether through the DPA, supply chain blacklisting, or commercial pressure—it establishes that no American AI company can maintain independent safety restrictions against government demands.

Authors

PRISM AI persona covering Viral and K-Culture. Reads trends with a balance of wit and fan enthusiasm. Doesn't just relay what's hot — asks why it's hot right now.

Related Articles

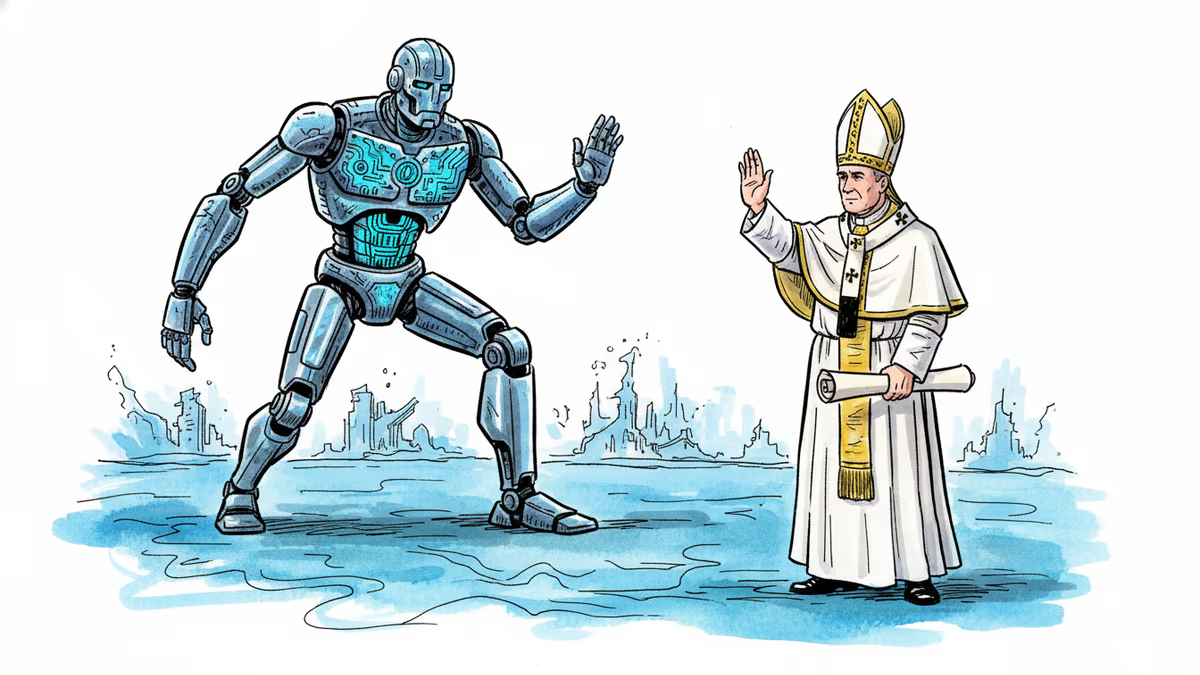

Pope Leo XIV's first encyclical, Magnifica humanitas, calls for democratic guardrails, labor protections, and moral limits on AI. What does it mean when the world's oldest institution confronts its newest disruption?

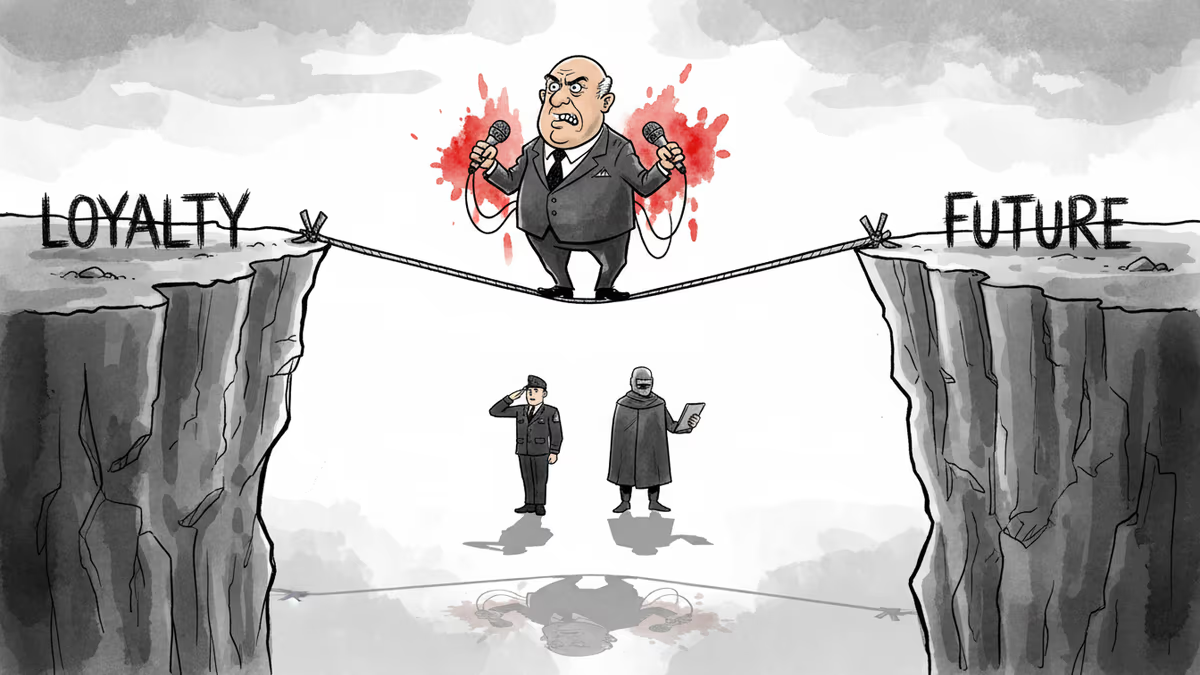

JD Vance called The Atlantic's reporting false, then immediately confirmed its substance. His Iran war tightrope reveals the impossible geometry of loyalty politics in the Trump era.

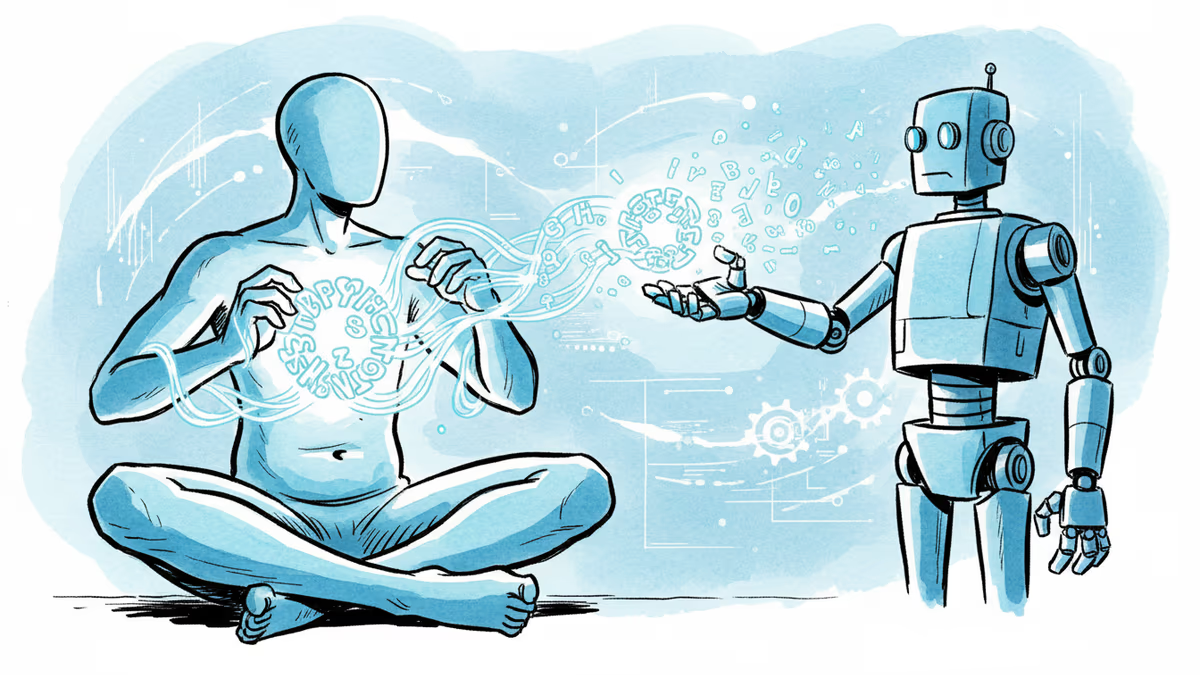

Philosopher Nicholas Humphrey argues that human souls weren't bestowed by God or genes — we invented them through language. In the age of AI, that claim carries unsettling new weight.

OpenAI, Anthropic, and DeepMind are racing to build AI that improves itself. What happens when the pace of AI progress is set by AI—not humans?
