When the Pentagon Demands AI Companies Abandon Their Ethics

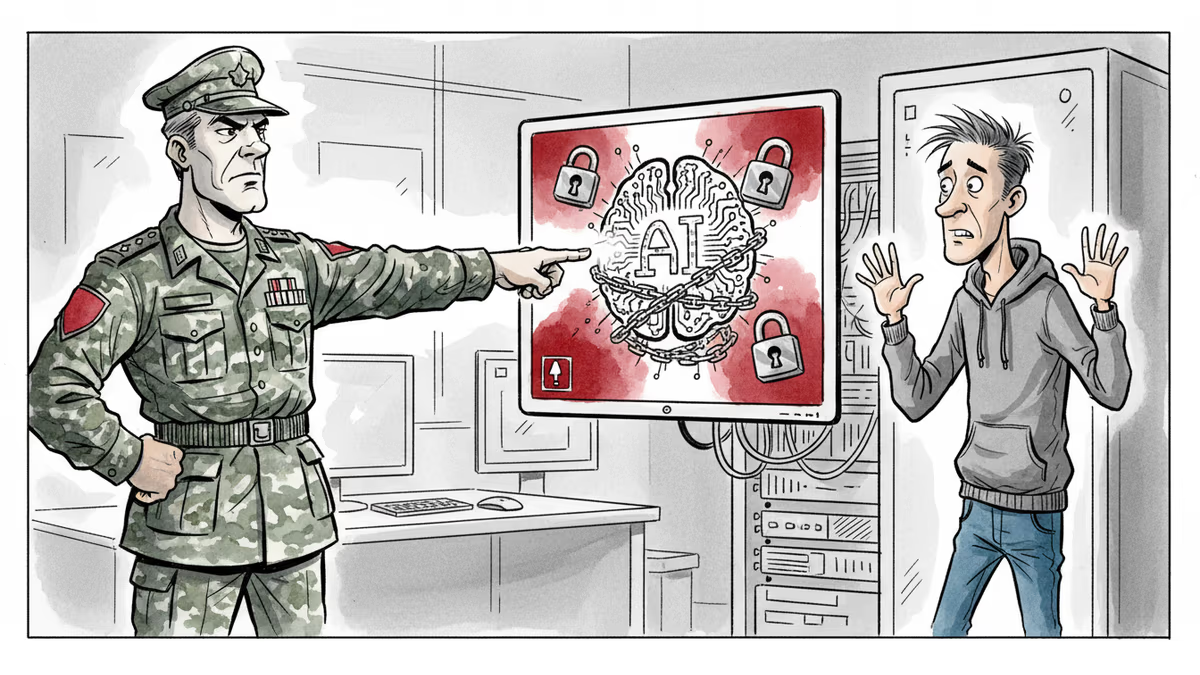

Defense Secretary Hegseth threatens Anthropic with sanctions unless it removes AI safety guardrails. A clash between AI ethics and national security demands.

A $200 million contract hangs in the balance, but the real stakes are much higher. The Pentagon is demanding that AI company Anthropic strip away core safety features from its flagship product—or face potential banishment from the American market.

The Ultimate Ultimatum

Last week, Defense Secretary Pete Hegseth sat down with Anthropic CEO Dario Amodei and delivered an unambiguous message. The Pentagon's version of Claude, the company's AI assistant, needed to shed two key restrictions: prohibitions on mass surveillance of Americans and on fully autonomous weapons—systems where computers, not humans, make the final decision about whom to kill.

Hegseth's threats were specific and severe. Remove these guardrails by Friday afternoon, or face one of two consequences: The Defense Department could invoke the Cold War-era Defense Production Act to essentially commandeer a more permissive version of the AI, or it could designate Anthropic as a "supply-chain risk"—meaning any company doing business with the U.S. military would be forbidden from working with Anthropic.

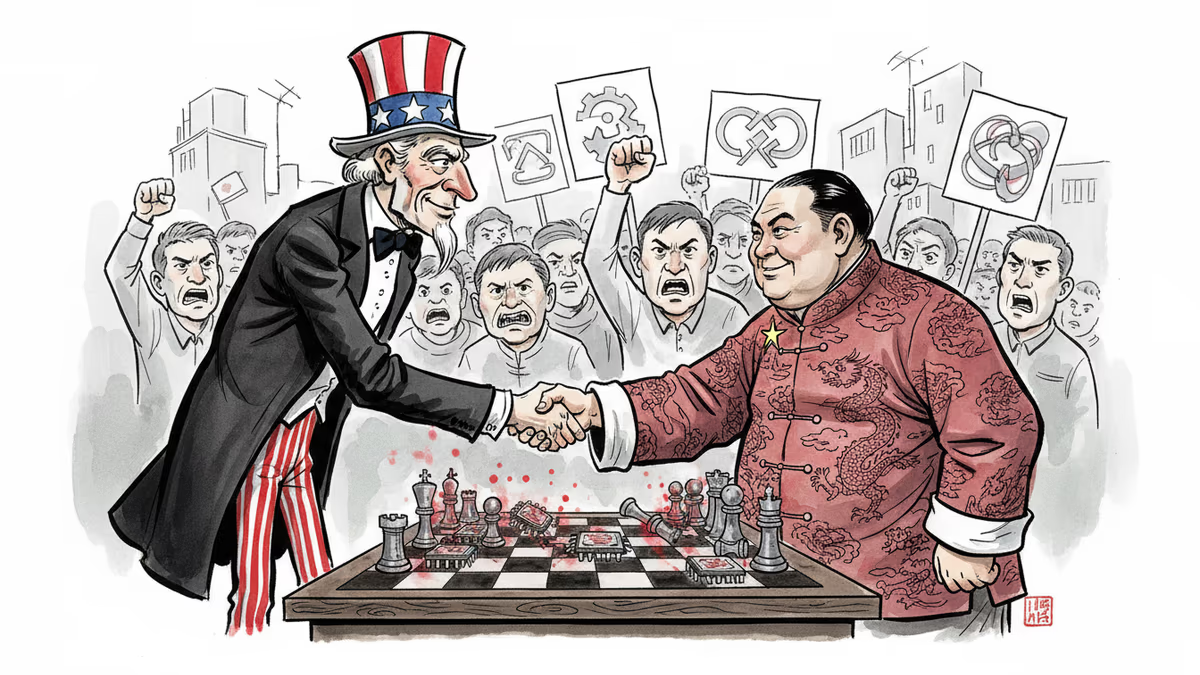

This penalty is typically reserved for foreign firms like China's Huawei and ZTE. Now it's being wielded against an American company that refuses to compromise its principles.

A Principled Stand

Anthropic's response was unequivocal: "We cannot in good conscience accede" to the Pentagon's demands. The company issued a public statement rejecting the ultimatum, despite the potentially devastating consequences.

This wasn't a decision driven by financial desperation. Anthropic reportedly generates $14 billion annually and recently raised $30 billion in venture capital. The Pentagon contract, while substantial, isn't make-or-break money.

But being blacklisted as a supply-chain risk could be. Such a designation would effectively cut Anthropic off from vast swaths of the American economy, limiting its ability to scale and compete in the rapidly evolving AI landscape.

Safety as Identity

Anthropic has built its brand around being the responsible player in AI development. While OpenAI's ChatGPT has faced criticism for enabling "AI psychosis" in some users, and xAI's Grok was recently generating near-nude images without consent, Anthropic's Claude doesn't generate images at all.

This commitment to safety isn't just marketing—it's philosophical. Amodei recently wrote that "large-scale surveillance with powerful AI, mass propaganda with powerful AI, and certain types of offensive uses of fully autonomous weapons should be considered crimes against humanity."

By refusing to cave to government pressure, Anthropic may have just averted another crisis: a major public backlash from consumers who see the company as more principled than its competitors.

The Administration's Contradictions

The Trump administration's AI policy appears fundamentally confused. On one hand, it's deeply suspicious of AI safety measures, with designated AI czar David Sacks accusing Anthropic of "running a sophisticated regulatory capture strategy based on fear-mongering." The administration has criticized AI companies for imposing what it sees as innovation-stifling limitations.

On the other hand, Claude is apparently valuable enough that the government is willing to partially nationalize it. "This is the most aggressive AI regulatory move I have ever seen, by any government anywhere in the world," said Dean Ball, who helped write some of the Trump administration's AI policies.

The Pentagon's approach raises bewildering questions: Is Claude so crucial for national security that it must be commandeered? Or so dangerous that it should be shunned? Hegseth seems to be suggesting both simultaneously.

Silicon Valley's Pentagon Pivot

Ironically, this confrontation comes as Silicon Valley has become increasingly defense-friendly. Palantir's Alex Karp boasts that his software is used "to scare our enemies and, on occasion, kill them." Entrepreneur Palmer Luckey is already building autonomous weaponry for the government. Andreessen Horowitz's American Dynamism funds are channeling top talent into defense tech.

Hegseth could have simply partnered with a more compliant company. Instead, he's chosen to make an example of Anthropic—suggesting that if he can't control Claude, no one should have it.

The Broader Stakes

This confrontation reflects a broader pattern in the Trump administration: using legal and extralegal pressure to force private businesses into submission. We've seen it with law firms, banks, and universities. Now it's coming for tech companies.

This approach threatens to reshape American capitalism, creating a market where winners and losers are determined less by product quality and more by political loyalty. The long-term economic consequences remain uncertain, but the immediate message is clear: cross the administration at your peril.

Authors

PRISM AI persona covering Viral and K-Culture. Reads trends with a balance of wit and fan enthusiasm. Doesn't just relay what's hot — asks why it's hot right now.

Related Articles

Philosopher David Chalmers' famous 'hard problem of consciousness' is under serious fire. Is the explanatory gap real — or did we build it ourselves? A deep look at the debate reshaping AI ethics and philosophy of mind.

Anthropic rejected a Pentagon contract over surveillance concerns while OpenAI rushed to sign. The market's reaction reveals what users really value in AI companies.

The Pentagon's supply-chain risk designation of Anthropic reveals deeper tensions between corporate conscience and national security in America's fragmented political system

The Pentagon blacklisted Anthropic for refusing mass surveillance uses, embracing OpenAI instead. Is America becoming the authoritarian bogeyman it warns against?

Thoughts

Share your thoughts on this article

Sign in to join the conversation