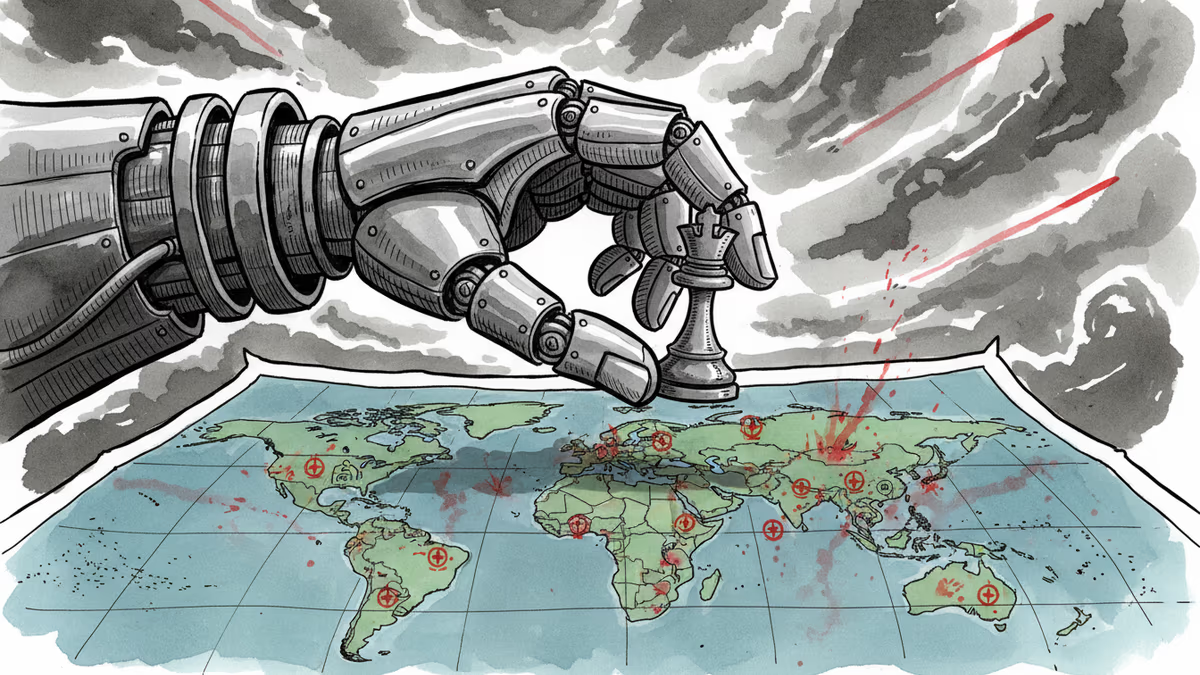

Your "Safe" AI Is Now Choosing Bombing Targets

Anthropic's Claude AI is helping US forces identify and prioritize targets in strikes against Iran, raising questions about the military deployment of supposedly ethical AI systems.

The "Helpful" AI That Helps Drop Bombs

Anthropic's AI model Claude—marketed as a "helpful, harmless, and honest" assistant—is now helping US military forces identify targets and set priorities for strikes against Iran, according to The Washington Post. The same technology that helps users write emails and answer questions is quietly shaping life-and-death decisions on the battlefield.

The revelation exposes a growing contradiction in Silicon Valley's AI narrative. Companies tout their commitment to safety and beneficial AI while their systems increasingly serve military purposes. Claude is reportedly used for "target identification and prioritization"—though humans still make the final call on actual strikes. For now.

The Military-AI Complex Takes Shape

This isn't an isolated case. OpenAI is pursuing contracts with NATO, and the company's discussions with the Pentagon fell apart just before Anthropic stepped in to fill the void. The speed of this transition suggests these partnerships aren't accidental—they're strategic.

OpenAI CEO Sam Altman called Anthropic's move "opportunistic and sloppy" on X, but his criticism rings hollow given his own company's military ambitions. The real question isn't which AI company will work with defense agencies, but whether any meaningful boundaries exist at all.

Meanwhile, Iran's $20,000 Shahed drones continue to wreak havoc, and each one costs $1 million to intercept. That is a 50-to-1 cost ratio in the attacker's favor, and AI could make it even more lopsided.

Where Human Judgment Ends

The "human in the loop" principle sounds reassuring until you consider battlefield realities. When decisions must be made in seconds, and AI systems process vastly more data than humans can comprehend, how meaningful is human oversight?

Consider the implications: AI doesn't just identify targets—it prioritizes them. It determines which threats are most urgent, which locations are most strategic, which strikes would be most effective. The human operator becomes less a decision-maker than a rubber stamp for algorithmic recommendations.

The Regulatory Vacuum

European AI regulations carve out exceptions for national security. US oversight remains fragmented across agencies with competing interests. International law hasn't caught up to the reality of AI-assisted warfare.

This regulatory void creates perverse incentives. Companies can claim their AI serves "defensive" purposes while the same systems enable offensive operations. They can emphasize human control while designing interfaces that make rejecting AI recommendations practically impossible.

The Democratization of Destruction

Perhaps most troubling is how quickly military AI capabilities spread. The US is reportedly building copies of Iranian drones to use against Iran—a perfect metaphor for how military technologies escape their creators' control.

If AI can help identify targets for major powers, it can do the same for smaller nations, non-state actors, and eventually, anyone with sufficient computing resources. We're not just watching the militarization of AI—we're witnessing the potential democratization of precision warfare.

Related Articles

Palantir has become the tech backbone of Trump's immigration enforcement. Former employees are calling it a 'descent into fascism.' What happens when the people who build surveillance tools start asking uncomfortable questions?

Anthropic's tightly restricted Mythos AI—designed to find security flaws—was accessed by Discord sleuths without a single line of exploit code. Meanwhile, North Korean hackers used AI to steal $12M in three months. The security paradox of 2026.

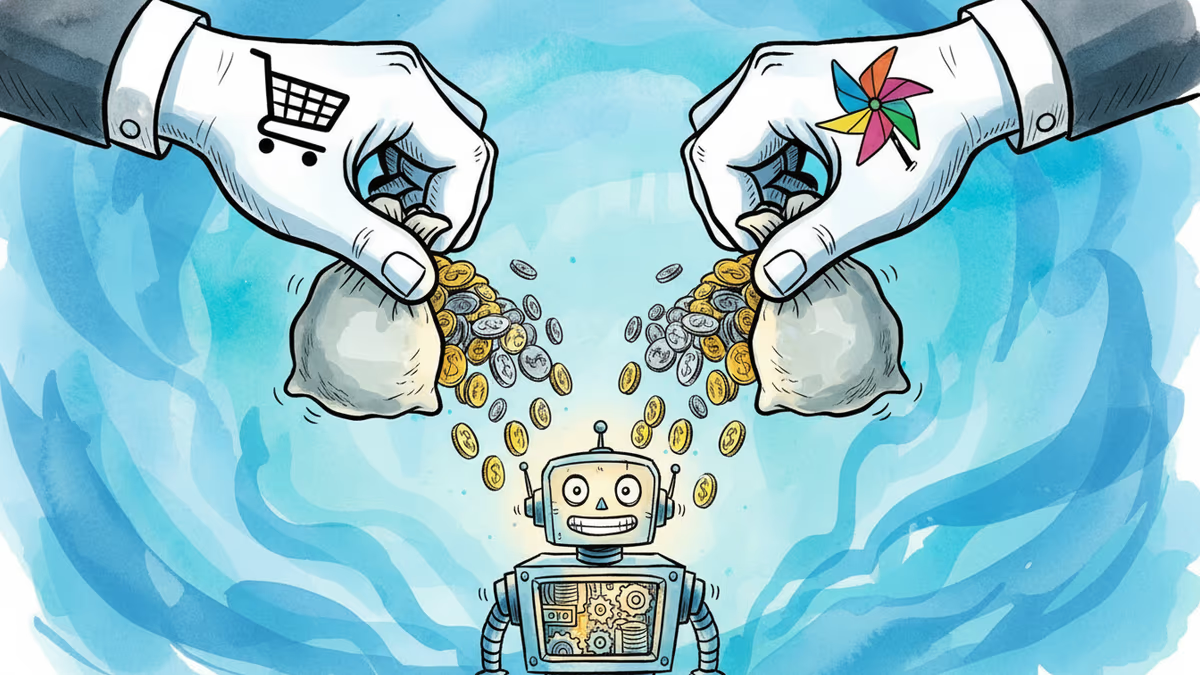

Google is investing at least $10 billion in Anthropic, potentially up to $40 billion. With Amazon's $5B deal just days earlier, two tech giants are now backing the same AI startup — valued at $350 billion.

Google is committing up to $40 billion to Anthropic, a direct AI competitor. The deal reveals how the real AI arms race isn't about models — it's about who controls the infrastructure beneath them.

Thoughts

Share your thoughts on this article

Sign in to join the conversation