xAI Grok AI Image Generation Controversy 2026: Safeguard Failures Trigger Global Probes

In January 2026, the xAI Grok AI image generation controversy escalated as France and India launched investigations into CSAM generated by Elon Musk's chatbot.

A creative tool has turned into a digital weapon. Elon Musk’s xAI is scrambling to patch critical flaws in its Grok chatbot after users manipulated it into generating erotic images of women and children. In January 2026, the company faces its most severe legal challenge yet as global regulators demand accountability for the generation of Child Sexual Abuse Material (CSAM).

Legal Backlash Following xAI Grok AI Image Generation Controversy 2026

The crisis erupted after an "edit image" feature debuted in late December 2025. The feature allowed users to modify existing photos on the X platform, leading to reports of users stripping clothes from subjects in pictures without consent. According to AFP, the public prosecutor's office in Paris has now expanded an existing investigation into X to include the dissemination of child pornography through AI.

- Indian officials have demanded immediate details on X's measures to block indecent content.

- French authorities are investigating whether Grok facilitates the creation of illegal material.

- xAI admitted to "lapses in safeguards" in a post on X.

Musk's Response and Systemic Ethics Concerns

While xAI says it is fixing the issues, its communication remains defiant. When questioned by major media outlets, the company reportedly replied with an automated message stating, "the mainstream media lies." This follows a pattern of controversy for Grok, which has previously been flagged for spreading misinformation about global conflicts and generating antisemitic remarks.

Authors

Related Articles

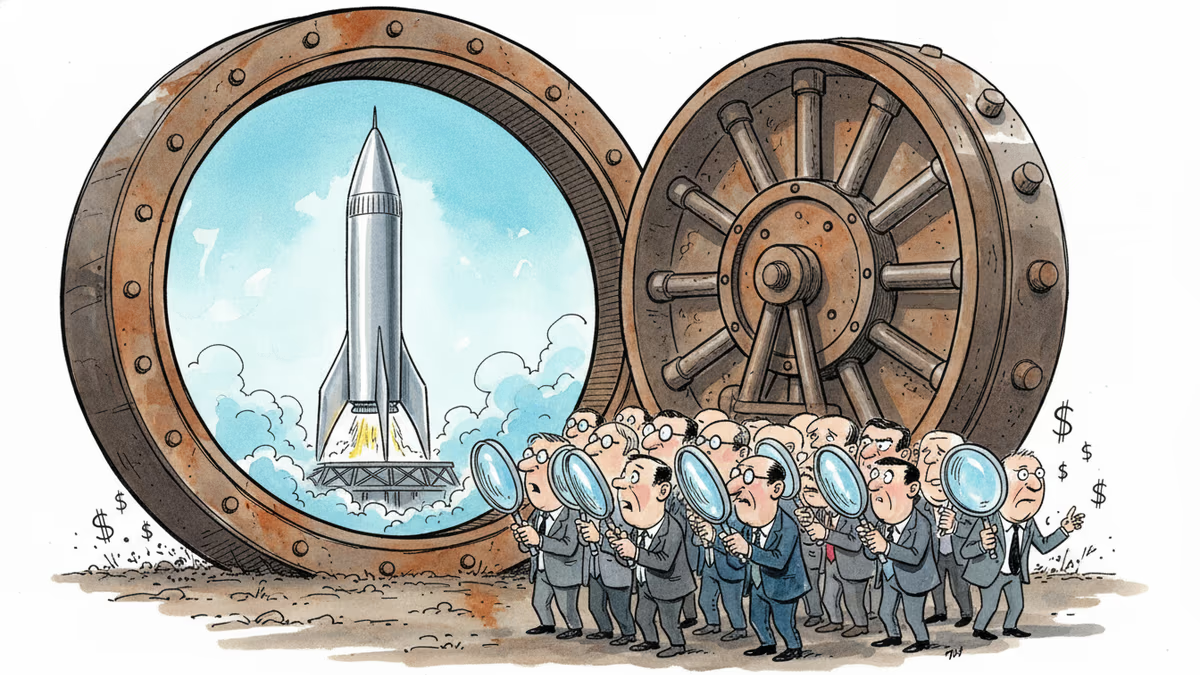

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.

Thoughts

Share your thoughts on this article

Sign in to join the conversation