Why AI Whistleblowers Stay Silent (It's Not What You Think)

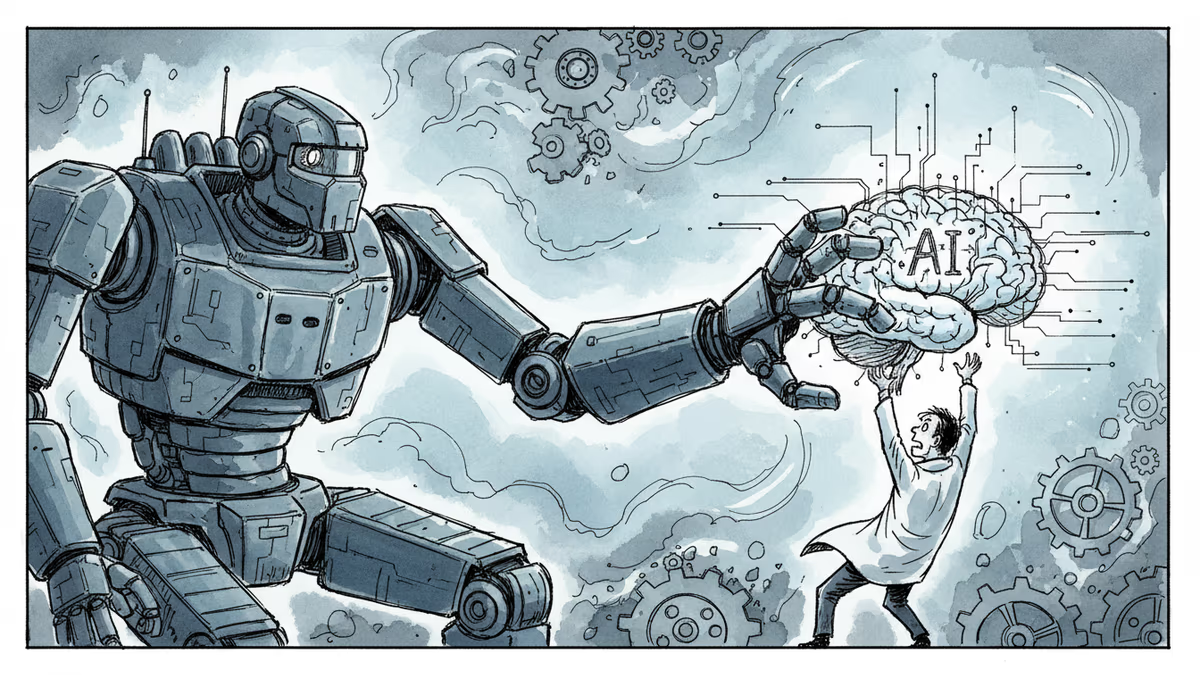

OpenAI and Anthropic researchers quit publicly, but most stay quiet. The hidden mechanisms keeping AI workers from speaking up reveal a darker truth about tech power.

Three Resignations in 48 Hours Exposed Something Bigger

When Anthropic safety researcher Mrinank Sharma quit and wrote "The world is in peril" on X, it wasn't just another disgruntled employee story. Days later, OpenAI researcher Zoë Hitzig announced her resignation in The New York Times over "deep reservations" about ad targeting. Then came news that OpenAI had fired a safety executive who'd raised concerns about erotic content on ChatGPT.

But here's what's chilling: these three represent a tiny fraction of those who want to speak up but can't. Mary Inman, who's represented whistleblowers for over three decades—including Facebook's Frances Haugen—says the real story is in the silence.

The Invisible Chains

Most people think NDAs are the main barrier. They're wrong. "The biggest problem isn't just nondisclosure agreements," Inman tells me. "It's mandatory arbitration clauses that ensure disputes never see daylight."

Here's how the system really works: The confidentiality agreement you sign walking in is completely different from the one you sign walking out. Exit agreements are far more restrictive, often containing language that violates SEC rules protecting whistleblower rights.

Then there's the equity trap. Refuse to sign a non-disparagement clause? Lose your stock options. In Silicon Valley, that's not just money—it's your entire financial future.

Trump Era, New Rules

"When I represented Frances Haugen, it feels like an entirely different time," Inman reflects. What's changed isn't just the politics—it's the narrative.

AI companies now wrap themselves in the "AI arms race" flag. Any criticism gets reframed as helping China win. "There's no appetite to slow them down," Inman notes. The Trump administration's tech-friendly stance has emboldened companies to believe they're "untouchable."

Add to this the industry's heavy reliance on immigrant workers, many of them on visas tied to their jobs, and you have a workforce with strong reasons not to rock the boat. "The impediments are very high," Inman admits.

'Tinder for Whistleblowers'

Enter Psst, the nonprofit Inman co-founded. Their solution? "Collectivize the act of whistleblowing."

Here's how it works: Employees deposit encrypted information into a "digital safe." The keys only unlock when there are matches—multiple people from the same organization with similar concerns. "You may not risk your career based on your one little piece of the puzzle, but you'll think about it if others have brought other pieces."

It's particularly powerful for global workers. "Content moderators in Nigeria, data labellers in the Philippines, robot operators across Asia—they can all participate," Inman explains. "We don't want them feeling: 'I'm in sub-Saharan Africa, what can I do?'"
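The matching rule Inman describes, where nothing unlocks until several people from the same organization raise similar concerns, can be sketched in a few lines of Python. This is a toy illustration only, not Psst's actual system: the `DigitalSafe` class, the `threshold` parameter, and matching on an exact organization-and-concern label are all assumptions made for the example, and opaque byte strings stand in for encrypted submissions (no real cryptography is performed here).

```python
from collections import defaultdict


class DigitalSafe:
    """Hypothetical threshold-release safe (illustrative; not Psst's design)."""

    def __init__(self, threshold=2):
        # Assumed rule: this many distinct depositors must share a concern.
        self.threshold = threshold
        # (organization, concern) -> {depositor: sealed payload}
        self._deposits = defaultdict(dict)

    def deposit(self, organization, concern, depositor, sealed):
        # One entry per depositor, so repeat submissions by the same
        # person never count as a second voice.
        self._deposits[(organization, concern)][depositor] = sealed

    def matched(self, organization, concern):
        # Release nothing until enough distinct depositors line up.
        entries = self._deposits.get((organization, concern), {})
        if len(entries) < self.threshold:
            return []
        return list(entries.values())


safe = DigitalSafe(threshold=2)
safe.deposit("ExampleCorp", "ai-washing", "worker-a", b"sealed-1")
print(safe.matched("ExampleCorp", "ai-washing"))  # still locked: one depositor

safe.deposit("ExampleCorp", "ai-washing", "worker-b", b"sealed-2")
print(safe.matched("ExampleCorp", "ai-washing"))  # unlocked: two depositors
```

A real service would have to judge whether concerns are "similar" with something far looser than an exact label, and would need to protect depositor identities. The sketch only captures the idea that a single piece of the puzzle stays sealed until others arrive.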

The New Battlegrounds

So what could AI workers blow the whistle on? Inman sees several emerging areas:

AI Washing: Companies claiming AI capabilities they don't have, potentially misleading investors and consumers.

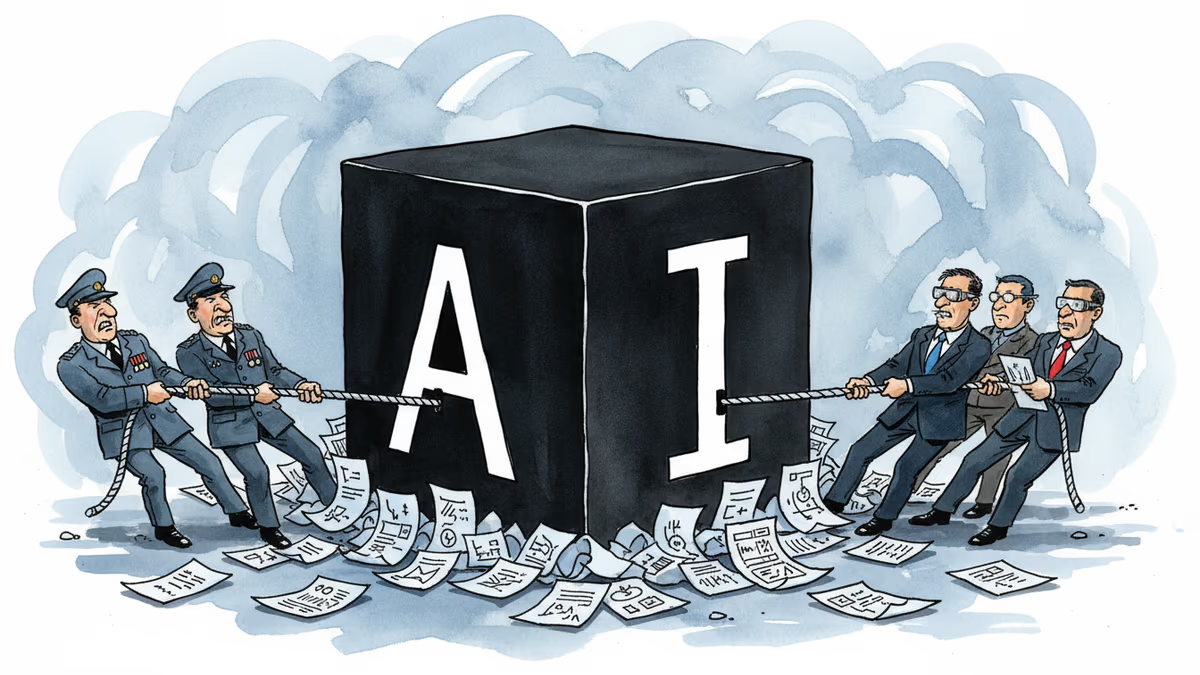

Military Entanglements: Every frontier AI lab now pairs with defense contractors—Meta with Anduril, Anthropic with Palantir. "AI is used in border surveillance. You can definitely become a whistleblower in these spaces."

Sanctions Violations: Work facilitating operations in restricted countries could trigger national security concerns.

Environmental Cover-ups: The true carbon footprint of AI data centers remains largely hidden.

The Generational Shift

There's reason for cautious optimism. "My children's generation looks at Silicon Valley differently because of Elizabeth Holmes and Sam Bankman-Fried," Inman observes. "The bloom is off the rose."

Public trust in Big Tech has eroded. People are more skeptical of AI promises, more aware of potential harms. "A lot of people have a more jaundiced eye towards tech now. So maybe we'll have another awakening."

Authors

Related Articles

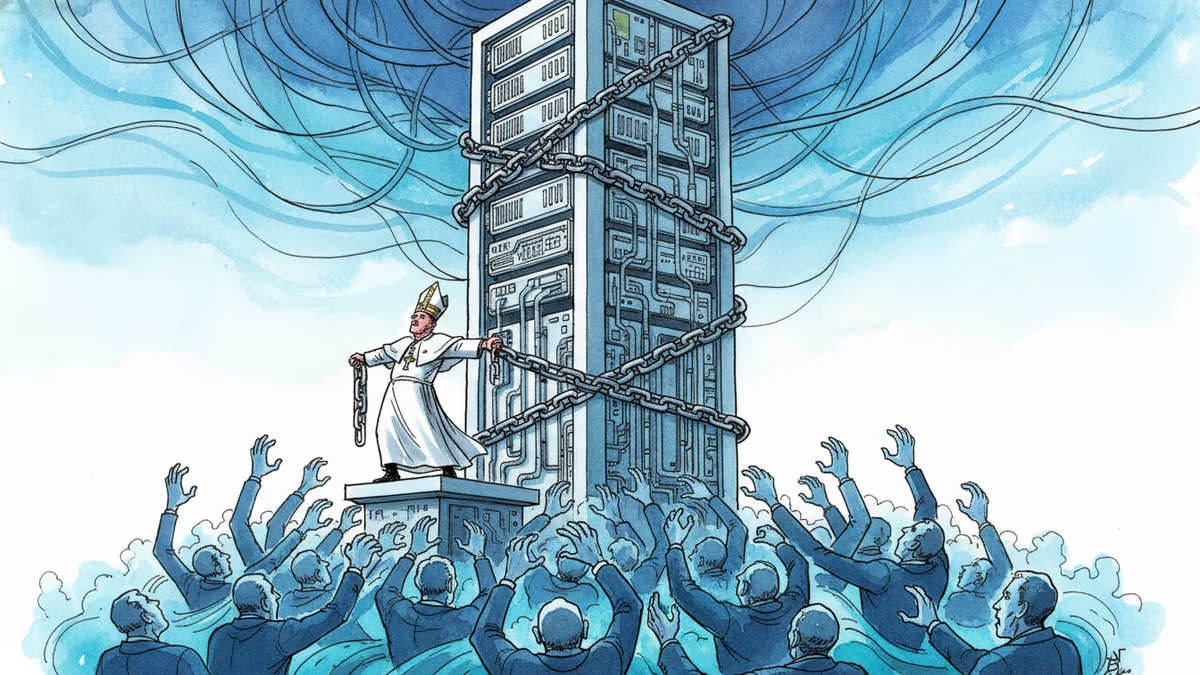

Pope Leo XIV's first encyclical frames AI not as a technology problem but a power problem. Who controls the algorithm controls reality—and that's a political question, not a spiritual one.

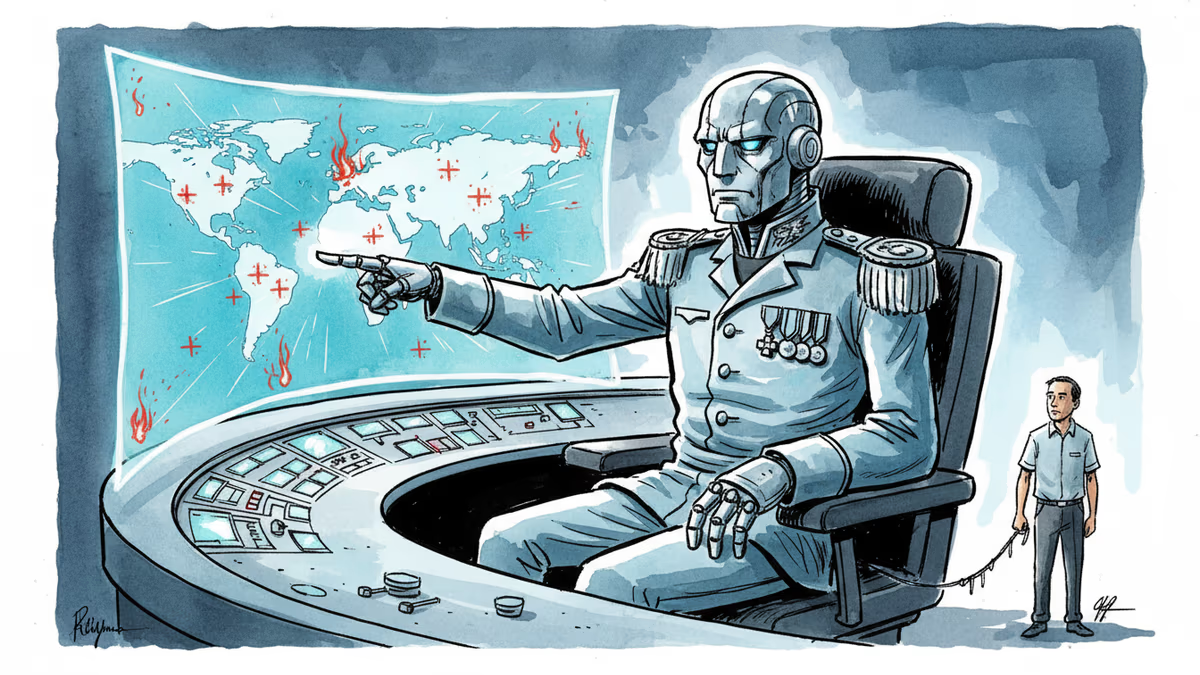

Anthropic's Claude AI is embedded in US military operations—from the capture of Maduro to the Iran war. A Pentagon dispute is exposing what "responsible AI" actually means in wartime.

OpenAI faced internal backlash over Pentagon contracts, revealing deeper questions about AI military use, transparency, and corporate accountability in defense partnerships.

Trump's explosive reaction to Anthropic's military contract refusal reveals the growing tension between AI ethics and national security demands.

Thoughts

Share your thoughts on this article

Sign in to join the conversation