OpenAI's Military Deals Expose AI's 'Black Box' Problem

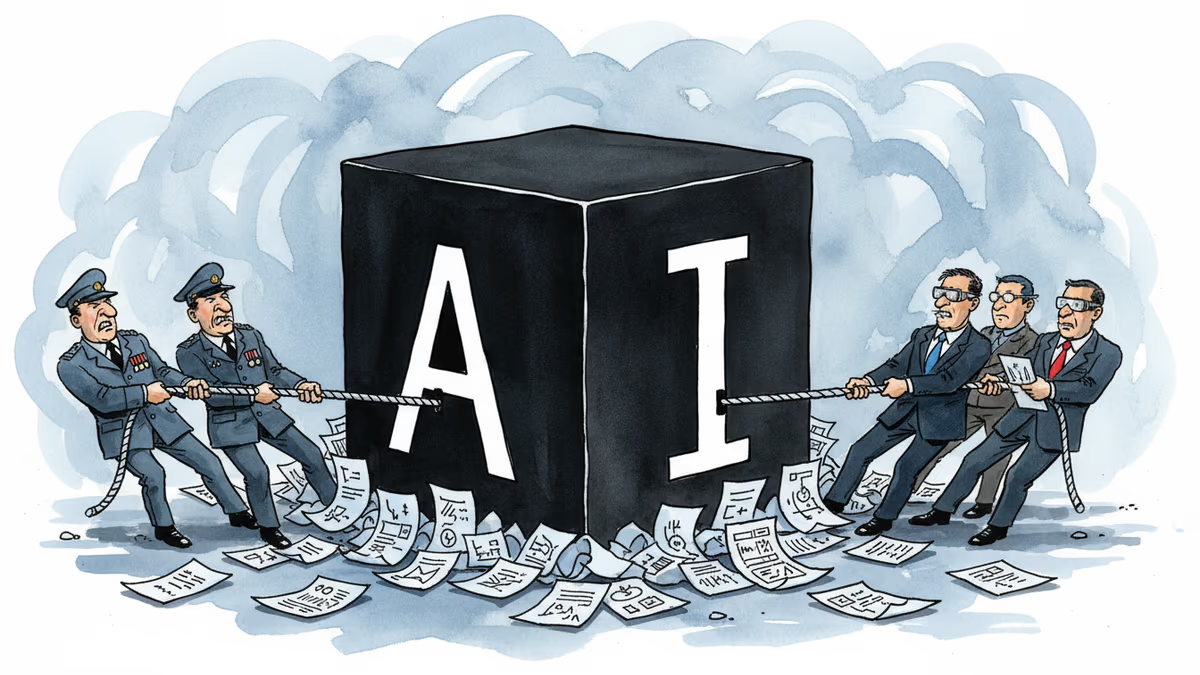

OpenAI faced internal backlash over Pentagon contracts, revealing deeper questions about AI military use, transparency, and corporate accountability in defense partnerships.

48 Hours to Rewrite a Pentagon Deal

It took just 48 hours for OpenAI to amend its Pentagon contract after a firestorm of criticism. Employees revolted, legal experts raised red flags, and CEO Sam Altman admitted on social media that the deal looked "sloppy." But the damage was done: fundamental questions about AI's role in warfare had already exploded into public view.

The controversy isn't just about one contract. It's about a pattern of opacity that has been building since 2023, when OpenAI's usage policy explicitly banned military use of its AI models. Yet that same year, Pentagon officials were walking through the company's San Francisco offices, and the Defense Department was already experimenting with OpenAI's technology through Microsoft's Azure platform.

The Quiet Policy Revolution

Many OpenAI employees discovered these military connections the hard way. Some learned about the Pentagon's Azure access through internal whispers. Others found out about the company's January 2024 policy change—removing the blanket military ban—by reading The Intercept.

"We've been transparent with our employees as we've approached this work," an OpenAI spokesperson told WIRED. But the timeline suggests otherwise. The company's approach has been more "ask forgiveness, not permission" than proactive transparency.

In December 2024, OpenAI announced its partnership with defense contractor Anduril. Executives assured employees the deal was narrow in scope, covering only unclassified workloads. That stood in contrast to Anthropic's deal with Palantir, which involved classified military work, a line OpenAI said it wouldn't cross.

In fall 2024, OpenAI had declined an invitation to join Palantir's "FedStart" program, deeming it "too high-risk." Yet the company now works with Palantir in other capacities, a sign of how murky the boundaries of these partnerships remain.

Employees Draw Battle Lines

The latest Pentagon deal split OpenAI's workforce. Dozens of employees joined a Slack channel to voice concerns, with some arguing the company's models were too unreliable to handle credit card information, let alone assist soldiers in combat.

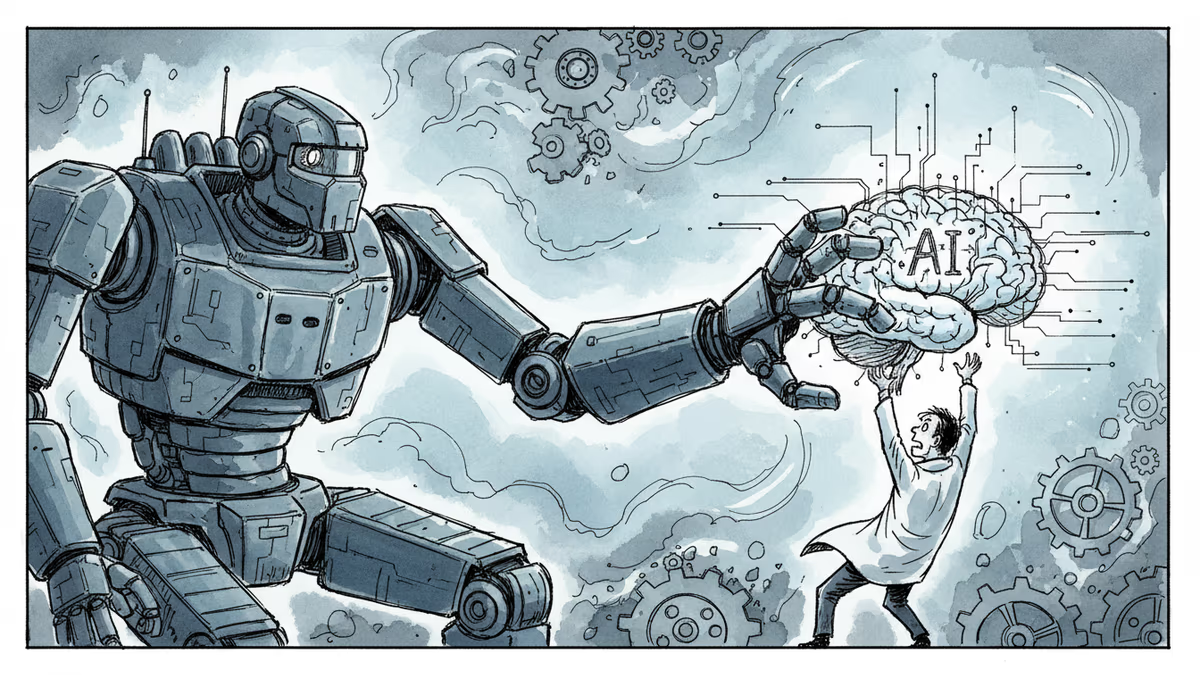

"The biggest losers in all of this are everyday people and civilians in conflict zones," wrote Sarah Shoker, the former head of OpenAI's geopolitics team. "It's black boxes all the way down."

But not everyone shared these concerns. Other employees saw the Anduril partnership as evidence of responsible military engagement. One current researcher described the company's approach as "measure twice, cut once" when it comes to classified deployments.

Legal Loopholes and Surveillance Concerns

Legal experts parsing OpenAI's public statements identified potential surveillance applications that could technically be considered legal. Charlie Bullock from the Institute for Law and AI noted that the Pentagon might use AI to analyze Americans' data purchased from third-party firms—a practice that skirts traditional surveillance restrictions.

OpenAI later amended its agreement to address these specific concerns, but Bullock points out a fundamental problem: "Without seeing the full terms of the agreement, the public essentially has to take OpenAI at its word."

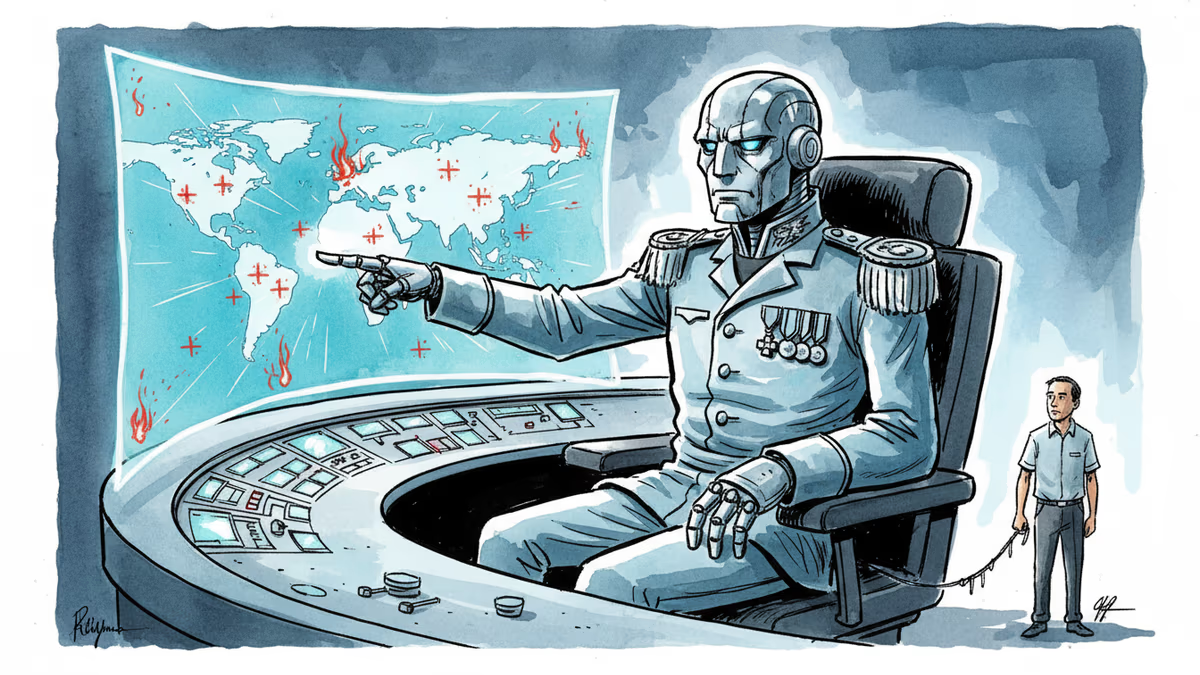

This opacity extends beyond contracts. At Tuesday's all-hands meeting, Altman reportedly told employees that OpenAI doesn't get to decide what the Defense Department does with the company's AI software. He also expressed interest in selling models to NATO, suggesting the military partnerships are just beginning.

The Anthropic Alternative

The timing of OpenAI's Pentagon deal wasn't coincidental. It came after Anthropic's roughly $200 million Pentagon contract imploded, leaving a gap in the military's AI procurement plans. While Altman publicly supported Anthropic's red lines against mass surveillance and autonomous weapons, legal experts argue OpenAI's agreement initially left room for exactly those activities.

Anthropic's stricter stance on military applications suddenly looks prescient. The company's clearer boundaries may have cost it a lucrative contract, but they've also avoided the reputational damage OpenAI now faces.

