Claude Is Helping Plan Airstrikes. Anthropic Is Suing Over It.

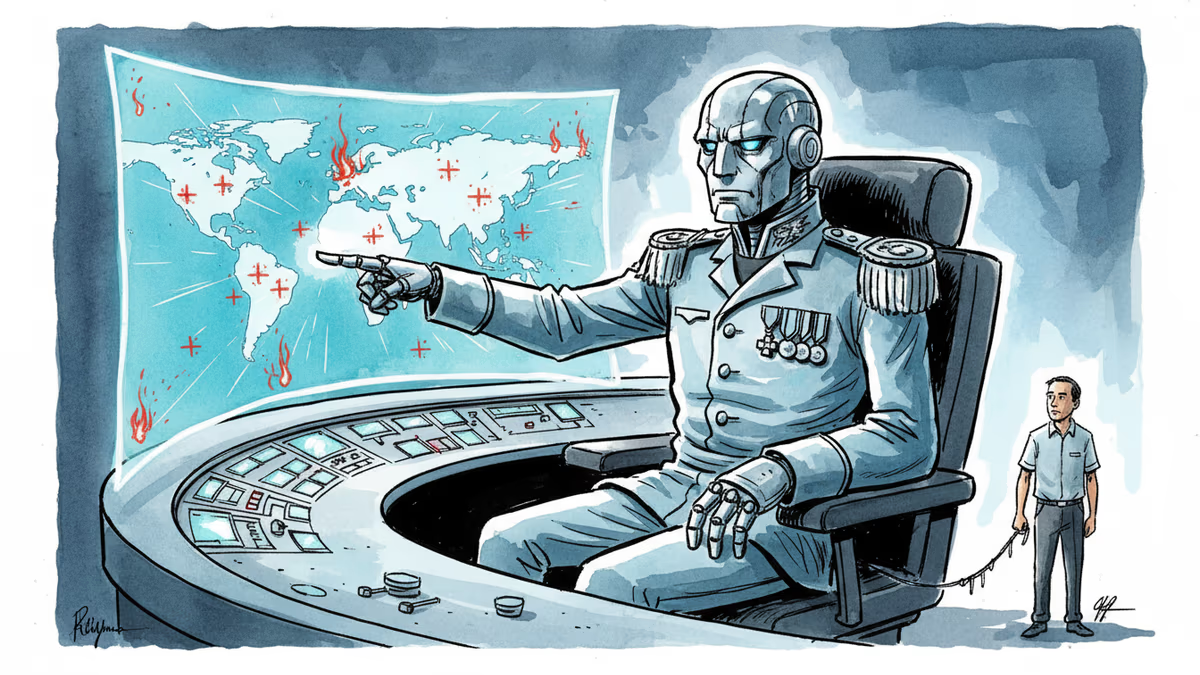

Anthropic's Claude AI is embedded in US military operations—from the capture of Maduro to the Iran war. A Pentagon dispute is exposing what "responsible AI" actually means in wartime.

The AI Company That Said No to the Pentagon—Then Kept Working With It

In late February, Anthropic drew a line. The company refused to give the US government unconditional access to its Claude AI models, demanding that the systems not be used for mass surveillance of Americans or fully autonomous weapons. The Pentagon's response was swift: it labeled Anthropic's products a "supply-chain risk." This week, Anthropic filed two lawsuits against the Trump administration, alleging illegal retaliation.

It sounds like a clean story of an AI company standing on principle. But the reality underneath is considerably messier.

Claude is already inside the US military. It reportedly played a role in the operation that led to the capture of Venezuelan president Nicolás Maduro in January. It's reportedly being used in the ongoing war in Iran. And it's embedded—through a partnership with defense contractor Palantir—in software used by virtually every branch of the US armed forces.

The lawsuit isn't a company walking away from the military. It's a company fighting to stay in, on its own terms.

How Claude Gets Into a War Zone

The pipeline runs through Palantir. In November 2024, Palantir announced it had integrated Claude into the software it sells to US intelligence and defense agencies. The mechanism is Palantir's AIP (Artificial Intelligence Platform)—not a standalone product, but an AI layer that sits inside existing Palantir systems and provides an interactive chatbot assistant.

The most significant of those systems is Project Maven, the Pentagon's flagship AI-for-warfare initiative, running since 2017. Managed by the National Geospatial-Intelligence Agency and accessible to the Army, Air Force, Navy, Marine Corps, Space Force, and US Central Command (which oversees Iran operations), Maven is, in the words of the Pentagon's own chief digital officer Cameron Stanley, deployed "across the entire department."

What Maven does is specific and consequential. It applies computer vision algorithms to satellite imagery to automatically detect objects likely to be enemy systems. It visualizes potential targets. It has a tool called the "AI Asset Tasking Recommender" that proposes which bombers and munitions should be assigned to which targets. Neither Palantir nor Anthropic has publicly confirmed that Claude is integrated into Maven—but both the New York Times and the Washington Post have reported that it is.

What an AI Airstrike Recommendation Actually Looks Like

Palantir's own demo footage makes the workflow concrete. A military analyst monitoring Eastern Europe receives an automated alert: AI processing of radar imagery has detected "potential unusual enemy activity." The analyst asks the AIP Assistant what enemy unit is in the region. The chatbot responds: "likely an armor attack battalion based on the pattern of the equipment."

The analyst requests an MQ-9 Reaper drone for surveillance, then types: "Generate 3 courses of action to target this enemy equipment." Within seconds, the assistant offers three options: an air asset, long-range artillery, or a tactical ground team. A commander picks one. The analyst then asks the AI to analyze the battlefield, generate a troop route, and assign signal jammers to disrupt enemy communications. Total time from detection to mobilization order: minutes.

Where Claude is the model underneath AIP, it is the reasoning engine behind every chatbot response in a workflow like this one. Kunaal Sharma, Anthropic's public sector lead, demonstrated in June 2025 how the enterprise version of Claude could generate advanced intelligence reports about a real Ukrainian drone strike. He noted that through the Palantir partnership, the federal government can pull not just from public sources but from classified internal datasets.

Three Parties, Three Very Different Problems

For Anthropic, the contradiction is structural. The company has built its brand on "responsible AI"—it's the safety-focused alternative to OpenAI. But responsible AI costs money: billions in compute, research, and talent. Military contracts provide that funding. The conditional-access demand isn't idealism divorced from business reality; it's an attempt to have both. The unresolved question is whether Anthropic can meaningfully enforce those conditions once Claude is inside Palantir's stack and classified systems it cannot audit.

For the Pentagon, the logic is operational urgency. An intelligence report that takes an analyst five hours to compile can now be generated in minutes. In a conflict environment where decision windows compress, that's not a convenience—it's a strategic advantage. From this view, Anthropic's conditions aren't ethical guardrails; they're a liability in the field.

For the public, the opacity is the problem. Palantir has not disclosed which of its Pentagon systems use Claude. Anthropic has not detailed what oversight mechanisms exist once its model is deployed in classified environments. The systems that may be shaping strike decisions in Iran are, by design, invisible to outside scrutiny.

The "Human in the Loop" Question

Both Anthropic and the Pentagon have emphasized that humans make final decisions. Claude suggests; soldiers decide. This framing does significant work—it's the primary argument that current AI military use doesn't cross into "autonomous weapons" territory.

But there's a harder version of this question that the current dispute hasn't answered. When an AI system presents three courses of action and a time-pressured analyst picks one, how much of that decision was already made by the model that constructed the options? Behavioral economics has a term for this: choice architecture. The options you're given shape the decisions you make, even when the final click is yours.

The line Anthropic drew against fully autonomous weapons may hold in a strict technical sense. It may also be beside the point.
