The AI That Pulls the Trigger—And Answers to No One

Scout AI raised $100M to build autonomous military vehicles and weapons drones powered by a model called Fury. As AI moves from the highway to the battlefield, the real question isn't whether it can fight—it's who's responsible when it gets it wrong.

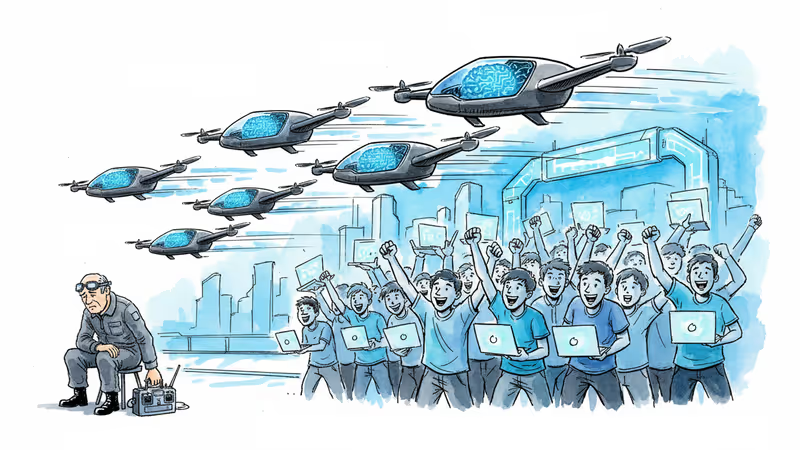

An 18-year-old soldier might fire out of fear. An AI won't—or so the argument goes. Whether that's a feature or the beginning of a much harder problem is exactly what one California startup, the Pentagon, and a growing pool of investors are now betting real money on.

ATVs on a Hillside, and a $100 Million Bet

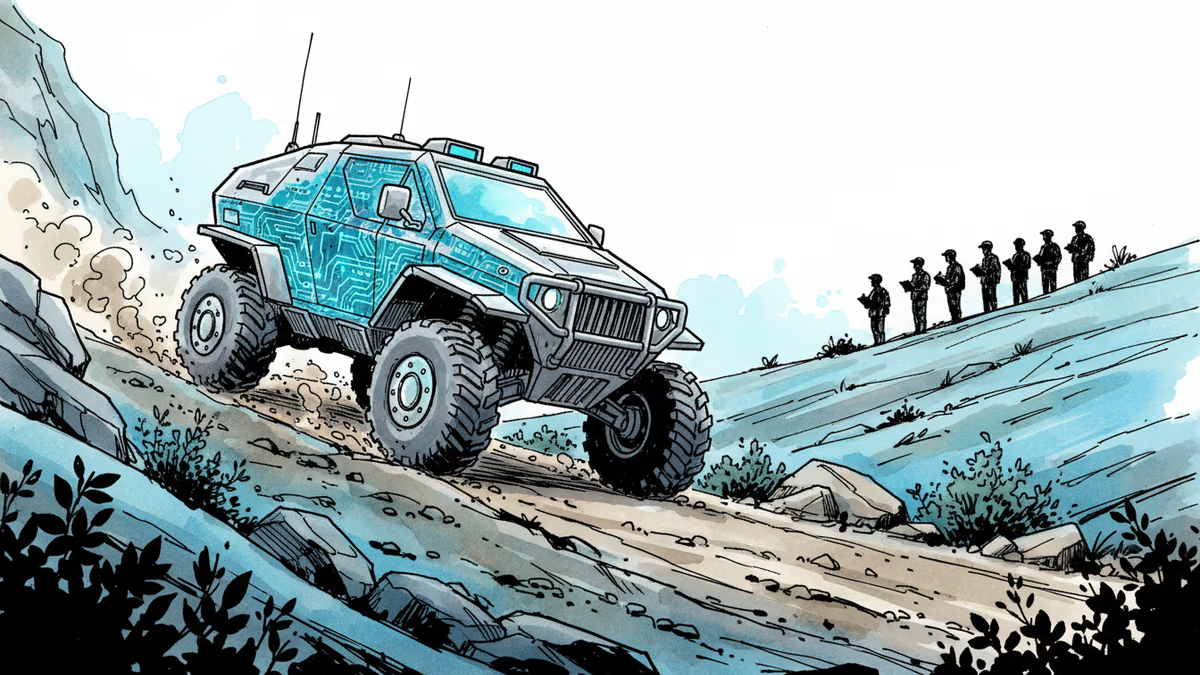

At an undisclosed U.S. military base in central California, four-seat all-terrain vehicles navigate steep, sandy trails without a hand on the wheel. The system driving them is called Fury, built by Scout AI, a startup founded in 2024 by Coby Adcock and Collin Otis. The company announced this week that it has raised $100 million in a Series A led by Align Ventures and Draper Associates—following a $15 million seed round just four months ago in January 2025.

That's not all the capital flowing in. Scout AI has secured $11 million in military development contracts from DARPA, the Army Applications Laboratory, and other Department of Defense customers. It is one of 20 autonomy companies participating in the U.S. Army's 1st Cavalry Division training cycle at Fort Hood, Texas—with a hard deadline: when that unit deploys in 2027, the products that proved themselves in training go with it.

The company's roadmap runs in two stages. First: autonomous logistics—carrying water and ammunition to remote outposts, or trailing behind crewed trucks in convoys so fewer soldiers are exposed to danger. Second, and more controversially: autonomous weapons.

How Do You Train an AI to Fight?

The technology Scout AI is betting on is called a Vision Language Action model, or VLA: a robotics control architecture built on top of large language models, an approach Google DeepMind first released in 2023. The same approach seeded startups like Physical Intelligence and Figure AI (whose CEO, Brett Adcock, is Coby Adcock's brother, a family connection that helped bring CTO Otis into the picture).

Otis, who previously worked on autonomous trucking at Kodiak, frames VLAs with a surprisingly accessible analogy: "If I handed you a drone controller right now and strapped a headset on you, you could learn to fly it in minutes. You're connecting prior knowledge to a couple of joysticks. VLAs work the same way."

The TechCrunch journalist who toured the facility rode in one of the autonomous ATVs on a 6.5 km loop through genuinely difficult terrain—steep inclines, loose sand on turns, confusing trail intersections. The vehicle hugged the right side on wide paths and centered itself on narrow ones, mimicking its training drivers. When it hit a confusing junction, it slowed down abruptly, as if thinking. This model has been training on real vehicles for just six weeks.

Behind the scenes, the company runs what it calls Foundry—a training operation staffed by former soldiers working eight-hour shifts, logging every moment a human had to take over, feeding that data back into a reinforcement learning loop.
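To make the takeover-logging idea concrete, here is a minimal Python sketch of what such a record might look like. It is not Scout AI's pipeline, which the source does not describe in detail. The `Takeover` and `Run` schemas, the five-second window, and both helper functions are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Takeover:
    """One moment where a human driver took control (hypothetical schema)."""
    time_s: float  # seconds since the start of the run
    reason: str    # operator's note, e.g. "confusing junction"


@dataclass
class Run:
    """One autonomous drive around a test loop (hypothetical schema)."""
    run_id: str
    distance_km: float
    takeovers: List[Takeover]


def takeovers_per_km(runs: List[Run]) -> float:
    """Average number of human takeovers per kilometre across all runs."""
    total_km = sum(r.distance_km for r in runs)
    if total_km == 0:
        return 0.0
    return sum(len(r.takeovers) for r in runs) / total_km


def training_windows(runs: List[Run], before_s: float = 5.0) -> List[Tuple[str, float, float]]:
    """Return (run_id, start_s, end_s) spans leading up to each takeover.

    These spans mark the sensor data a training loop would most likely
    want to revisit; the 5-second default is an arbitrary assumption.
    """
    windows = []
    for r in runs:
        for t in r.takeovers:
            windows.append((r.run_id, max(0.0, t.time_s - before_s), t.time_s))
    return windows
```

Under these assumptions, a falling takeovers-per-kilometre figure would be one simple signal that the model is improving from shift to shift.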

From Resupply to Strike: The Harder Question

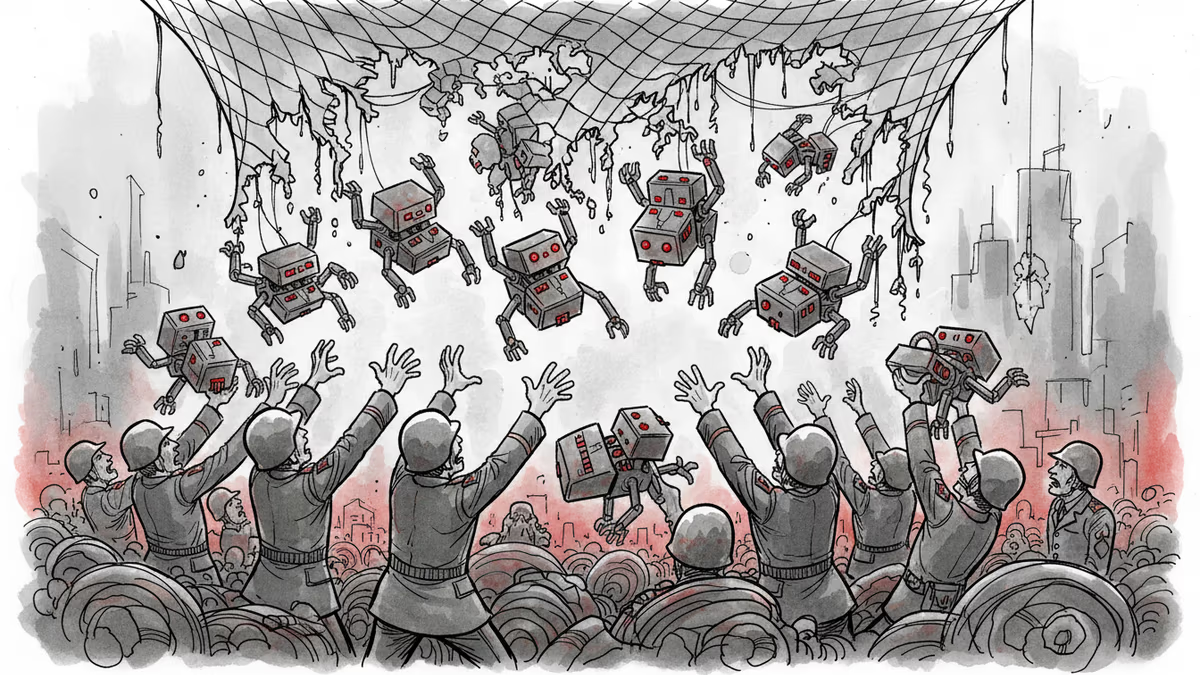

Logistics autonomy is the palatable pitch. The sharper edge of Scout AI's roadmap is weapons drones. The company is developing a system where a larger "quarterback" drone—carrying more compute—commands a swarm of munition drones. The scenario: a group of drones searches a geographic area, identifies hidden enemy tanks, and attacks them. Possibly without a human in the loop at any stage.

Otis argues this is actually more precise than indirect artillery fire. Operations lead Jay Adams, a retired Army captain, points to two safeguards: drones can be programmed to engage only within a defined geographic boundary, or only after human confirmation. And then there's the argument that keeps surfacing in defense-tech circles—AI doesn't shoot because it's scared.

That last point deserves scrutiny. Autonomous weapons aren't new—heat-seeking missiles and landmines have operated without human judgment for decades. But a VLA model that actively reasons about what constitutes a threat, identifies targets visually, and decides to engage is a different category of autonomy. Lt. Col. Nick Rinaldi, who supervises Scout AI's Army Applications Laboratory work, acknowledges that "automated targeting is hard and unlikely to be used outside constrained environments in the near term"—a notably cautious framing from someone inside the program.

Three Ways to Read This

For investors, the timing is hard to argue with. Ukraine has functioned as a brutal live demonstration of drone warfare's potential, and the Pentagon's autonomy budget has been climbing steadily. A 2027 deployment deadline gives Scout AI a concrete commercial milestone that most defense startups lack.

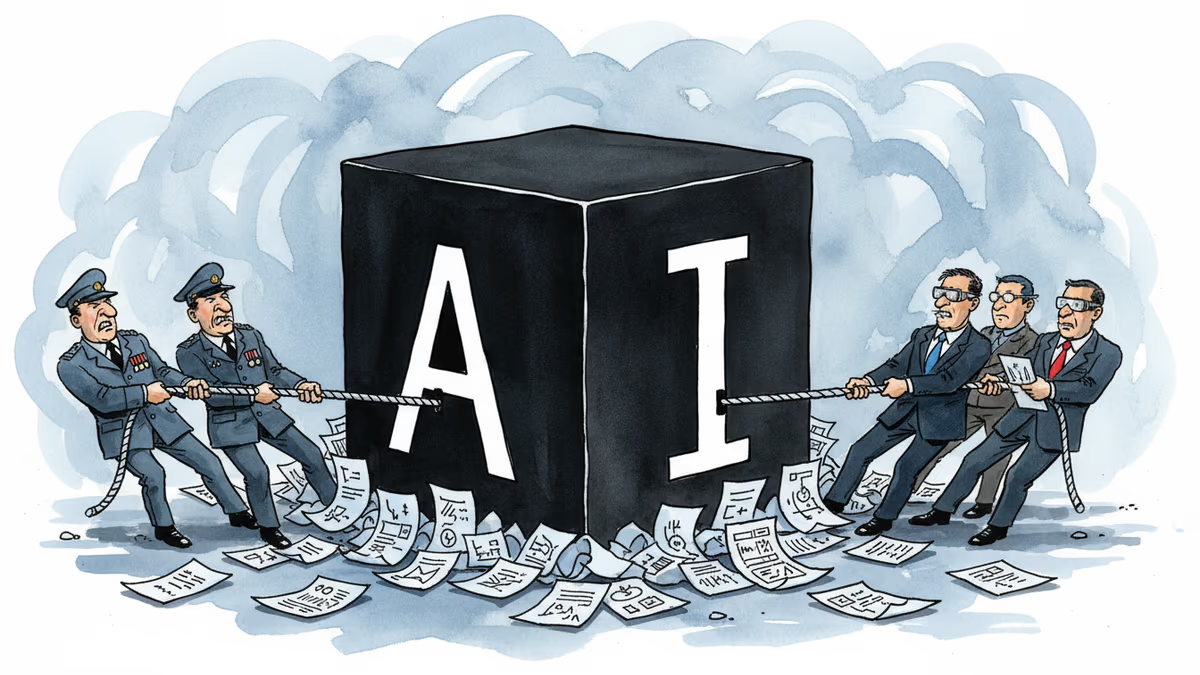

For ethicists and international lawyers, the speed is the problem. The Campaign to Stop Killer Robots has spent years pushing the UN toward a binding treaty on autonomous weapons—without success. The core unresolved issue: when an AI system misidentifies a target and kills civilians, who is legally responsible? The engineer who wrote the model? The commander who authorized the mission? The procurement officer who signed the contract? No existing body of international law cleanly answers that question, and the technology is moving faster than the legal framework is.

For the soldiers themselves, the calculus is more immediate. Brian Mathwich, an active-duty infantry officer embedded at Scout AI as a military fellow, recalls leading a resupply convoy through total darkness in Alaska and wishing he'd had autonomous vehicles. That's not an abstraction—it's a real operational gap that autonomy could close without putting more people in harm's way.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

Pentagon-Anthropic feud reveals the collapse of AI safety consensus. Killer robots and mass surveillance are no longer theoretical concerns.

OpenAI faced internal backlash over Pentagon contracts, revealing deeper questions about AI military use, transparency, and corporate accountability in defense partnerships.

Anduril's AI Grand Prix isn't just a recruitment event. It's a preview of how autonomous weapons will reshape both warfare and the talent wars.

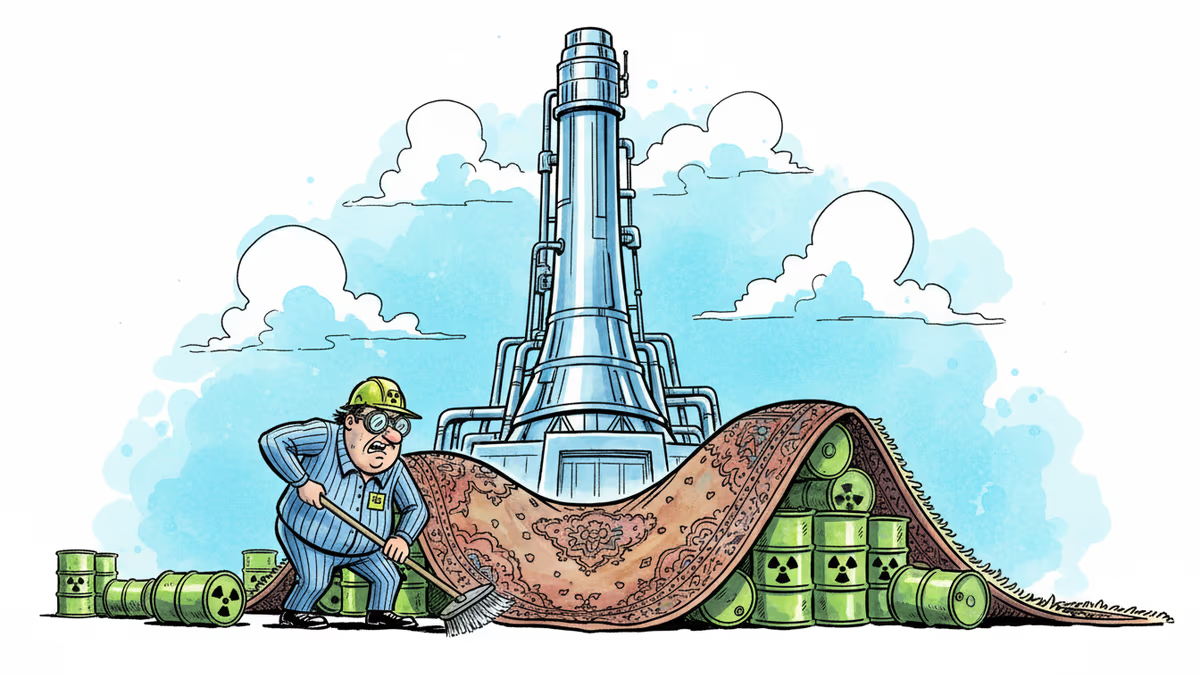

Nuclear energy is booming again, fueled by Big Tech's data center appetite. But 70 years of spent fuel still has nowhere permanent to go. Finland solved it. The US hasn't tried hard enough.
