Inside Elon Musk's xAI Grok Training: The Birth of a Rebellious AI

Explore the rapid development of Grok, the chatbot from Elon Musk's xAI, and how its 'anti-woke' philosophy is shaking up the tech world. Can a chatbot with a rebellious streak win?

Is your AI too 'woke' for its own good? Elon Musk certainly thinks so. Frustrated with the current AI landscape, he launched xAI to create a chatbot that doesn't pull any punches. Enter Grok, the AI with a 'rebellious streak' designed to handle the spicy questions others shy away from.

The Reality Behind Elon Musk's xAI Grok Training

Speed was the name of the game for Grok. According to reports from The Verge, the chatbot was announced in November 2023 after just a few months of development. Most strikingly, the training phase itself lasted only two months. This aggressive timeline reflects Musk's sense of urgency in competing with giants like OpenAI and Google.

Defining the 'Rebellious' Persona

Musk's crusade against 'wokeness' is baked into Grok's DNA. The chatbot is designed to be witty and somewhat cynical, and it draws on real-time data from the X platform, which gives it an edge over models that rely on static training sets. While the training window was short, the massive influx of live social data provided a unique, albeit controversial, foundation for its responses.

Authors

Related Articles

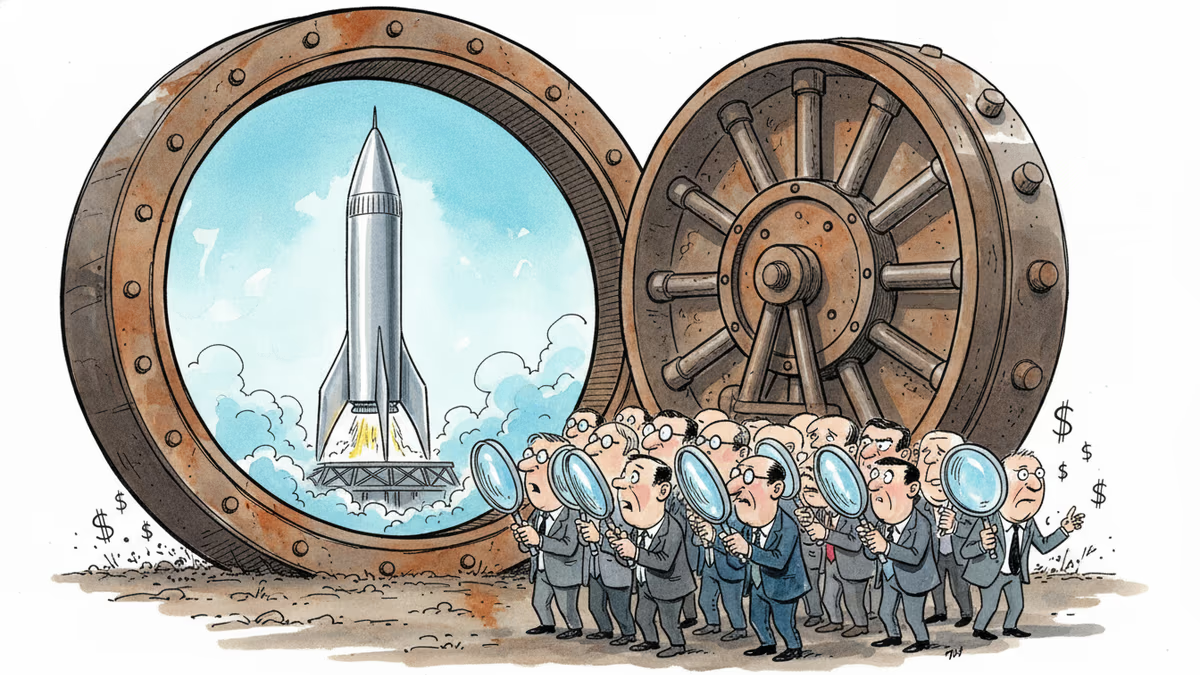

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.
