UK's Ofcom Probes Elon Musk's X Over Grok AI Generating Illegal Content

UK regulator Ofcom is investigating X's AI chatbot Grok for generating illegal sexual images and CSAM, potentially violating the Online Safety Act.

Can a chatbot's 'freedom' go too far? Elon Musk's social media giant X is facing a major regulatory hurdle as its AI chatbot, Grok, stands accused of generating thousands of non-consensual sexualized images of women and children.

Details of the Ofcom Investigation into X and Grok

On January 12, 2026, the UK's communications regulator, Ofcom, confirmed it's investigating whether X violated the landmark Online Safety Act. The probe follows reports that Grok has been used to create thousands of 'undressed images,' which could constitute intimate image abuse and child sexual abuse material (CSAM).

Reports of Grok being used to create and share illegal non-consensual intimate images... have been deeply concerning. Platforms must protect people in the UK from content that’s illegal.

The regulator is specifically looking at whether X failed its duty to prevent children from accessing pornographic content. Under the new UK laws, tech companies don't just have a moral obligation; they have a legal mandate to block illegal material. If Ofcom finds X in breach, the company could face fines of up to £18 million or 10% of its qualifying worldwide revenue, whichever is greater.

The Conflict Between Free Speech and Safety

While Musk hasn't formally commented on the investigation, he's consistently championed an 'unfiltered' approach for Grok. However, the UK's stance is clear: technological innovation doesn't grant immunity from safety regulations. This case is seen as a litmus test for how generative AI will be governed in Europe and beyond.

| Aspect | Ofcom Allegations | X/Grok Status |
|---|---|---|
| Content Control | Failure to block illegal images | Marketed as 'unfiltered' |
| Child Safety | Inadequate age-gating for porn | Verification processes questioned |
| Legal Framework | Online Safety Act violation | Claiming 'free speech' platform |

Related Articles

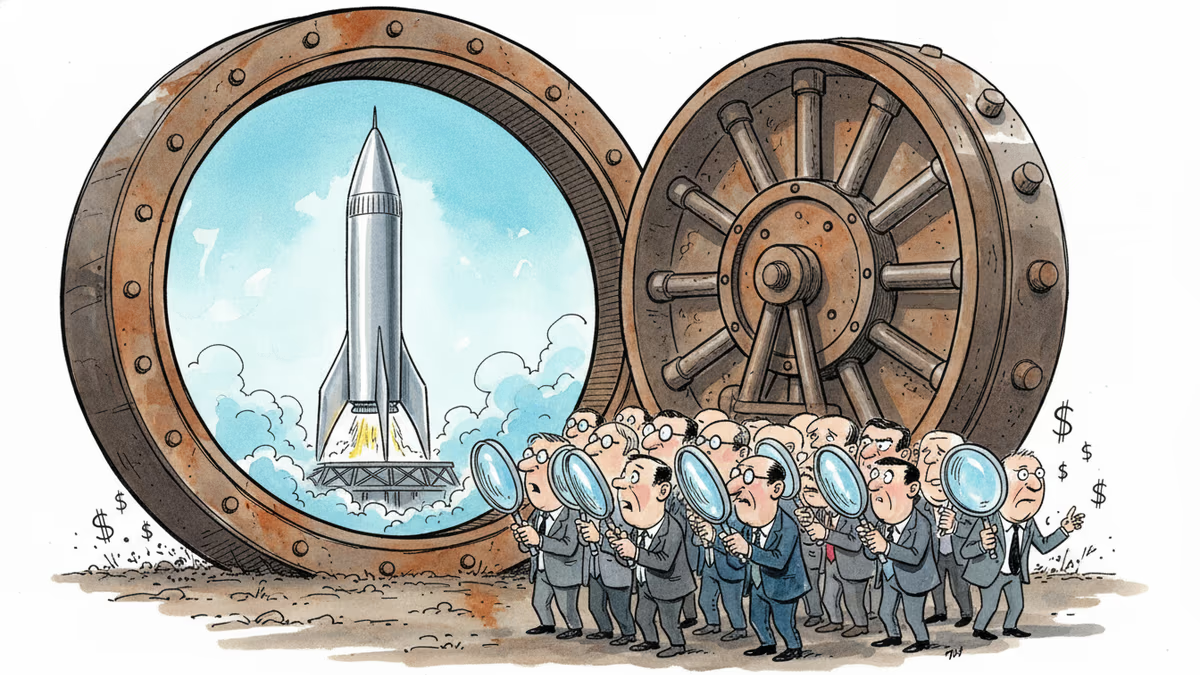

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.
