$53.6 Billion for Drones: The Pentagon's Biggest Bet Yet

The US defense budget request for FY2027 includes $53.6 billion for drone and autonomous warfare—more than most nations spend on their entire military. What does this mean for global security and the future of war?

For $53.6 billion, you could cover the entire annual defense budget of Israel or South Korea, with billions to spare.

That's what the Pentagon is asking Congress to approve for drones and autonomous warfare systems in its FY2027 budget request, tucked inside a record $1.5 trillion defense spending proposal. The figure isn't just large in isolation. If it were a country's total defense spend, it would rank among the dozen largest military budgets in the world, ahead of Israel and South Korea.

This isn't an incremental upgrade. It's a declaration that the United States intends to industrialize drone warfare at a scale no nation has attempted before.

From $226 Million to $53.6 Billion in One Year

The agency tasked with managing this money is the Defense Autonomous Warfare Group (DAWG), stood up in late 2025. In FY2026, it received $226 million—a modest pilot budget for a new organization. The FY2027 request represents a 237-fold increase in a single budget cycle.

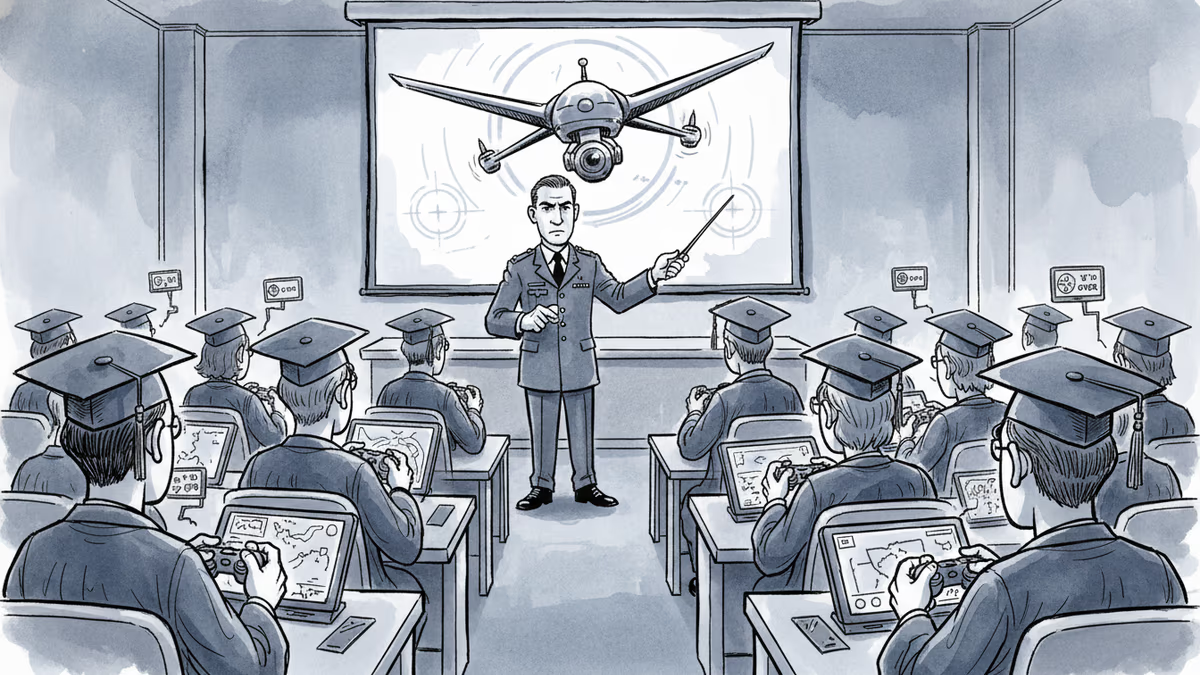

The spending breaks into four broad categories: scaling domestic drone production and procurement, training operators, building logistics infrastructure to sustain drone deployments in the field, and expanding counter-drone systems to protect US military installations. Offense and defense, simultaneously.

The strategic logic traces directly to Ukraine. Since 2022, the battlefield has become a live laboratory for drone warfare. Cheap, commercially derived drones have destroyed tanks worth millions, disrupted supply lines, and conducted reconnaissance that once required satellites or manned aircraft. The lesson the Pentagon drew was blunt: the era of expensive, low-volume precision platforms has a serious competitor, and the US is not yet winning the production race.

Who Cheers, Who Worries

For US defense contractors, the numbers are straightforwardly exciting. Companies like AeroVironment, Shield AI, and Joby Aviation—along with legacy primes pivoting toward autonomy—are watching a procurement pipeline materialize in real time. The question is execution speed: Pentagon budget requests and actual contracts are separated by months of congressional approval, acquisition bureaucracy, and capability verification.

For US allies, the picture is more complicated. Partners who want interoperability with American drone systems will face pressure to adopt compatible standards, potentially crowding out domestic drone industries in countries that have invested heavily in their own programs. For nations like South Korea, Australia, and Poland—all of which have growing defense tech sectors—this is as much a market-shaping moment as a security one.

For AI ethics researchers and legal scholars, the budget raises questions that dollars can't answer. When an autonomous system identifies and engages a target without a human pulling the trigger, who bears legal responsibility under international humanitarian law? The DAWG framework doesn't resolve that question—it scales it.

And within Congress, debate has already surfaced over what level of human oversight will be mandated for these systems. The Pentagon's track record on defining "meaningful human control" has been inconsistent, and critics argue that a $53.6 billion commitment locks in architectural decisions before the ethical framework is settled.

The Paradox Nobody Wants to Say Out Loud

There's a tension at the center of this investment that defense officials rarely address directly. Drone warfare reduces the human cost of conflict for the side that deploys the drones. Fewer body bags mean less domestic political resistance. And historically, when the cost of war falls, the threshold for initiating it tends to fall with it.

This isn't an argument against the technology. It's an observation about incentive structures. The same logic that makes drones attractive—they spare American lives—may quietly make the decision to use force easier to justify politically. Whether that's a feature or a bug depends entirely on who's making the decision, and why.

Authors

Related Articles

Over 270 Russian universities are offering students free tuition and up to $70,000 to serve as military drone pilots. Recruiters promise no frontline risk. The reality is more complicated.

Palantir has become the tech backbone of Trump's immigration enforcement. Former employees are calling it a 'descent into fascism.' What happens when the people who build surveillance tools start asking uncomfortable questions?

US Space Command confirms Russia has deployed operational anti-satellite weapons tracking American spy satellites in low-Earth orbit. What does this mean for space security?

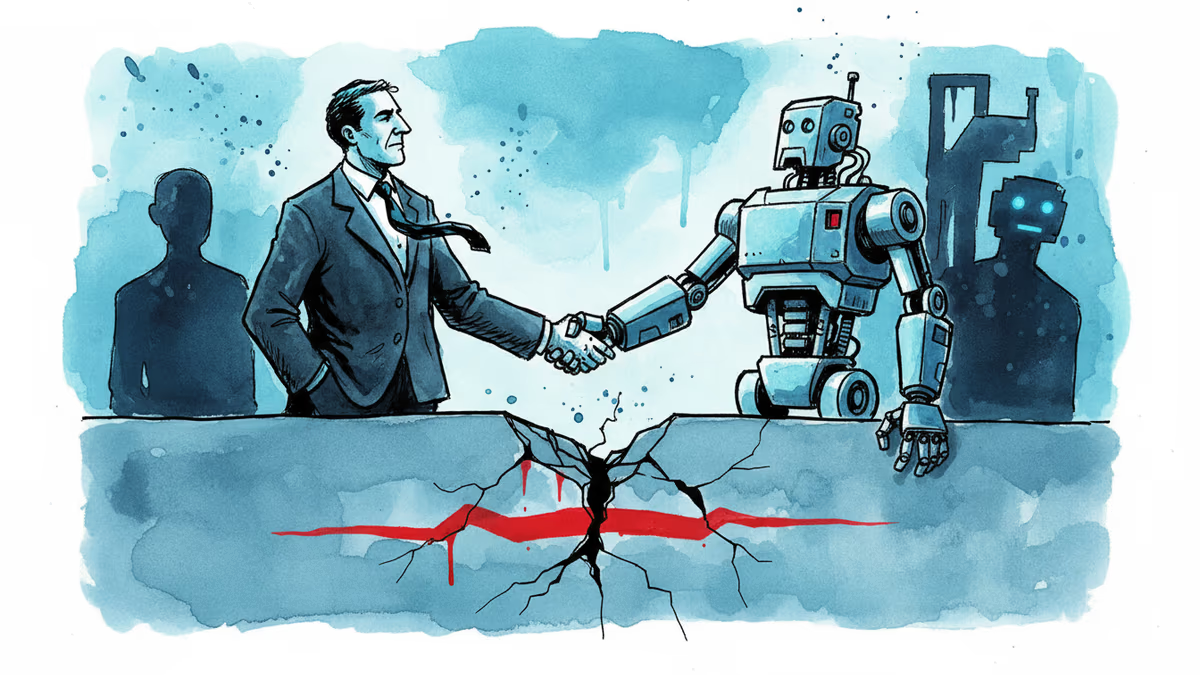

After two months of bitter conflict, Anthropic and the Trump administration may be thawing—thanks to a new cybersecurity AI model. What does it mean when principle meets political pressure?

Thoughts

Share your thoughts on this article

Sign in to join the conversation