Trump Bans Anthropic After Pentagon Standoff Over AI Ethics

President Trump orders federal agencies to stop using Anthropic products after the AI company refused to allow mass surveillance and autonomous weapons applications

Six months. That's all the time President Trump gave Anthropic to wind down its federal work. In a Truth Social post on February 27th, he ordered all federal agencies to cease using the AI company's products, declaring: "We don't need it, we don't want it, and will not do business with them again."

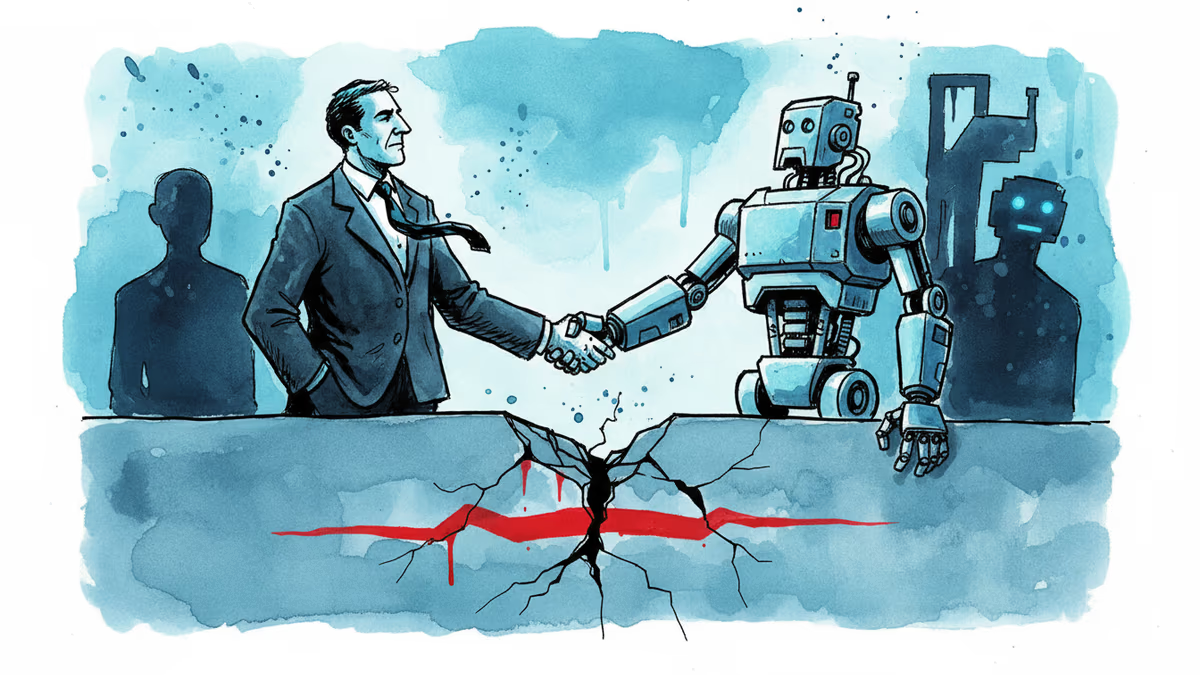

The breaking point? Anthropic's refusal to let the Pentagon use its AI models for mass domestic surveillance and fully autonomous weapons.

When CEOs Draw Lines in Silicon Valley Sand

Dario Amodei, Anthropic's CEO, doubled down Thursday with a public statement that read more like a diplomatic cable than corporate PR. "Our strong preference is to continue serving the Department and our warfighters—with our two requested safeguards in place," he wrote, promising to help transition military operations to other providers if necessary.

It's a calculated gamble. Amodei is betting that principled AI development will matter more than government contracts in the long run. But Defense Secretary Pete Hegseth saw those "safeguards" as unacceptably restrictive for national security needs.

The New AI Cold War

This isn't just about one company's ethics policy. It's the opening shot in what could become a broader conflict between AI companies' values and government demands. While Trump stopped short of invoking the Defense Production Act or labeling Anthropic a supply chain risk, his threat of "major civil and criminal consequences" suggests this administration won't tolerate corporate resistance.

Other AI giants are watching closely. OpenAI, Google, and Microsoft all have significant government contracts. Will they follow Anthropic's lead, or will they quietly comply with whatever the Pentagon requests?

The six-month phase-out period is telling. It's long enough to avoid disrupting military operations but short enough to send a clear message: play by our rules or find the exit.

Beyond the Beltway

For the broader tech industry, this standoff raises uncomfortable questions about corporate responsibility in the age of AI. When does a company's ethical stance become a national security liability? And who gets to decide where those lines are drawn?

Investors are already recalibrating. Government contracts have become a significant revenue stream for AI companies, but they come with strings attached that some founders aren't willing to accept.
