Sora Inside ChatGPT: Convenience or Pandora's Box?

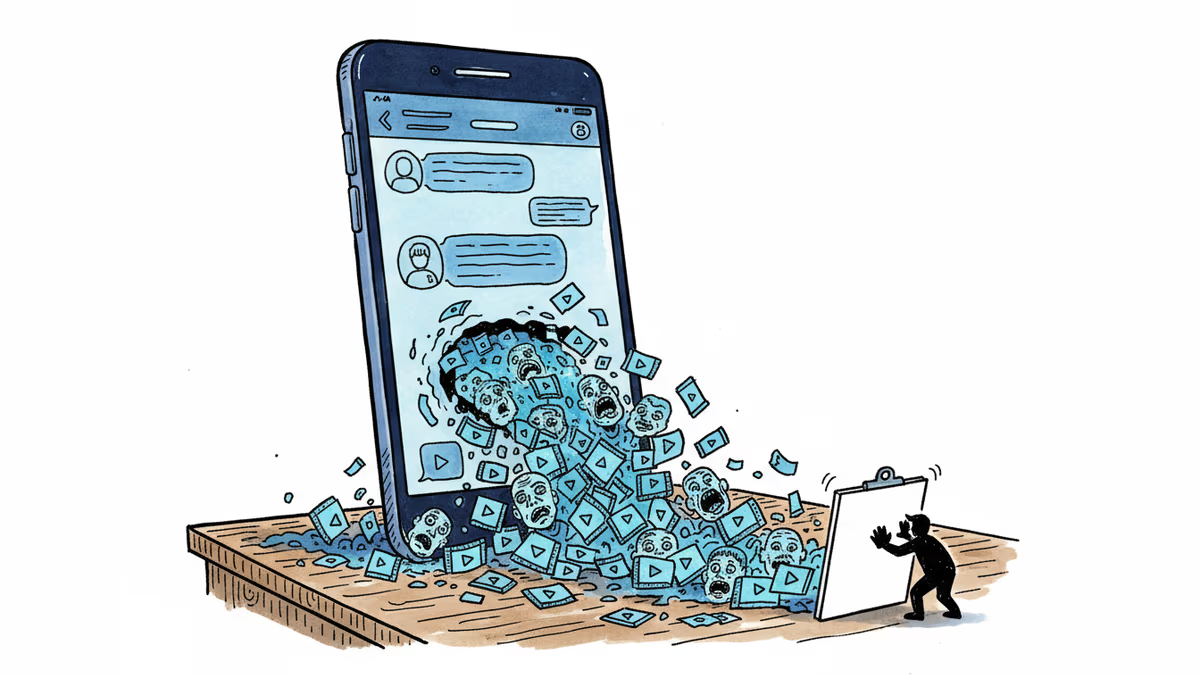

OpenAI plans to embed its Sora video generator directly into ChatGPT. The move could supercharge adoption—but also flood the internet with AI-generated deepfakes at unprecedented scale.

Right now, typing into ChatGPT can produce a poem, write code, or generate an image. Soon, it may hand you a video.

The Information reports that OpenAI is actively exploring embedding its Sora video generator directly into ChatGPT—mirroring how image generation was quietly folded into the chatbot last year. The logic is straightforward: Sora launched as a standalone app and website, and it never caught fire the way ChatGPT did. Putting it where 500 million monthly users already live changes the math entirely.

How We Got Here

Sora made waves when OpenAI first previewed it in early 2024. The demos were striking—cinematic, fluid, eerily convincing. But when the actual product launched, it landed quietly. Accessing it required a separate subscription, a separate login, a separate mental model. In the attention economy, that friction is lethal.

OpenAI watched what happened when DALL-E image generation was baked into ChatGPT: usage spiked. The playbook is the same this time. Remove the detour, and millions of people who never sought out a video tool will suddenly have one in their hands.

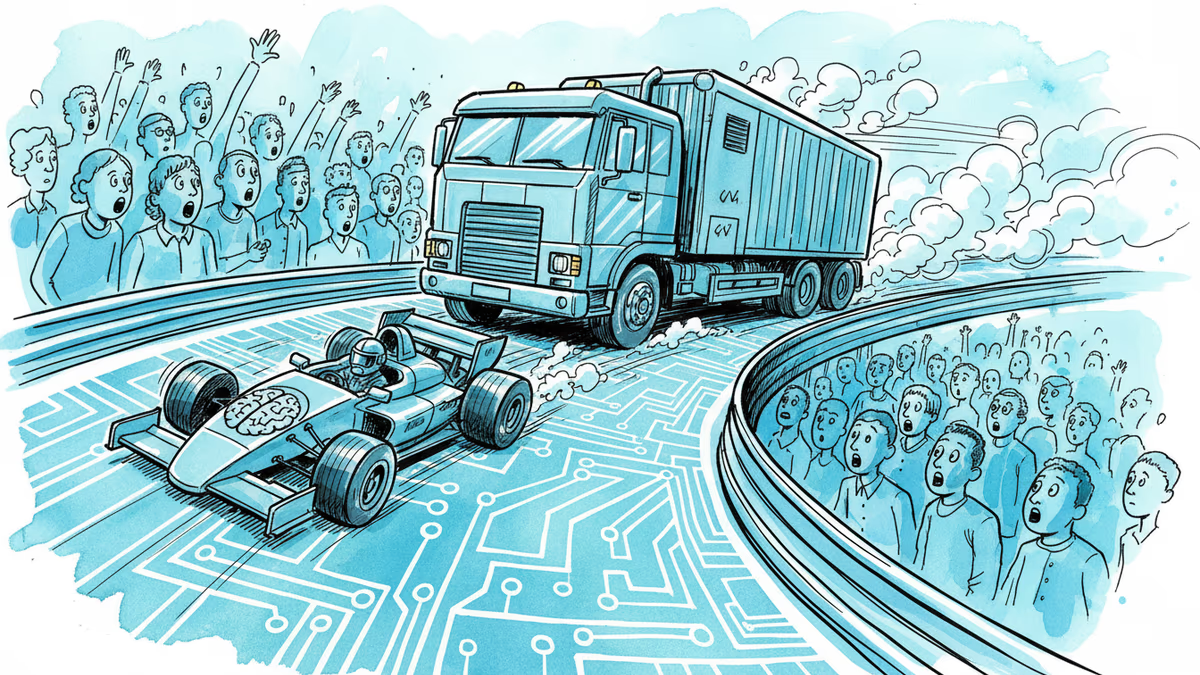

The competitive pressure is real. Google's Gemini is expanding its multimodal capabilities. Chinese AI labs are releasing video models at a pace that has caught Western observers off guard. For OpenAI, keeping ChatGPT as the default AI interface means continuously raising the ceiling of what that interface can do.

The Problem That Was Always There

Here's the uncomfortable part: Sora's deepfake problem didn't start with this integration. It started at launch.

Within days of Sora's debut, users were generating videos that looked disturbingly real: faces, voices, scenarios that never happened. OpenAI has safety filters in place, and the company says it watermarks AI-generated content. But researchers tracking synthetic media have consistently noted that generation capabilities outpace detection tools. The gap isn't closing.

What the standalone app provided, almost accidentally, was friction as a filter. A paywall. A separate sign-up. Steps that slowed casual misuse without stopping determined bad actors. ChatGPT integration removes those steps for 500 million people simultaneously.

That's not a theoretical risk. It's a scaling problem that begins the day the integration ships.

Who Wins, Who Worries

The reactions split cleanly along lines of interest.

Content creators see opportunity and threat in the same breath. Producing short-form video for social platforms—the kind that currently requires cameras, editors, and hours—could compress into a single text prompt. That's genuinely useful. But the same tool makes it trivially easy to fabricate someone's likeness. Creators who built audiences on authenticity now have to compete with synthetic versions of themselves.

Advertisers and marketing teams are doing the math on production costs. A 30-second ad that once required a crew, a shoot day, and post-production could, in principle, be generated in minutes. Agencies that haven't reckoned with this are already behind.

Regulators are watching but struggling to keep pace. The EU's AI Act mandates disclosure for AI-generated content, but enforcement across the volume of video that hundreds of millions of users could generate is an unsolved problem. In the US, there's no comprehensive federal framework, just a patchwork of state laws and platform policies that vary wildly.

Competitors—Google, Meta, Stability AI, and a growing list of startups—will feel the pressure. When the world's most-used AI interface adds video generation, the bar for what a standalone video tool needs to offer rises sharply.

What Comes Next

The integration hasn't been officially confirmed by OpenAI. Timelines are unclear. But the direction of travel is not. The question isn't really whether video generation becomes a default feature of AI assistants—it's when, and under what conditions.

For users, the near-term implication is simple: the tools for creating convincing synthetic video are about to become as accessible as sending a text message. That has genuine creative potential. It also has genuine potential for harm that no company has fully solved.

Authors

Related Articles

From hyper-personalized phishing to deepfake video calls, AI has turbocharged cybercrime. Meanwhile, hospitals adopt AI tools whose patient benefits remain unproven. What does this mean for trust?

Cerebras Systems has refiled for an IPO targeting mid-May, backed by a $23B valuation, a reported $10B OpenAI deal, and an AWS partnership. What does this mean for Nvidia's dominance and the AI chip landscape?

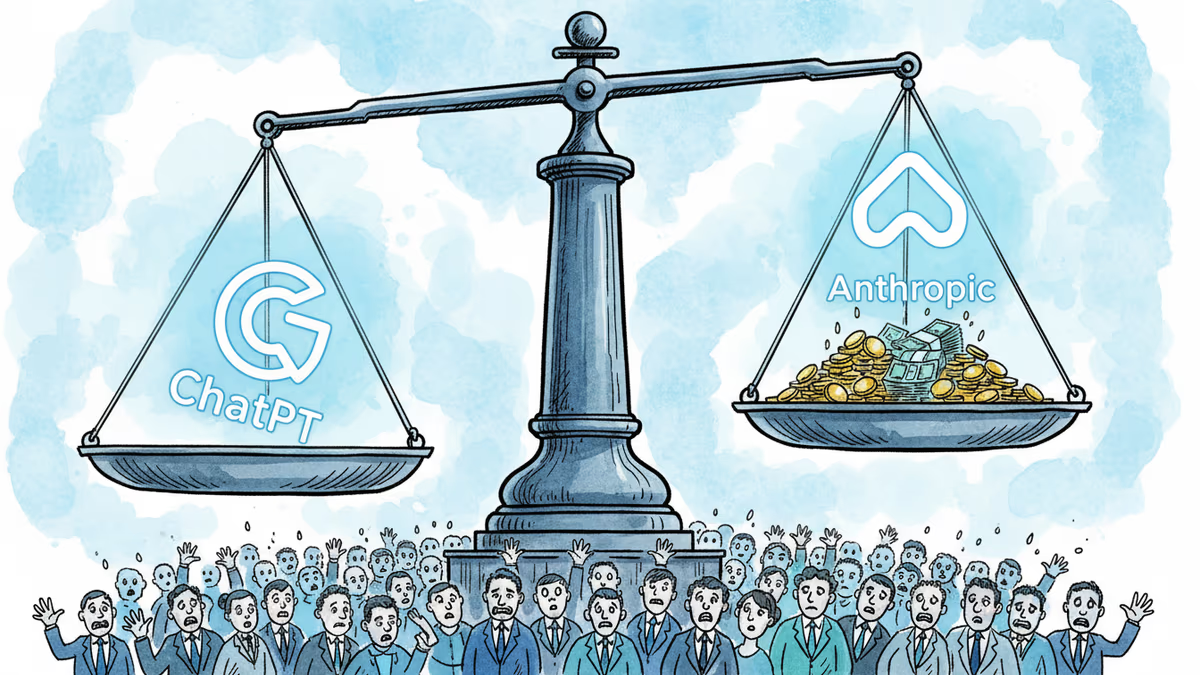

OpenAI's $852B valuation is drawing skepticism from its own backers as Anthropic's ARR tripled in three months. The secondary market is already voting with its feet.

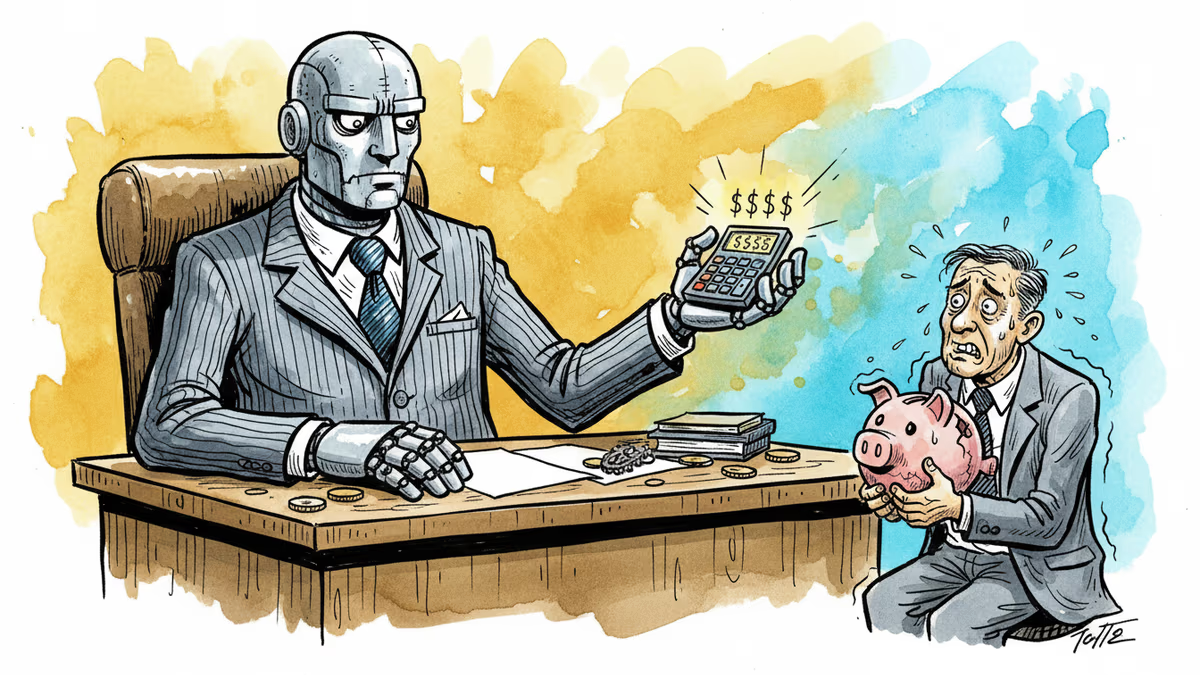

OpenAI acquired Hiro Finance, an AI-powered personal finance startup. Is this just a talent grab, or is the ChatGPT maker quietly building a financial services empire?

Thoughts

Share your thoughts on this article

Sign in to join the conversation