The Startup That Poached OpenAI From Nvidia Is Going Public

Cerebras Systems has refiled for an IPO targeting mid-May, backed by a $23B valuation, a reported $10B OpenAI deal, and an AWS partnership. What does this mean for Nvidia's dominance and the AI chip landscape?

"Obviously, Nvidia didn't want to lose the fast inference business at OpenAI — and we took that from them."

That's Cerebras Systems CEO Andrew Feldman, speaking to the Wall Street Journal. It's the kind of line that either ages brilliantly or becomes an embarrassing footnote. Right now, the numbers are starting to back it up.

On April 18, Cerebras refiled for an IPO, targeting a mid-May debut. The company hasn't disclosed how much it hopes to raise, but the backdrop is hard to ignore: a $23 billion valuation, $510 million in 2025 revenue, a reported OpenAI deal worth more than $10 billion, and a freshly inked agreement to supply chips to Amazon Web Services data centers.

A Second Shot at the Public Markets

This isn't Cerebras' first attempt at going public. The company filed for an IPO in 2024, but the offering stalled after U.S. federal regulators launched a national security review of an investment from Abu Dhabi-based G42, and the filing was eventually withdrawn.

The 2026 version of Cerebras looks different on paper. Last year, it closed a $1.1 billion Series G. In February, it followed with a $1 billion Series H at that $23 billion valuation. The customer roster has grown. The revenue is real.

But the financials deserve a closer read. The IPO filing reports $237.8 million in net income for 2025. That looks strong until you note that on a non-GAAP basis, excluding certain one-time items, the company ran a $75.7 million net loss. The two lenses differ by roughly $313.5 million, and whether Cerebras is actually profitable will be one of the first things institutional investors stress-test.

What Cerebras Actually Sells — And Why It Matters

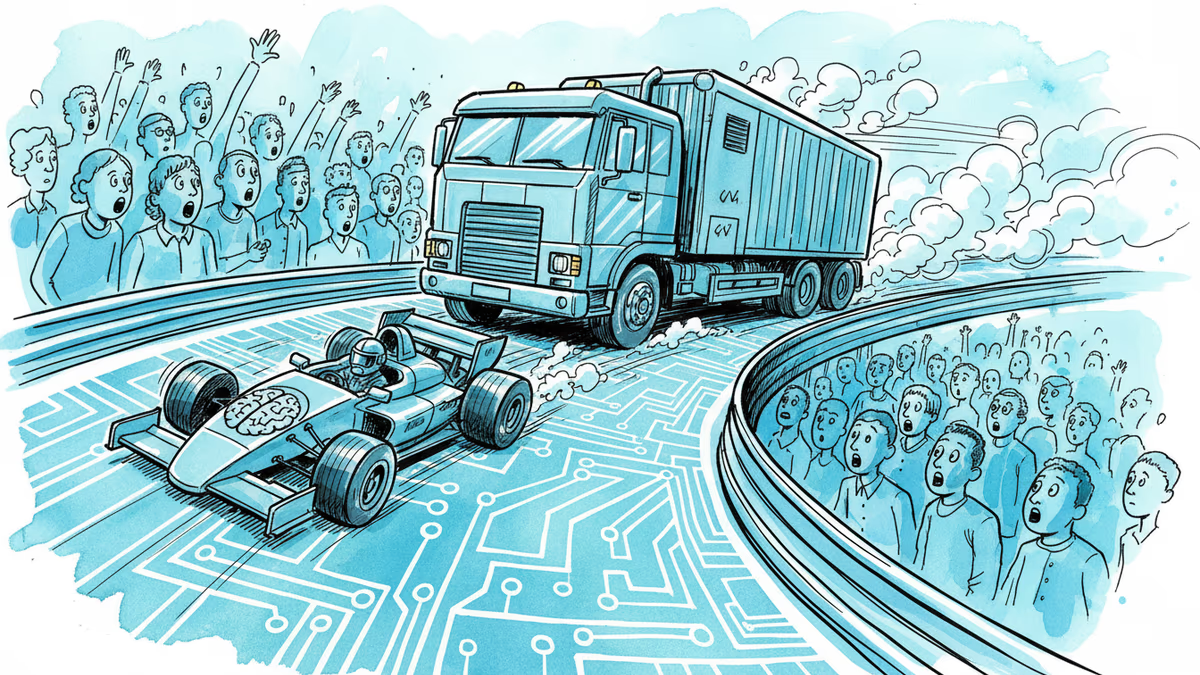

Cerebras builds what it calls the Wafer Scale Engine (WSE): a single chip the size of an entire silicon wafer. The idea is to eliminate the communication bottlenecks that arise when you stitch together hundreds of conventional GPUs. The result, the company claims, is dramatically faster performance on AI inference — the process of generating responses after a model has already been trained.

This is the key distinction. Nvidia dominates the broader AI chip market, holding an estimated 70–80% share. But Cerebras isn't trying to beat Nvidia at everything. It's betting that for a specific, high-value workload — fast inference at scale — its architecture wins. And OpenAI, the most prominent AI lab in the world, apparently agreed.

Feldman's boast about taking inference business from Nvidia is more than competitive posturing. It signals that even Nvidia's most loyal customers are willing to diversify when a compelling alternative exists.

The Timing Isn't Accidental

Spring 2026 may be the most favorable window for an AI infrastructure IPO in recent memory. Microsoft, Google, Amazon, and Meta have collectively announced capital expenditure plans exceeding $300 billion for AI data centers this year. That capital has to flow somewhere — into chips, cooling systems, power infrastructure, and the companies that supply them.

Cerebras is filing into that tailwind deliberately. An IPO now locks in a valuation shaped by peak AI infrastructure enthusiasm. The proceeds, in turn, fund the manufacturing scale needed to compete for more data center contracts. It's a bet that the AI buildout has enough runway to justify the growth story.

The risk is that the window closes. AI infrastructure spending is cyclical in ways that aren't yet fully visible. If hyperscaler capex moderates — or if Nvidia responds with a competing inference-optimized product — the narrative gets harder to sustain.

Three Ways to Read This

For investors, the question is whether Cerebras is a durable platform or a well-timed niche play. The OpenAI deal is impressive, but customer concentration at this scale is a risk factor. How sticky are these contracts if Nvidia cuts prices or launches a faster inference chip?

For the AI industry, this IPO is a data point in a larger story: the GPU monoculture is cracking. Custom ASICs and wafer-scale architectures are each carving out territory. The era of one chip architecture ruling every AI workload may be ending faster than most expected.

For regulators, the G42 national security review that stalled the 2024 filing hasn't been forgotten. The geopolitics of AI chip supply chains (who the investors are, who controls the manufacturing, who gets access) will shadow this offering through its roadshow.
