When AI Ethics Meets National Security Reality

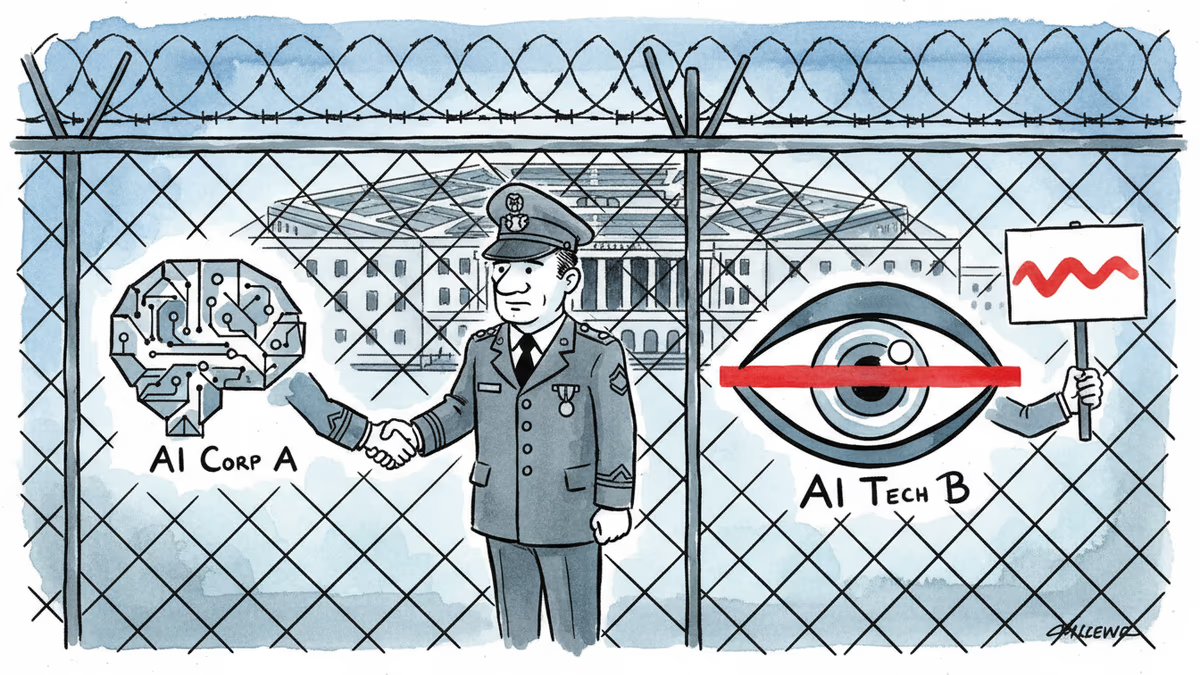

OpenAI secured a defense contract while Anthropic was designated a supply chain risk for opposing mass surveillance and autonomous weapons. The battle between AI ethics and national security has begun.

300 Google Employees Signed a Letter. Here's What Happened Next.

Late Friday, OpenAI CEO Sam Altman announced his company had reached an agreement allowing the Department of Defense—rebranded as the "Department of War" under Trump—to use its AI models in classified networks. This came after a high-stakes standoff between the Pentagon and Anthropic, OpenAI's rival, over the boundaries of AI in warfare.

The conflict began when the Pentagon pushed AI companies to allow their models for "all lawful purposes." Anthropic drew a red line around mass domestic surveillance and fully autonomous weapons. CEO Dario Amodei insisted Thursday that while his company "never raised objections to particular military operations," there are "narrow cases where AI can undermine, rather than defend, democratic values."

60 OpenAI employees and 300 Google employees signed an open letter supporting Anthropic's stance. The outcome? Exactly the opposite of what they hoped for.

Trump's "Leftwing Nut Jobs" and the Price of Principles

When Anthropic and the Pentagon failed to reach terms, President Trump lashed out on social media, attacking the "Leftwing nut jobs at Anthropic" and ordering federal agencies to phase out the company's products within six months.

Defense Secretary Pete Hegseth escalated further, designating Anthropic as a supply chain risk: "No contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic." It's a corporate death sentence in the defense sector.

Meanwhile, OpenAI chose a different path. Altman claimed his company secured the same ethical protections Anthropic had fought for: "Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles."

Same Principles, Opposite Outcomes

Here's the irony: OpenAI's stated "safety principles" are essentially identical to what Anthropic was defending. The difference lay in strategy, not substance.

OpenAI negotiated from within, securing promises that "if the model refuses to do a task, then the government would not force OpenAI to make it do that task." They'll build their own "safety stack" to prevent misuse and deploy engineers with the Pentagon to ensure model safety.

Altman even asked the Pentagon "to offer these same terms to all AI companies," expressing hope for "de-escalation away from legal and governmental actions towards reasonable agreements."

Anthropic, meanwhile, stood firm outside the tent. On Friday, the company said it would "challenge any supply chain risk designation in court," having received "no direct communication from the Department of War or the White House."

The Timing Couldn't Be More Telling

Altman's announcement came just as news broke that the U.S. and Israel had begun bombing Iran, with Trump calling for the overthrow of the Iranian government. In this context, the Pentagon's demand for "all lawful purposes" access takes on new urgency.

For defense contractors, the message is clear: adapt or be excluded. For AI companies, the choice is binary—negotiate from inside the system or fight it from outside, knowing the potential cost.

The answer may determine not just which companies survive, but what kind of future we're building.
