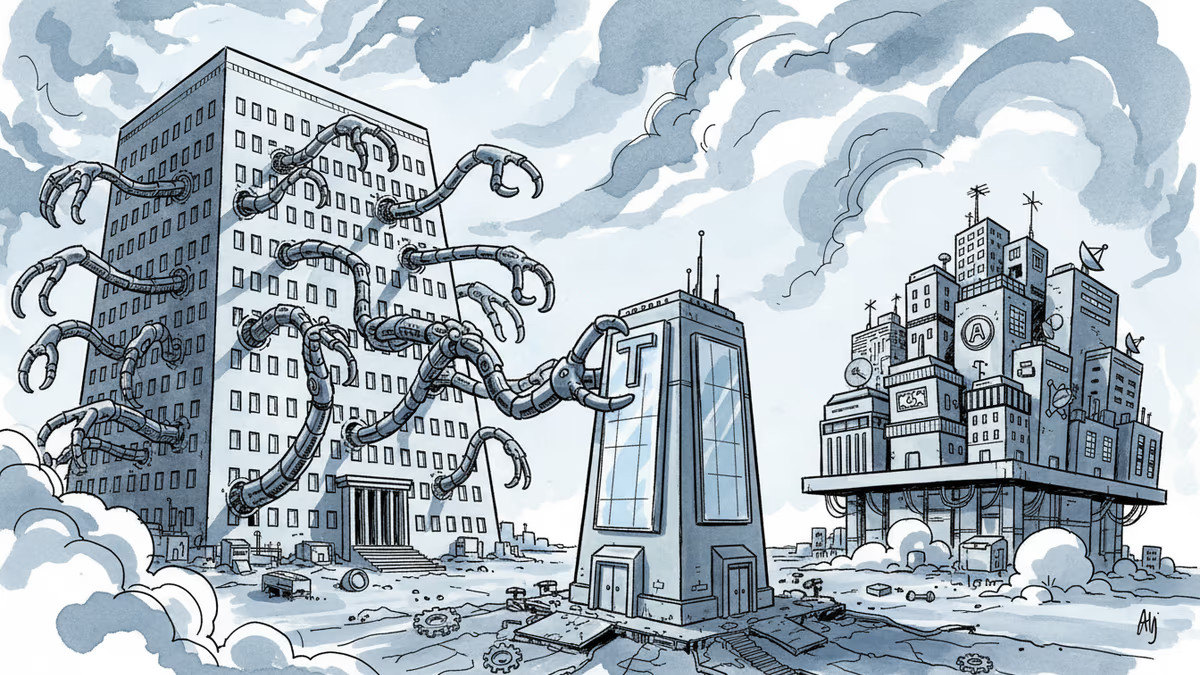

Pentagon vs Anthropic: When America Sanctions Its Own AI Champion

Defense Secretary designates Anthropic as a supply-chain risk, shocking Silicon Valley. OpenAI strikes a deal the same day on nearly identical terms. What's really happening?

America Just Sanctioned Its Own AI Company

Friday afternoon sent shockwaves through Silicon Valley. Defense Secretary Pete Hegseth designated Anthropic as a "supply-chain risk," effectively cutting the AI startup off from any company doing business with the US military. "Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic," Hegseth declared.

This isn't just another contract dispute. It's an unprecedented move: the US government effectively sanctioning one of its own leading AI companies. The twist? Anthropic isn't Chinese, Russian, or from any adversarial nation. It's as American as apple pie.

The Negotiation Breakdown: Drawing Lines in Digital Sand

The conflict stemmed from weeks of tense negotiations over military AI use. The Pentagon wanted Anthropic to agree to "all lawful uses" of its technology with no exceptions. Anthropic drew two red lines: no mass domestic surveillance of Americans, and no fully autonomous weapons systems.

From Anthropic's perspective, these were reasonable safety guardrails. From the Pentagon's view, it was conditional cooperation from a company they believed should serve national security without question.

Here's where it gets interesting: the same evening, OpenAI announced a deal with the Department of Defense. The terms? "Prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems." Nearly identical to what Anthropic had requested.

Silicon Valley's Fury: "Time to Leave America?"

The tech community's reaction was swift and scathing. Dean Ball, former White House AI policy advisor, called it "the most shocking, damaging, and over-reaching thing I have ever seen the United States government do." He added: "If you are an American, you should be thinking about whether or not you should live here 10 years from now."

Y Combinator co-founder Paul Graham described the administration as "impulsive and vindictive." OpenAI researcher Boaz Barak warned that "kneecapping one of our leading AI companies" would be the "worst own goal we can do."

Legally, experts are baffled. Federal contracts attorney Alex Major noted the announcement "is not mired in any law we can divine right now." Supply-chain risk designations typically require risk assessments and Congressional notification—not social media announcements.

Corporate Chaos: Who Must Cut Ties?

The immediate fallout creates a nightmare scenario for major tech companies. Amazon, Microsoft, Google, and Nvidia all work with both Anthropic and the Pentagon. They're now caught in regulatory limbo, unsure whether they must sever ties with one of the industry's most popular AI models.

One military contractor executive, speaking anonymously, said their company is "in a holding pattern" with lawyers examining whether using Anthropic's Claude AI internally constitutes an "essential component" of their government services. The distinction matters enormously—it could determine which companies face forced separation from Anthropic.

Defense-focused AI companies like Anduril and Shield AI declined to comment, likely weighing their own exposure.

The Chilling Effect: Innovation vs. National Security

Beyond immediate business impacts, this move sends a troubling signal to the broader tech industry. "The Defense Department just sent a huge message to every company that if you dip your toe in the defense contracting waters, we will grab your ankle and pull you all the way in, anytime we want," warns Greg Allen from the Center for Strategic and International Studies.

The logic is also contradictory. Hegseth had previously suggested using the Defense Production Act to force Anthropic's compliance, a move that undermines claims of a genuine supply-chain risk. If Anthropic posed a real security threat, why consider forcing it to provide technology to the military?

Anthropic promises to challenge the designation in court, but lawsuits take time. Meanwhile, American AI leadership faces its strangest threat yet: its own government's impulsive governance. In the race for AI supremacy, are we shooting ourselves in the foot?
