Why the Pentagon Just Labeled Claude a 'Supply-Chain Risk'

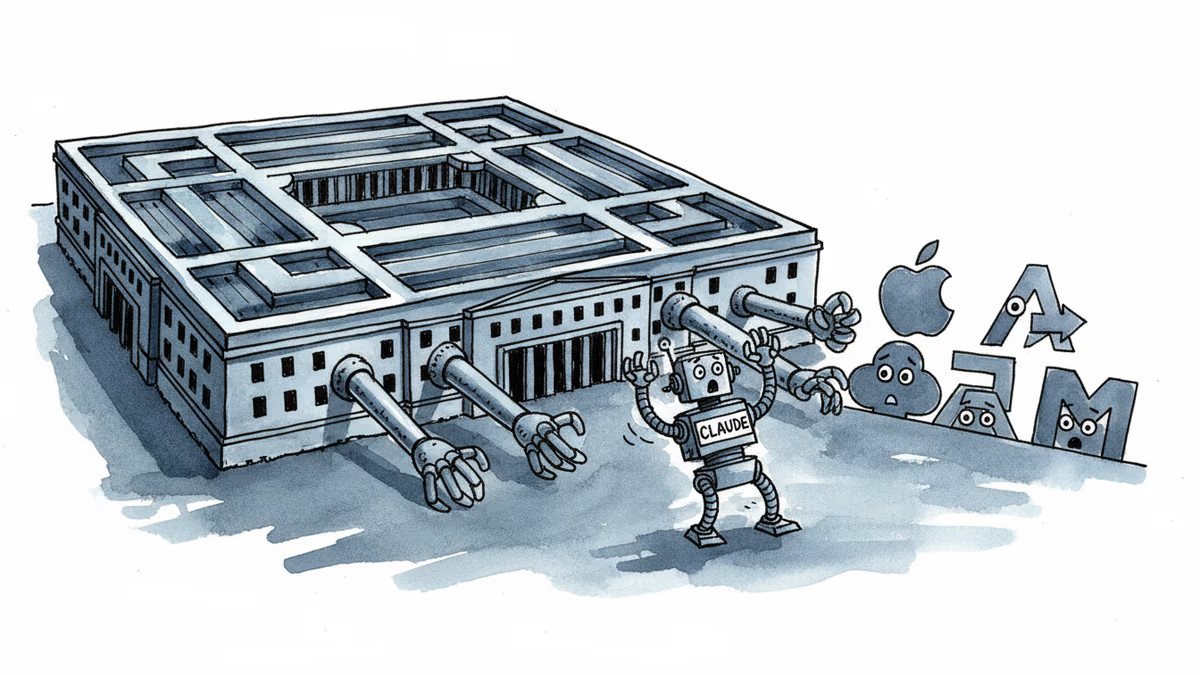

Two hours after Trump banned Anthropic, Defense Secretary Pete Hegseth escalated by designating Claude as a supply-chain risk, immediately impacting Palantir, AWS, and other major contractors.

From Ban to 'Risk' in Two Hours

President Trump's Truth Social post banning Anthropic products from federal agencies was just the opening move. Two hours later, Defense Secretary Pete Hegseth escalated dramatically: Claude is now officially designated a "supply-chain risk."

The implications hit immediately. Major Pentagon contractors like Palantir and AWS that have integrated Claude into their defense work face an urgent scramble. This isn't just a usage ban—it's a scarlet letter that could ripple through their entire government business.

The Pentagon hasn't clarified whether companies using Claude for non-defense services will also face blacklisting, leaving contractors in a gray zone.

The Control Game Behind 'Safety' Concerns

Anthropic built its reputation on AI safety, positioning itself as more cautious than OpenAI. So why the sudden "risk" designation?

The answer isn't technical—it's about compliance. Unlike concerns about Chinese influence or foreign interference, this appears to be about domestic AI companies that won't bend to the administration's preferred approach.

A defense industry source familiar with the decision explained: "It's not about Claude's capabilities being dangerous. It's about Anthropic's unwillingness to prioritize government requirements over their safety protocols."

The message to Silicon Valley is clear: play by our rules, or face the consequences.

Corporate Scramble: The New AI Calculus

Palantir, which has leveraged Claude's natural language processing for defense data analysis, now faces a costly pivot. AWS faces an even more complex challenge: Claude is integrated throughout its cloud services, making simple substitution nearly impossible.

The ambiguity around "non-national security" services creates additional headaches. Will the Pentagon blacklist companies that use Claude for civilian government contracts? For private sector work? Nobody knows.

One Silicon Valley startup CEO, whose company derives 30% of revenue from government contracts, told us: "We can't afford to guess wrong. We're already evaluating OpenAI alternatives."

This risk-averse response is exactly what the administration likely intended—forcing the ecosystem to self-select toward compliant providers.

Global AI Fractures Deepen

The ripple effects extend far beyond US borders. European companies, already wary of American tech dominance, are accelerating investments in homegrown AI alternatives. "We can't build critical infrastructure on platforms subject to political whims," said a senior EU tech official.

The decision also strengthens China's narrative about American technological unreliability, potentially driving more countries toward alternative AI ecosystems.

For investors, the message is stark: AI companies need political insurance as much as technical excellence. The days of "neutral" AI platforms may be ending.

The Anthropic decision may be just the beginning of a new era where AI development is shaped as much by political considerations as technological ones.
