When AI Becomes a Disease Detective

Illinois health officials deployed an AI chatbot to investigate a salmonella outbreak linked to a county fair. But questions remain about whether artificial intelligence can truly revolutionize epidemic tracking.

The Jury Pool That Sparked an Investigation

Thirteen people fell sick, and an AI chatbot helped solve the case. Or did it?

The story began in Brown County, Illinois, when the local sheriff noticed something odd last August. Potential jurors kept calling in sick with stomach bugs, forcing postponements of an upcoming trial. A week later, the state health department confirmed what officials suspected: an outbreak of Salmonella enterica serotype Agbeni.

What happened next marked a potential turning point in disease investigation. For the first time, health officials turned to an AI chatbot to help track the outbreak's source.

Digital Sherlock Holmes Meets Microbiology

The investigation revealed a clear pattern. Seven confirmed cases and six probable cases spread across five counties. Despite the geographic spread, every victim shared one common experience: they'd all attended the Brown County fair.

But here's where the story gets interesting—and murky. According to this week's CDC report, while officials did use an AI chatbot in their investigation, "whether it was actually helpful or not remains unclear."

That's a remarkably honest admission from public health authorities. It suggests either that traditional epidemiological methods would have solved this case just as effectively, or that the AI's contribution was so minimal it barely registered.

The Promise and the Reality

AI evangelists have long promised that machine learning could revolutionize disease tracking. The theory is compelling: AI can process vast amounts of data 24/7, identify patterns humans might miss, and potentially spot outbreaks before they spread.

Google's flu tracking algorithm and IBM Watson's cancer diagnosis capabilities have shown glimpses of this potential. But real-world deployment often reveals a gap between laboratory success and field effectiveness.

The Brown County case exemplifies this challenge. Traditional epidemiological investigation—interviewing patients, mapping their movements, identifying common exposures—led investigators straight to the county fair. Did the AI chatbot accelerate this process, or was it merely along for the ride?
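To make the "identifying common exposures" step concrete, here is a minimal Python sketch of how interview data might be tallied. It is not the method used in Brown County, which the report does not describe. The case records, field names, and both helper functions are hypothetical and exist only for illustration.

```python
from collections import Counter

# Hypothetical interview records. These are NOT data from the CDC report;
# the ids, statuses, and exposures are invented for illustration.
cases = [
    {"id": "case-1", "status": "confirmed",
     "exposures": {"county fair", "restaurant A"}},
    {"id": "case-2", "status": "confirmed",
     "exposures": {"county fair", "grocery B"}},
    {"id": "case-3", "status": "probable",
     "exposures": {"county fair", "restaurant A", "potluck"}},
]


def shared_exposures(records):
    """Return the exposures reported by every case (set intersection)."""
    sets = [r["exposures"] for r in records]
    return set.intersection(*sets) if sets else set()


def exposure_counts(records):
    """Count how many cases reported each exposure, most common first."""
    counts = Counter()
    for r in records:
        counts.update(r["exposures"])
    return counts.most_common()


common = shared_exposures(cases)
ranked = exposure_counts(cases)

print("Reported by every case:", common)
print("Most frequently reported:", ranked[0])
```

In a real investigation the ranked tally often matters more than the strict intersection, since patients forget or misreport exposures. Either way, a simple tabulation like this may be all that is needed when one exposure dominates, which is part of why the chatbot's added value is hard to judge.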

What Epidemiologists Really Think

Public health professionals are divided. Dr. Sarah Chen, an epidemiologist at Johns Hopkins, sees potential: "AI could help us spot subtle patterns in real-time surveillance data that might take days for human analysts to identify."

But others urge caution. "Disease investigation requires nuanced judgment calls," says Dr. Michael Rodriguez, who's tracked outbreaks for 15 years. "When lives are at stake, do we really want to rely on algorithms we can't fully explain?"

The Brown County report doesn't specify exactly how the AI was used. Did it help organize interview data? Suggest potential exposure sources? Generate hypotheses about transmission patterns? The lack of detail is telling.

The Transparency Problem

This opacity reveals a fundamental challenge in AI-assisted public health: the black box problem. If an AI system suggests a particular source for an outbreak, can health officials explain why to concerned citizens? Can they defend their conclusions in court if litigation follows?

OpenAI's ChatGPT and similar systems can produce remarkably human-like responses, but their reasoning processes remain largely opaque. In clinical settings, this "explainability gap" has slowed AI adoption despite impressive performance metrics.

Authors

Related Articles

Viral videos show 2026 graduates jeering executives who praise AI at commencement ceremonies. It's not just rudeness — it's a signal about who pays for technological optimism.

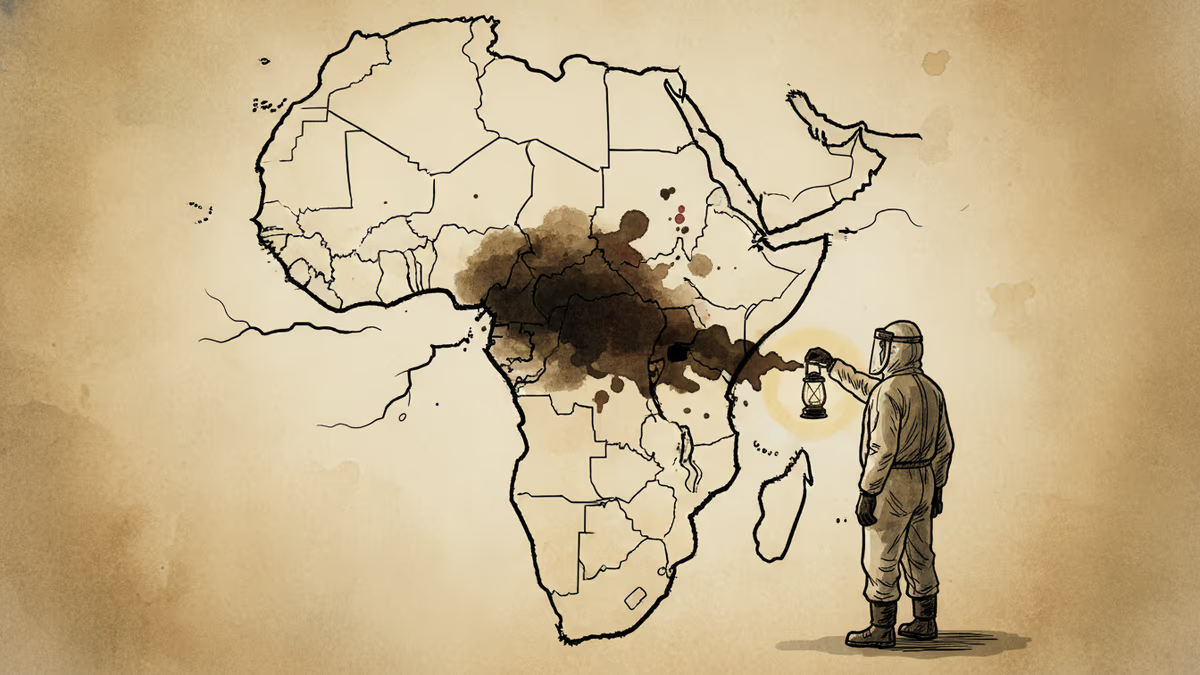

The DRC Ebola outbreak has already crossed into Uganda and earned a WHO emergency declaration. The case count isn't the alarming part—the gaps in detection are.

Filipino virtual assistants using AI to ghost-manage LinkedIn profiles for executives is now a structured industry. 30 comments a day, fake engagement rings, and a platform struggling to tell real from fabricated.

Two commencement speakers learned the hard way that AI enthusiasm doesn't land well with today's graduates. The backlash reveals a widening gap between tech optimism and Gen Z's economic reality.

Thoughts

Share your thoughts on this article

Sign in to join the conversation