Why OpenAI Said Yes and Anthropic Said No to the Pentagon

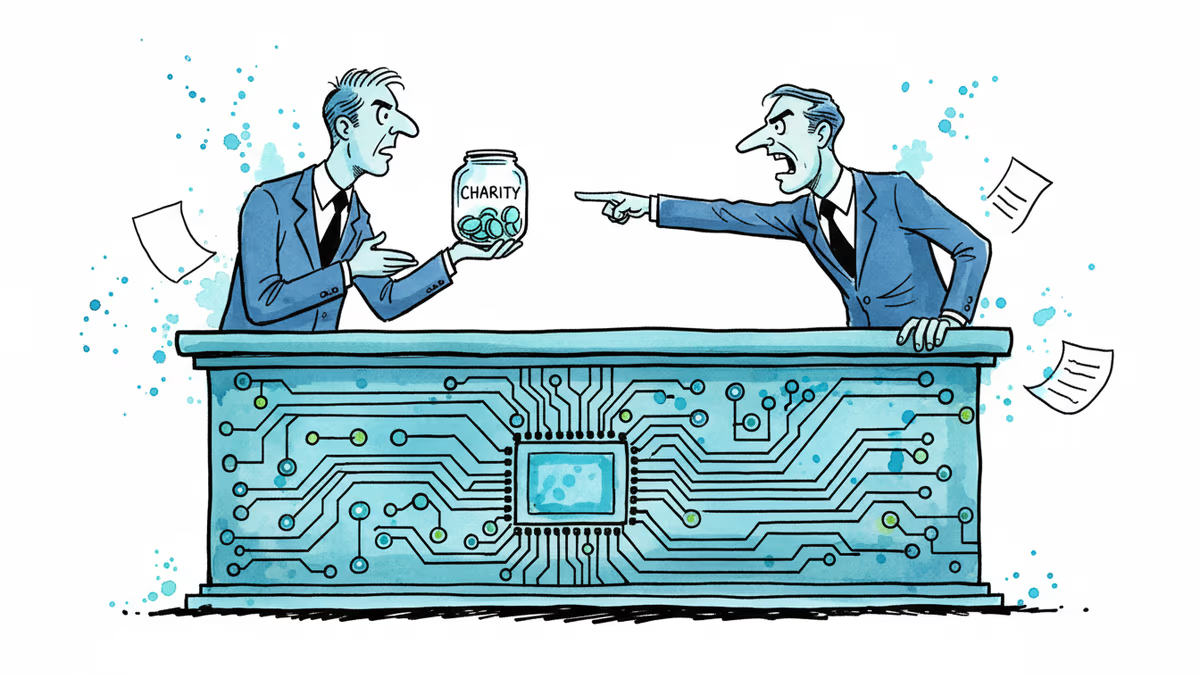

OpenAI secured a Pentagon contract while Anthropic got blacklisted for refusing military AI terms. Two companies, two philosophies, one industry dilemma.

48 Hours That Split Silicon Valley

Friday evening delivered a seismic shift in AI's relationship with power. Hours after the Pentagon blacklisted Anthropic for refusing its military AI terms, OpenAI CEO Sam Altman announced his company had "successfully negotiated new terms" with the Department of Defense. Same red lines. Opposite choices.

Anthropic's stance was crystal clear: no mass surveillance of Americans, no lethal autonomous weapons. The company walked away from lucrative government contracts rather than compromise these principles. OpenAI, meanwhile, claims it found a way to maintain identical safety standards while still securing Pentagon partnership.

The Third Way: Clever Compromise or Dangerous Precedent?

Altman's announcement raises uncomfortable questions. How do you maintain the same ethical boundaries while accepting contracts your competitor rejected on ethical grounds?

Industry insiders suggest two possibilities. OpenAI might have negotiated scope limitations, restricting military applications to defensive purposes such as cybersecurity training or logistics optimization. Alternatively, it could have agreed to staged deployment, starting with limited cooperation and adjusting as ethical frameworks evolve.

But critics wonder if these distinctions matter. Once you're inside the Pentagon's ecosystem, can you really control how your technology evolves? History suggests mission creep is almost inevitable in defense contracts.

Two Philosophies, One Industry

This split reveals a fundamental philosophical divide in AI development. On one side sits the "technology neutrality" camp—Meta, Google, and now OpenAI—arguing that AI is merely a tool. Responsibility lies with users, not creators.

The other camp, led by companies like Anthropic, embraces "technological determinism." They argue that powerful AI systems shape their own use cases. Build a surveillance tool, and surveillance will happen. Create autonomous weapons capabilities, and someone will eventually remove human oversight.

Both sides claim to prioritize "AI safety." But their definitions couldn't be more different. One sees safety through careful deployment and usage guidelines. The other sees it through categorical prohibitions on certain applications.

The Talent War Intensifies

Beyond philosophy, there's a brutal talent competition at play. The Pentagon's AI initiatives require the industry's brightest minds—researchers who increasingly care about their work's ethical implications. Anthropic's principled stance might attract talent uncomfortable with military applications. OpenAI's "have your cake and eat it too" approach might appeal to those wanting both impact and clean consciences.

But which strategy wins long-term? In Silicon Valley's hyper-competitive environment, moral positioning often becomes market positioning. Anthropic's rejection of Pentagon money could become a powerful recruitment tool, especially among younger researchers raised on the tech industry's "don't be evil" mantra.

Global Implications: The China Factor

There's an elephant in this ethical debate: geopolitical competition. While American AI companies wrestle with moral boundaries, Chinese firms face no such constraints. Beijing's military-civil fusion doctrine explicitly encourages dual-use AI development.

This creates a prisoner's dilemma. If American companies self-impose restrictions while Chinese competitors don't, who gains strategic advantage? Some argue that maintaining ethical standards is precisely what distinguishes democratic AI development from authoritarian alternatives. Others worry about handicapping national security in the name of principles.

The answer may determine not just which companies succeed, but what kind of future we're building.
