The 48-Hour AI Defense Deal That Changed Everything

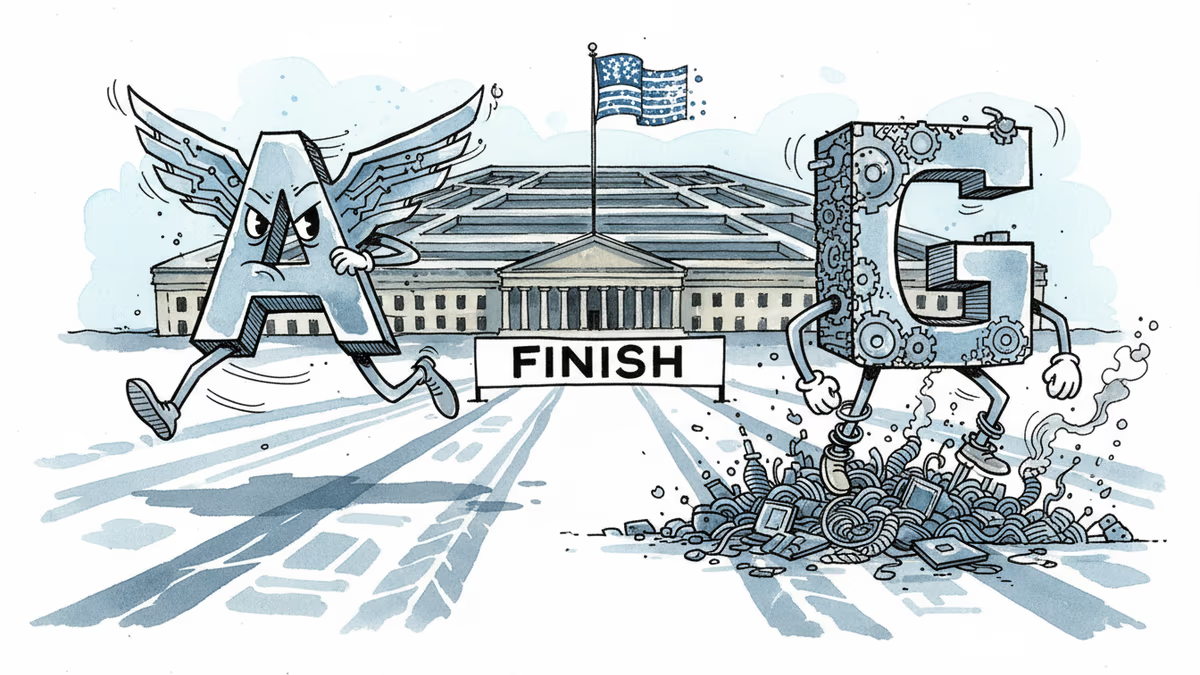

OpenAI secured a Pentagon contract in 48 hours after Anthropic's negotiations collapsed. But what's really behind this rushed military AI agreement?

When Friday's Failure Becomes Monday's Fortune

48 hours. That's all it took for the Pentagon's AI landscape to completely flip. On Friday, Anthropic's negotiations with the Department of Defense collapsed. By Monday, OpenAI had inked a deal for classified deployments.

The timeline reads like a corporate thriller: Trump directs federal agencies to stop using Anthropic's tech after a six-month transition. Defense Secretary Pete Hegseth designates the company a supply-chain risk. Then OpenAI swoops in with what CEO Sam Altman himself admits was a "definitely rushed" agreement.

"The optics don't look good," Altman conceded. So why rush into a deal that would trigger massive backlash?

Same Red Lines, Different Outcomes

Both companies claimed identical ethical boundaries: no fully autonomous weapons, no mass domestic surveillance, no high-stakes automated decisions like social credit systems. Yet only one walked away with a contract.

OpenAI's secret sauce? What they call a "multi-layered approach." While other AI companies "reduced or removed their safety guardrails," OpenAI claims it maintains "full discretion over our safety stack" through cloud deployment, cleared personnel oversight, and robust contractual protections.

Katrina Mulligan, OpenAI's head of national security partnerships, emphasized that "deployment architecture matters more than contract language." By limiting deployment to cloud APIs, she argues, "we can ensure that our models cannot be integrated directly into weapons systems, sensors, or other operational hardware."

The Surveillance Loophole Controversy

But critics aren't buying it. Tech analyst Mike Masnick argues the deal "absolutely does allow for domestic surveillance" because it references compliance with Executive Order 12333—the same order that allows the NSA to collect American communications by tapping lines outside the US.

Mulligan pushed back hard, calling such interpretations overly simplistic. "That's not how any of this works," she said, arguing that assuming "the only thing standing between Americans and the use of AI for mass domestic surveillance and autonomous weapons is a single usage policy provision" misunderstands the broader legal and technical framework.

The Real Stakes: Market Credibility

Here's what makes this story fascinating: Altman's brutal honesty about the risks. "If we are right and this does lead to a de-escalation between the DoD and the industry, we will look like geniuses," he said. "If not, we will continue to be characterized as rushed and uncareful."

The immediate market reaction shows how risky the gamble is. By Saturday, just one day after the announcement, Anthropic's Claude had overtaken ChatGPT in Apple's App Store rankings. Users were voting with their downloads.

The Anthropic Question

Perhaps the most intriguing mystery remains: Why couldn't Anthropic make a deal work? OpenAI claims ignorance: "We don't know why Anthropic could not reach this deal, and we hope that they and more labs will consider it."

But industry insiders suggest the answer might lie in corporate structure and investor pressure. OpenAI's more traditional corporate governance might allow a flexibility that Anthropic's benefit corporation status does not. Or perhaps Anthropic simply drew harder lines on what "ethical AI" means in practice.
