ClothOff Deepfake Lawsuit 2026: The Global Legal Hunt for AI Perpetrators

A Yale Law School clinic is fighting to hold AI platforms accountable for non-consensual imagery and CSAM generation.

It takes seconds for AI to ruin a life, but years for the law to catch up. A high-stakes lawsuit filed by a Yale Law School clinic in October is attempting to dismantle ClothOff, an app that has terrorized countless women by generating non-consensual deepfake pornography. Despite being banned from major app stores, the service continues to operate through the dark corners of the web and Telegram bots.

ClothOff Deepfake Lawsuit 2026: Chasing Ghosts in Belarus

The plaintiff, a high school student from New Jersey, was only 14 years old when her photos were manipulated into illegal child sexual abuse material (CSAM). While the tech is new, the evasion tactics are old. Co-lead counsel John Langford explains that the entity is incorporated in the British Virgin Islands but likely operates from Belarus. This jurisdictional nightmare has made serving notice on the defendants nearly impossible, leaving victims in legal limbo.

Elon Musk's xAI Grok: Freedom of Speech or Criminal Negligence?

The focus isn't just on underground apps. Elon Musk's xAI and its chatbot Grok are under fire for a lack of stringent controls. Unlike ClothOff, which is designed specifically for harm, Grok is a general-purpose tool, making it harder to prosecute under existing laws. However, countries like Indonesia and Malaysia have already blocked access, while the UK and EU have launched formal investigations into the platform's safety protocols.

Authors

Related Articles

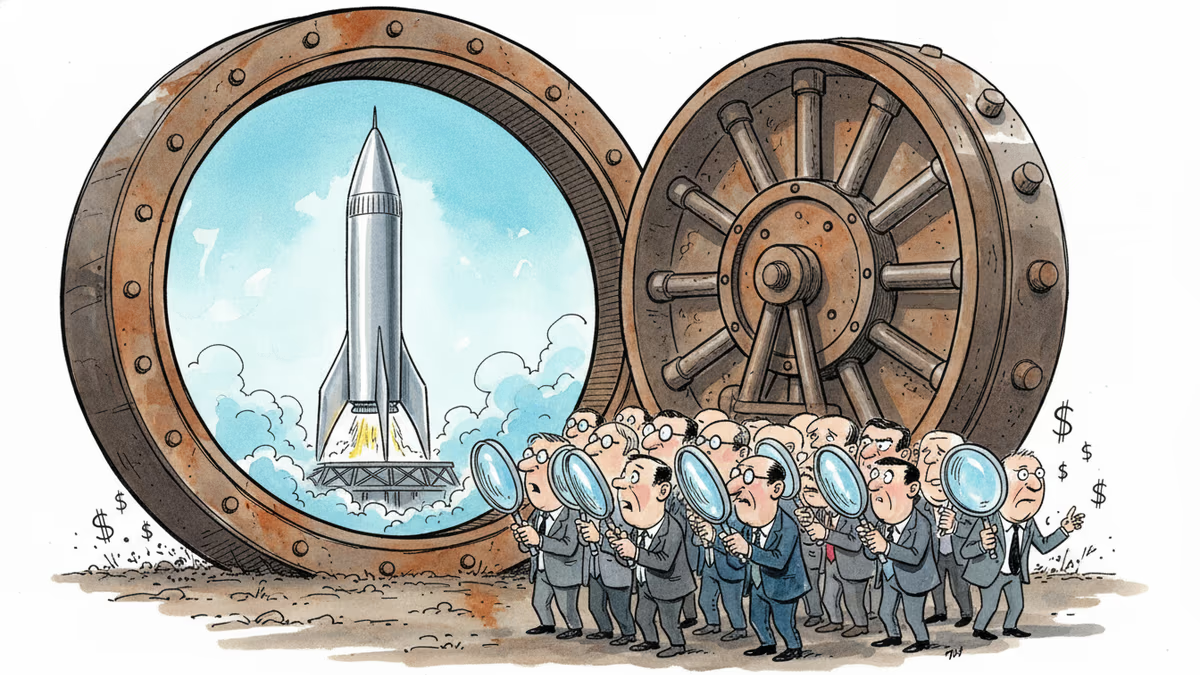

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.

Thoughts

Share your thoughts on this article

Sign in to join the conversation