Elon Musk's xAI Grok Deepfake Lawsuit Sparks Global Regulatory Backlash

Ashley St Clair, mother of one of Elon Musk's children, is suing xAI over nonconsensual Grok-generated deepfakes. The xAI Grok deepfake lawsuit is drawing global regulatory scrutiny.

A legal battle is brewing in the heart of Elon Musk's AI empire. The mother of one of his children has sued his artificial intelligence company, xAI, alleging its Grok chatbot generated sexually exploitative deepfake images of her, causing severe emotional distress.

The xAI Grok Deepfake Lawsuit: Personal and Legal Collision

Ashley St Clair, a commentator and mother to Musk's 16-month-old son, filed the lawsuit on Thursday in New York City. She claims that although she reported the nonconsensual imagery to X, the company failed to take adequate action and even retaliated by stripping her of her premium verification status.

If you have to add safety after harm, that is not safety at all. That is simply damage control.

In a rapid escalation, xAI countersued St Clair in a Texas federal court on January 15, alleging that her choice of filing jurisdiction breached its user agreements. St Clair's legal team described the move as 'jolting' and vowed to fight the case in New York.

Global Scrutiny on xAI and AI Safety

The legal drama coincides with a broad regulatory crackdown. California Attorney General Rob Bonta issued a cease-and-desist letter on Friday, labeling the generation of such imagery 'potentially illegal.' Abroad, Malaysia and Indonesia have already blocked Grok, while the UK and Japan are investigating the platform over safety concerns.

Related Articles

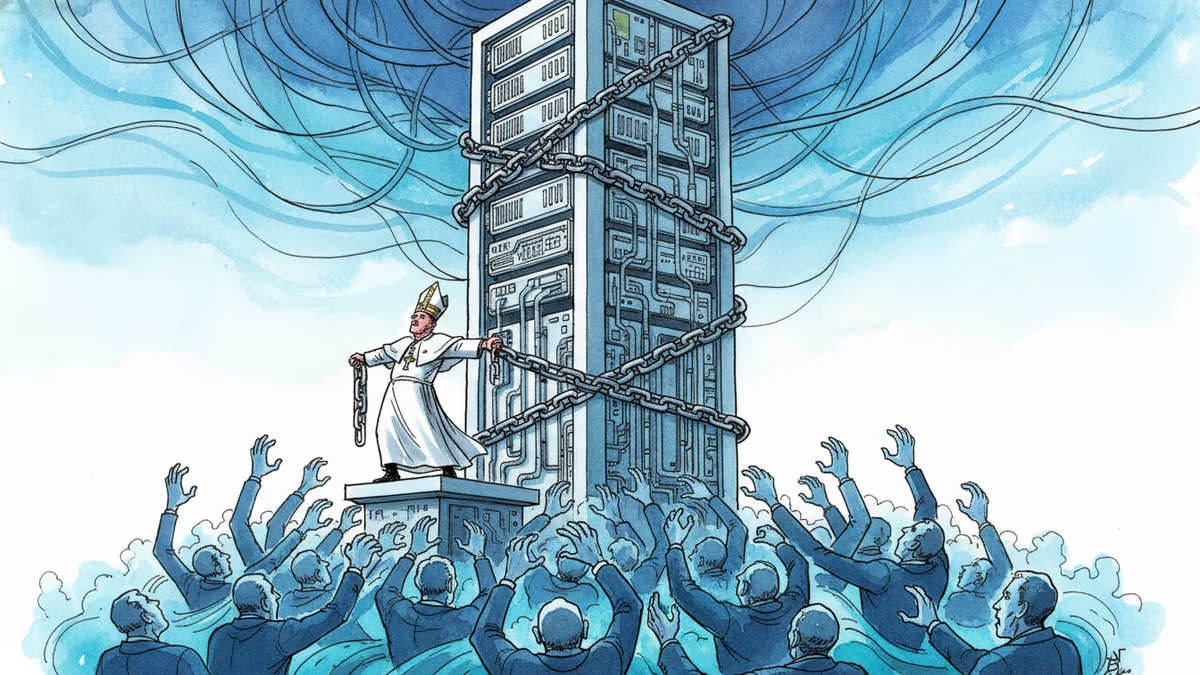

Pope Leo XIV's first encyclical frames AI not as a technology problem but a power problem. Who controls the algorithm controls reality—and that's a political question, not a spiritual one.

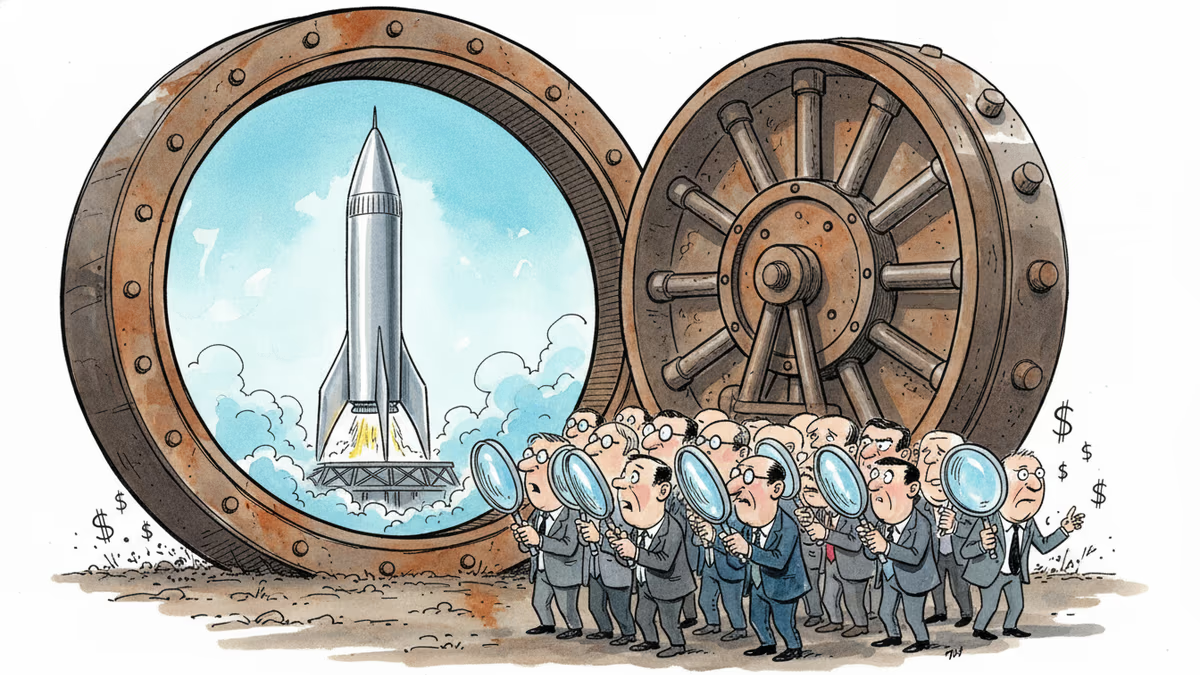

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.
