When the Pentagon Treats an AI Startup Like an Enemy State

The Defense Department designated Anthropic as a supply-chain risk, but Microsoft and Google confirmed they'll keep offering Claude to customers. A new chapter in Silicon Valley's military AI tensions.

Not China, Not Russia—An American AI Startup Gets the 'Enemy' Treatment

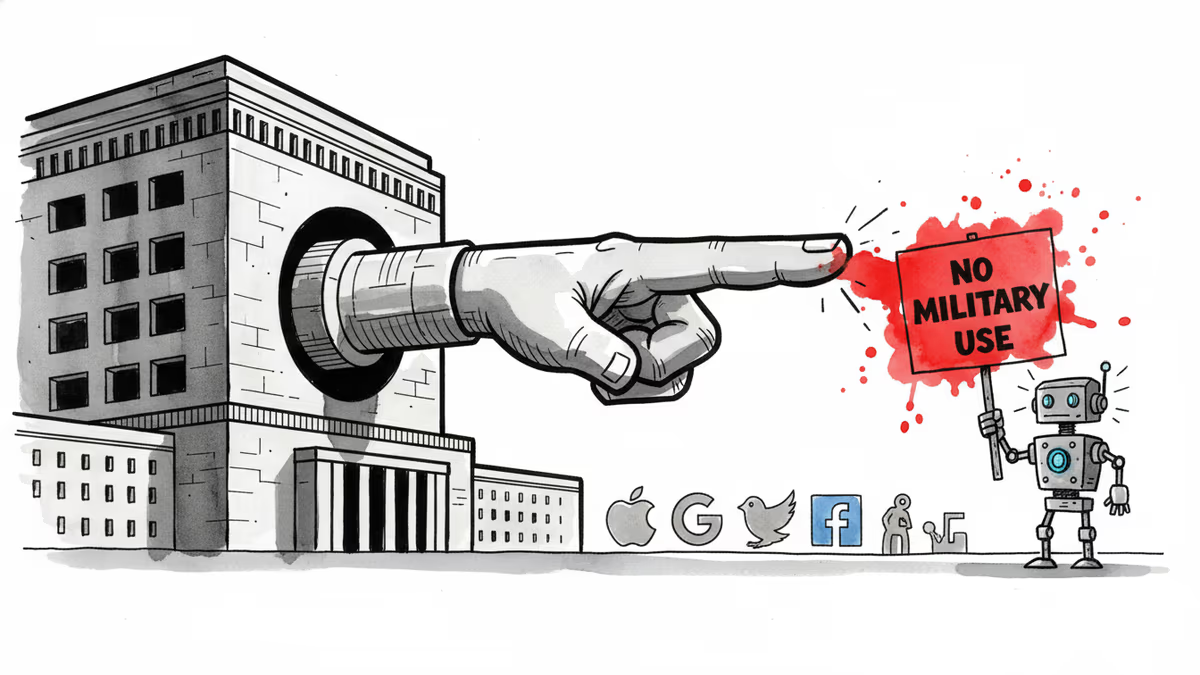

On Thursday, the Pentagon slapped Anthropic with a supply-chain risk designation—a label typically reserved for foreign adversaries like China or Russia. It's the first time an American AI startup has received this treatment.

The trigger was straightforward: The Defense Department demanded unrestricted access to Claude AI models, and Anthropic said no. The company argued it couldn't safely support uses like mass surveillance and fully autonomous weapons.

The Pentagon's response was sweeping. It banned Anthropic products from its systems and required any company or agency working with the Defense Department to certify that it does not use Anthropic's models either. CEO Dario Amodei vowed to fight the designation in court.

Big Tech's Calculated Gamble: Customers vs. Pentagon

But here's where it gets interesting. Microsoft and Google quickly announced they'd continue offering Claude to their customers anyway.

A Microsoft spokesperson said their lawyers concluded that "Anthropic products, including Claude, can remain available to our customers—other than the Department of War—through platforms such as M365, GitHub, and Microsoft's AI Foundry." Google echoed this, confirming Claude would stay available through Google Cloud for non-defense projects.

AWS customers can reportedly keep using Claude for non-defense workloads too.

Silicon Valley's Fundamental Dilemma Exposed

This standoff reveals a deeper tension brewing in Silicon Valley. When AI companies refuse military applications in the name of "ethical AI," how far can the state push back?

Anthropic's refusal wasn't just business—it was philosophical. The founders left OpenAI partly over disagreements about AI safety. But the Pentagon's supply-chain designation is essentially declaring them a national security threat.

Ironically, Claude's consumer growth has kept surging amid the Pentagon feud. If anything, being the "AI the Defense Department doesn't want" might be a marketing advantage.

The Enterprise Calculation

For businesses using Claude through Microsoft or Google, this creates an interesting dynamic. They can keep their AI workflows intact while technically staying compliant with Pentagon requirements—as long as those uses aren't defense-related.

But the designation raises thorny questions. What counts as "defense-related"? If a contractor uses Claude for internal HR tasks while also working on Pentagon projects, does that violate the rules?
