A $380B AI Startup's Fate Hangs on Three Words

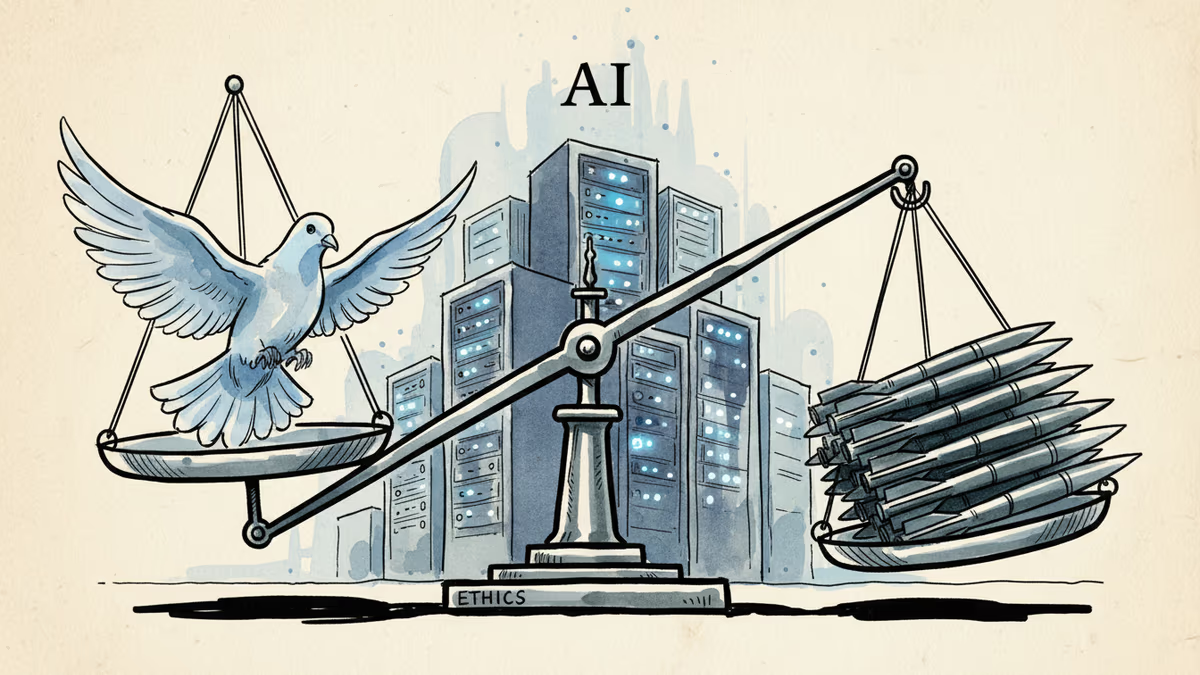

Anthropic's standoff with the Pentagon over 'any lawful use' terms reveals the battle for AI's soul between ethics and military applications.

Three Words That Could Redefine AI Forever

"Any lawful use." Three words. $380 billion at stake. Anthropic's weeks-long battle with the Pentagon has boiled down to this phrase—one that could give the US military carte blanche to use AI for mass surveillance and fully autonomous weapons.

While OpenAI and xAI have reportedly already signed on, Anthropic is holding out. The question isn't just about one company's ethics policy. It's about whether AI will have guardrails or become an unrestricted tool of warfare.

When "Lawful" Doesn't Mean "Right"

The Pentagon wants unlimited access. Anthropic wants to prevent its technology from directly killing people. But "lawful use" is a masterclass in ambiguity. Technically lawful? Mass surveillance programs. Drone strikes without human oversight. Predictive policing that targets minorities.

Through unnamed officials speaking to the media, Pentagon CTO Emil Michael has made the stakes clear: comply or get left behind. The message is working. OpenAI, which banned military applications just last year, has reversed course completely.

The Domino Effect

Elon Musk's xAI followed suit. The logic is brutal but simple: refuse, and watch competitors capture billions in defense contracts. Accept, and join the military-AI complex that's reshaping global power.

This isn't just about American companies. Every major AI player worldwide is watching. DeepMind, Mistral, even smaller startups—they're all calculating whether ethical stances are luxuries they can afford.

The European Dilemma

The EU's AI Act does not settle the matter: it exempts AI systems used exclusively for military purposes from its scope. So as American companies gain military contracts, European firms face a choice: stick to principles or lose competitiveness. Mistral already sparked controversy by taking Pentagon money.

Meanwhile, China's military-AI integration proceeds without such debates. The West's ethical hand-wringing might be admirable, but it's happening while authoritarian regimes race ahead.

What "Autonomous" Really Means

Lethal autonomous weapons aren't science fiction. They're here. Air-defense systems such as Israel's Iron Dome already select and intercept incoming rockets faster than a human operator could react. The question is whether we'll normalize AI that can hunt, identify, and eliminate targets with zero human oversight.

Anthropic's resistance matters because its Claude model is considered among the most capable. If the company caves, it signals that no AI company, regardless of stated values, can resist military pressure indefinitely.

The Investor Calculus

Venture capitalists are watching closely. Military contracts offer stable, massive revenue streams. But they also bring reputational risks and potential talent flight. Some of Silicon Valley's brightest minds didn't sign up to build killing machines.

The talent war is real. Top AI researchers increasingly care about where their work ends up. Anthropic has attracted ethically minded talent partly because of its safety stance. Abandoning that stance could trigger an exodus.
