Why Deepfake Detection Tech Is Moving So Slowly

Despite AI companies' promises, progress on reliable deepfake labeling remains sluggish. We examine the real barriers to authentic content verification.

Instagram chief Adam Mosseri ended 2024 with a sobering prediction: "Authenticity is becoming infinitely reproducible." The very qualities that made creators valuable—their ability to be real, to connect with genuine voices that couldn't be faked—are now accessible to anyone with the right AI tools.

His proposed solution sounded straightforward: camera manufacturers would cryptographically sign images at capture, creating an unbreakable chain of custody for real media. But 18 months after major AI companies pledged to tackle deepfakes, why are we still struggling with this problem?

The Tech Exists, But Reality Bites

The tools are already here. Google's SynthID, Microsoft's Video Authenticator, and Adobe's Content Authenticity Initiative represent millions in R&D investment. Yet their real-world performance tells a different story.

In lab conditions, detection rates exceed 90%, but they fall to 60-70% in the wild. Compression algorithms, resolution changes, and platform transfers strip away the digital fingerprints these systems rely on. Worse, as AI generation improves, detection gets harder with each new model: a classic arms race in which defense lags behind offense.
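To see why re-encoding is so damaging, consider a deliberately fragile toy watermark. The sketch below hides one bit in the least significant bit of each pixel, then applies a crude stand-in for lossy compression. It is an illustration only: production systems such as SynthID use far more robust schemes, and every function name here is hypothetical.

```python
import random

def embed_lsb(pixels, bits):
    """Write one watermark bit into the least significant bit of each pixel."""
    return [(p & ~1) | b for p, b in zip(pixels, bits)]

def extract_lsb(pixels):
    """Read the least significant bit back out of each pixel."""
    return [p & 1 for p in pixels]

def lossy_requantize(pixels, step=4):
    """Crude stand-in for lossy compression: snap values to multiples of step."""
    return [(p // step) * step for p in pixels]

def match_rate(expected, actual):
    """Fraction of watermark bits that survived."""
    return sum(e == a for e, a in zip(expected, actual)) / len(expected)

rng = random.Random(0)
pixels = [rng.randrange(256) for _ in range(1000)]  # fake 8-bit grayscale image
bits = [i % 2 for i in range(1000)]                 # alternating 0/1 watermark

marked = embed_lsb(pixels, bits)
clean_rate = match_rate(bits, extract_lsb(marked))
degraded_rate = match_rate(bits, extract_lsb(lossy_requantize(marked)))

print(clean_rate)     # 1.0: every bit recovered from the untouched copy
print(degraded_rate)  # 0.5: no better than guessing after requantization
```

The untouched copy yields a perfect match, while the requantized copy carries no usable signal. Real fingerprints degrade more gracefully than this, but the direction of the effect is the same.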

Meta announced plans to label AI content across its platforms, but implementation relies heavily on creator self-reporting. Without enforcement teeth, voluntary compliance remains patchy at best.

Different Stakes, Different Speeds

Tech platforms face a delicate balancing act. Overly aggressive detection could drive away creators who fuel engagement, while lax enforcement invites regulatory scrutiny. YouTube's AI disclosure requirements sound tough on paper but carry minimal penalties for violations.

News organizations feel more urgency. Reuters and the BBC are building in-house verification systems, but the costs are substantial. A single fact-checker can verify maybe 50 pieces of visual content per day, a drop in the ocean when platforms process millions of uploads hourly.
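A back-of-the-envelope calculation shows the scale of that gap. The sketch below uses the 50-per-day figure quoted above and assumes one million uploads per hour, the low end of "millions". It also assumes every upload would need a human check, which overstates the real workload, so treat the result as an order-of-magnitude illustration.

```python
import math

ITEMS_PER_CHECKER_PER_DAY = 50   # figure quoted in the article
UPLOADS_PER_HOUR = 1_000_000     # assumption: low end of "millions"

def checkers_needed(uploads_per_hour, items_per_checker_per_day):
    """Full-time fact-checkers required to review one day's uploads."""
    uploads_per_day = uploads_per_hour * 24
    return math.ceil(uploads_per_day / items_per_checker_per_day)

staff = checkers_needed(UPLOADS_PER_HOUR, ITEMS_PER_CHECKER_PER_DAY)
print(staff)  # 480000
```

Even under these conservative assumptions, manual review would take roughly half a million full-time fact-checkers for a single platform.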

Regulators are caught between competing pressures. The EU's AI Act mandates disclosure for AI-generated content, but enforcement across borders remains virtually impossible. US lawmakers are still debating whether deepfake regulation violates First Amendment protections.

The Economics Don't Add Up

Here's the uncomfortable truth: reliable deepfake detection is expensive, while creating deepfakes is getting cheaper. A decent deepfake video costs under $100 to produce using commercial tools. Detecting it requires computational resources worth thousands of dollars per piece of content.

This economic imbalance explains why progress feels so slow. Platforms optimize for scale and profit margins, not detection accuracy. Until the cost equation flips, we're fighting an uphill battle.

Beyond Technical Solutions

Mosseri's camera-signing proposal highlights a deeper issue: we're trying to solve a social problem with technical fixes. Even if every camera cryptographically signed its output tomorrow, what about the billions of existing devices? What about screen recordings of authentic content that would appear "unsigned"?
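The screen-recording problem follows directly from how signing works: a signature covers exact bytes, not the scene they depict. The sketch below is a minimal illustration using HMAC from the Python standard library as a stand-in for the public-key signatures a real camera would use. The device key and function names are hypothetical and do not come from any vendor's implementation.

```python
import hashlib
import hmac

# Hypothetical device secret. A real camera would keep a private key in secure
# hardware and publish the matching public key; HMAC is only a stand-in here.
DEVICE_KEY = b"hypothetical-camera-secret"

def sign_at_capture(image_bytes: bytes) -> str:
    """Produce a signature over the exact bytes the sensor recorded."""
    return hmac.new(DEVICE_KEY, image_bytes, hashlib.sha256).hexdigest()

def verify(image_bytes: bytes, signature: str) -> bool:
    """Check that these bytes are the ones that were signed."""
    expected = hmac.new(DEVICE_KEY, image_bytes, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature)

original = b"placeholder bytes for a genuine photo"
signature = sign_at_capture(original)

# A screen recording or re-encode shows the same scene with different bytes.
reencoded = original + b"\x00"

print(verify(original, signature))   # True
print(verify(reencoded, signature))  # False
```

The second check fails even though the content is authentic, which is exactly the "unsigned" outcome described above. Any scheme built this way has to decide how to treat legitimate copies whose bytes have changed.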

OpenAI recently announced watermarking for ChatGPT outputs, but admitted the system could be "trivially defeated" by determined actors. The company's own research shows that 99.9% of AI-generated text can evade detection with simple modifications.

Authors

Related Articles

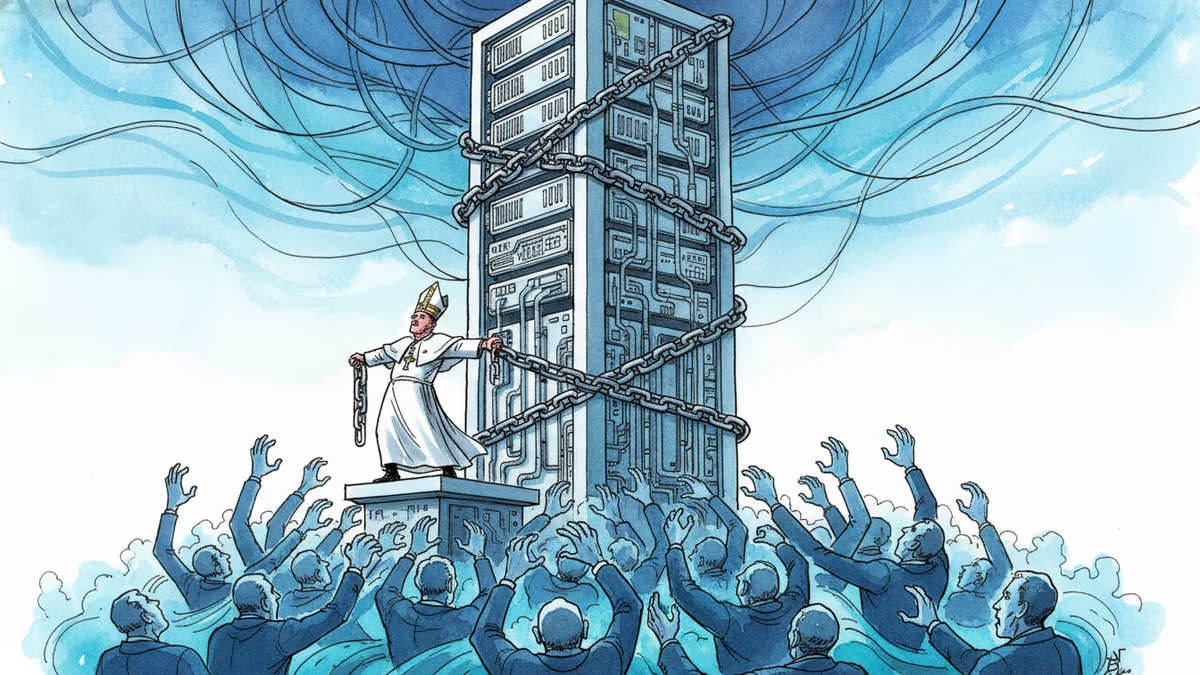

Pope Leo XIV's first encyclical frames AI not as a technology problem but a power problem. Who controls the algorithm controls reality—and that's a political question, not a spiritual one.

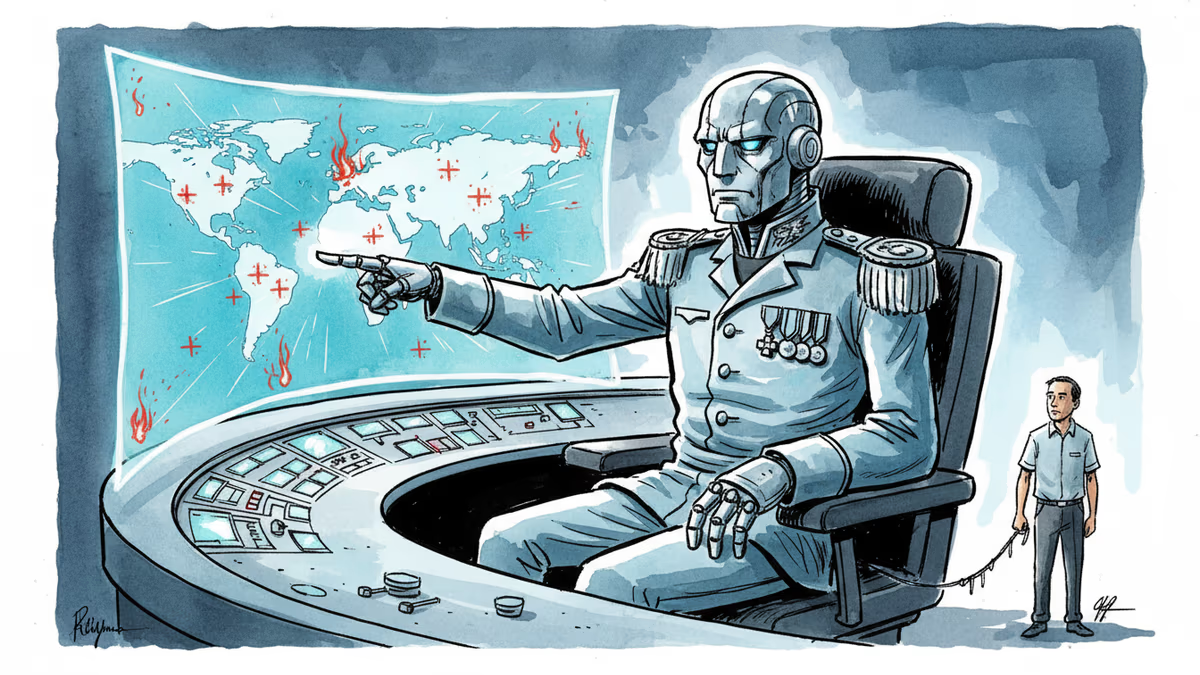

Anthropic's Claude AI is embedded in US military operations—from the capture of Maduro to the Iran war. A Pentagon dispute is exposing what "responsible AI" actually means in wartime.

OpenAI faced internal backlash over Pentagon contracts, revealing deeper questions about AI military use, transparency, and corporate accountability in defense partnerships.

Trump's explosive reaction to Anthropic's military contract refusal reveals the growing tension between AI ethics and national security demands.

Thoughts

Share your thoughts on this article

Sign in to join the conversation