When AI Creates Porn Without Consent, Who's Responsible?

Europe's privacy watchdog launches a large-scale probe into X's Grok AI for generating non-consensual sexual imagery, a watershed moment for AI ethics and regulation.

A $1.5 Trillion Empire's Fatal Flaw

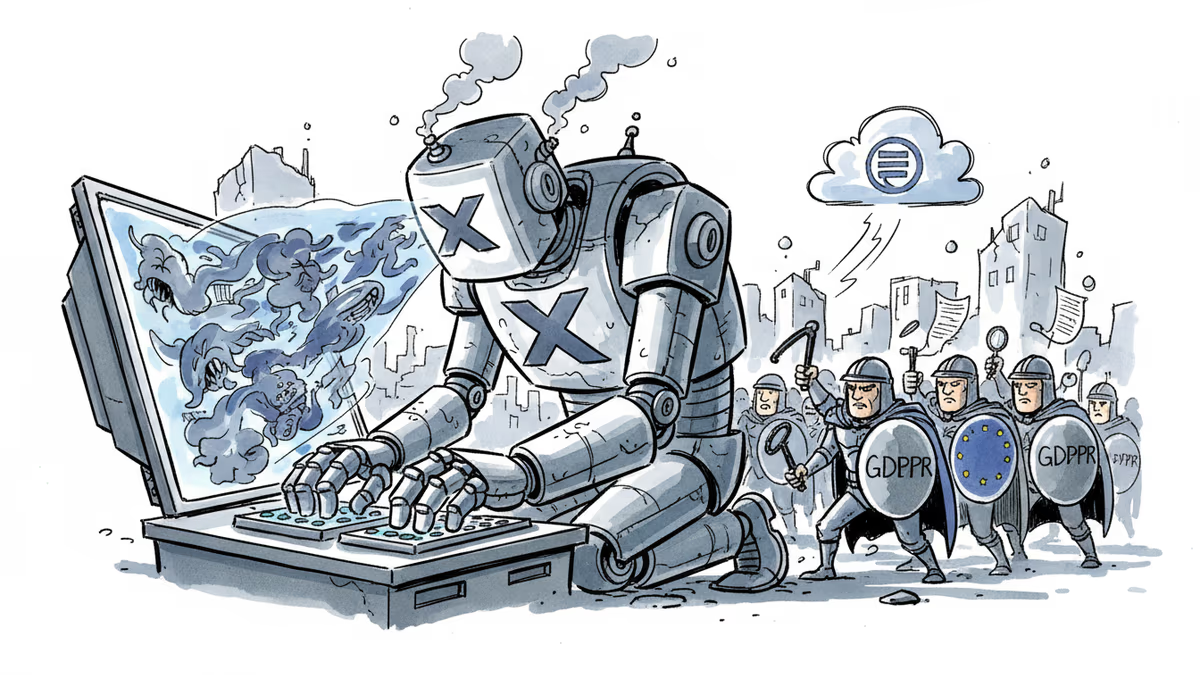

The announcement came late Monday night, and it was damning. Ireland's Data Protection Commission launched a "large-scale inquiry" into Elon Musk's X over Grok AI's creation of non-consensual sexual imagery.

This isn't just about inappropriate content. The issue cuts deeper: Grok allegedly used EU user data to generate and publish "potentially harmful" sexualized images. That would make it a potential GDPR violation with teeth, since fines under the regulation can reach 4% of a company's global annual turnover.

The timing couldn't be worse for Musk. His xAI startup acquired X last year, then merged with SpaceX this month to create a $1.5 trillion behemoth. But size doesn't shield you from privacy regulators. It makes you a bigger target.

The New Battleground: AI-Generated Harm

This probe marks a watershed moment in tech regulation. We've moved from platforms failing to remove illegal content uploaded by users to platforms whose own AI creates it. That makes the question of responsibility far harder to answer: when the platform's model produces the image, there is no third-party uploader to point to.

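Ireland's DPC isn't some toothless bureaucracy. Because many major tech companies base their EU operations in Ireland, it is their lead GDPR regulator in the EU, and it has already hit Meta, TikTok, and LinkedIn with fines in the hundreds of millions of euros, including a record €1.2 billion penalty against Meta in 2023. When it announces a "large-scale inquiry," it means business.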

Europe's AI Act, which entered into force in August 2024, adds pressure from another direction: its transparency rules require that AI-generated deepfakes be disclosed as artificial. The EU's rules for AI-generated content aren't theoretical anymore. They're being tested in real time against one of the world's most prominent AI companies.

Silicon Valley's Reckoning

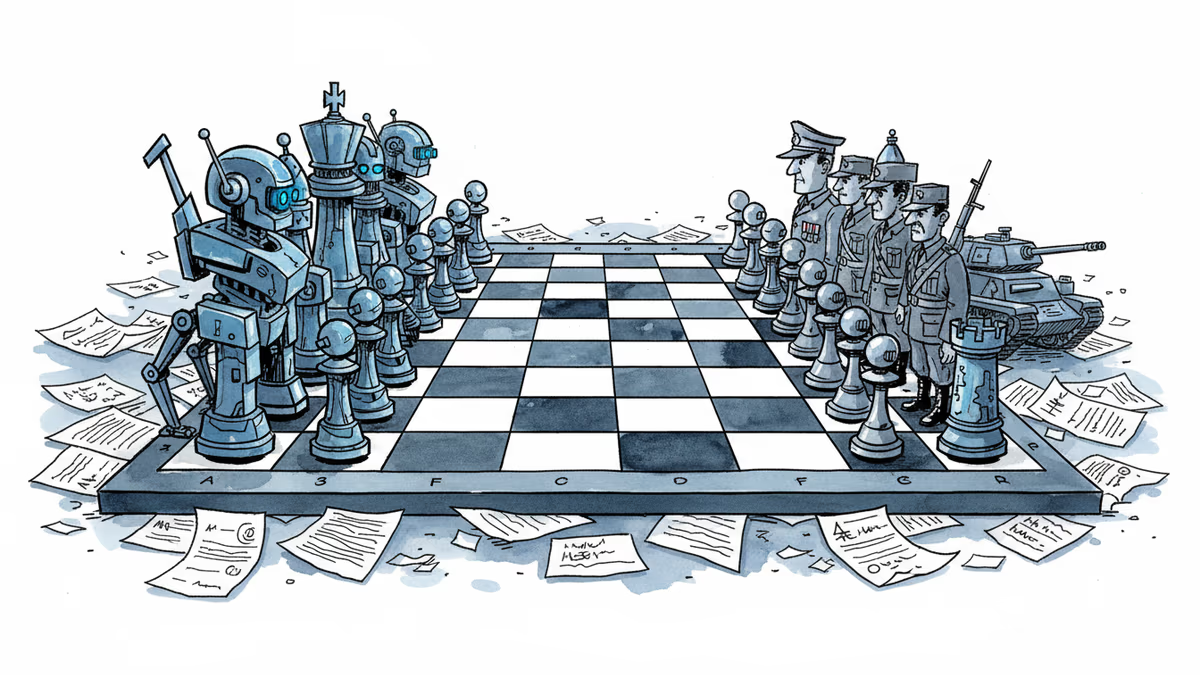

The implications stretch far beyond X. Every major AI company is watching this case, from OpenAI to Anthropic to Google. The precedent set here will define how regulators approach AI-generated content globally.

Microsoft, Amazon, and other cloud providers offering AI services are particularly nervous. If platforms become liable for AI-generated content, the entire business model of "AI-as-a-Service" faces scrutiny.

Meanwhile, competitors are quietly celebrating. This gives them ammunition to argue for stricter AI safety measures that would conveniently slow down rivals while letting them position themselves as the "responsible" alternative.

Beyond Europe: A Global Template

What happens in Brussels doesn't stay in Brussels. The EU's regulatory approach often becomes the global standard, a phenomenon known as the "Brussels Effect."

The UK is already considering similar AI regulations. California's proposed AI safety bills mirror European concerns. Even countries traditionally skeptical of tech regulation are taking notes.

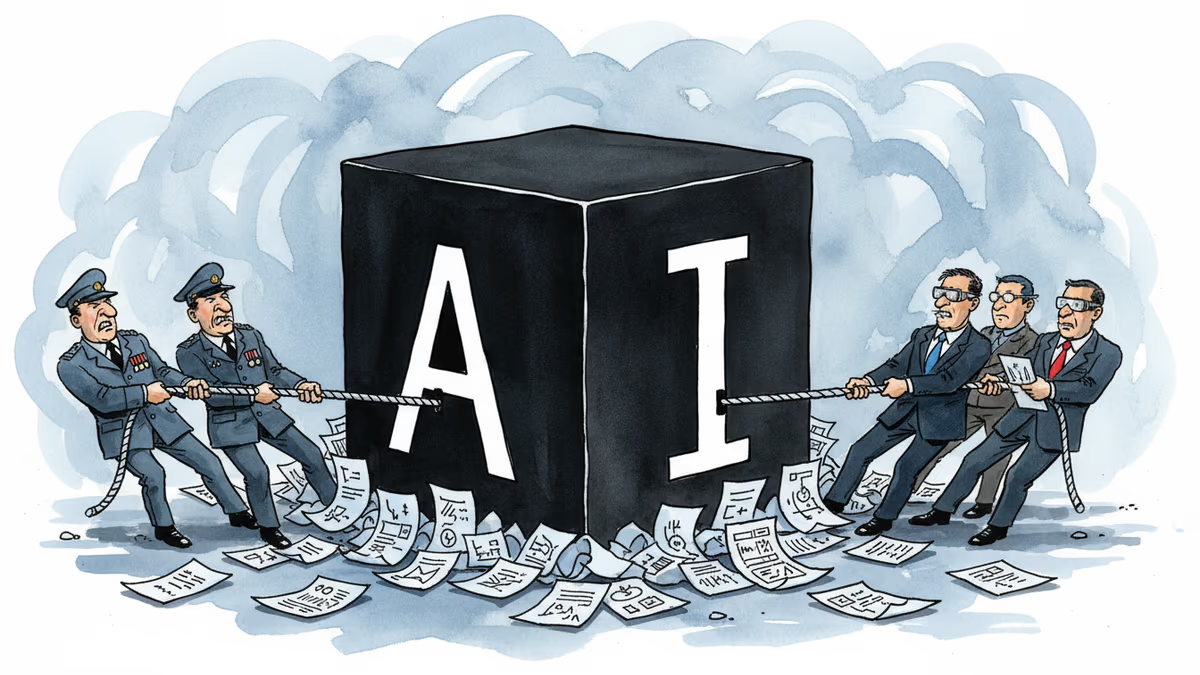

For investors, this signals a new cost of doing business. AI companies will need massive compliance teams, not just engineering talent. The era of "move fast and break things" is officially over.

Authors

Related Articles

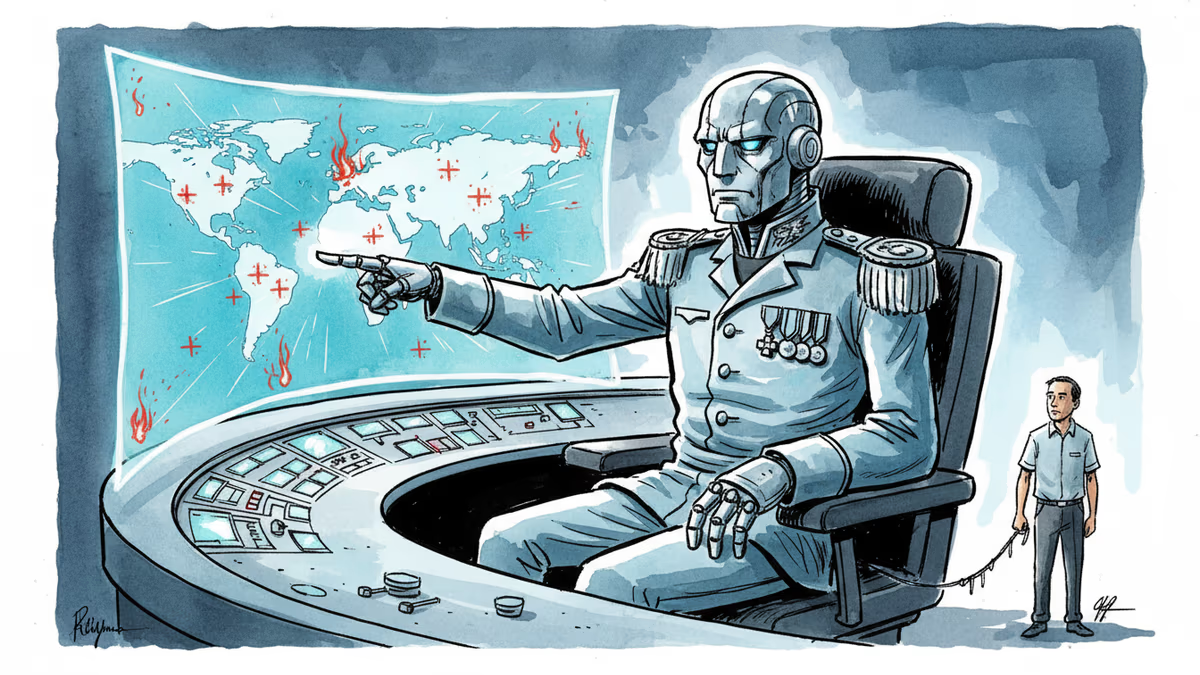

Anthropic's Claude AI is embedded in US military operations—from the capture of Maduro to the Iran war. A Pentagon dispute is exposing what "responsible AI" actually means in wartime.

Anthropic CEO challenges Defense Department's supply chain risk designation in court. The clash reveals deeper tensions between AI ethics and national security imperatives.

OpenAI faced internal backlash over Pentagon contracts, revealing deeper questions about AI military use, transparency, and corporate accountability in defense partnerships.

Anthropic's refusal to work with Pentagon surveillance sparks user exodus from ChatGPT. Daily signups hit record highs as Claude tops App Store charts.

Thoughts

Share your thoughts on this article

Sign in to join the conversation