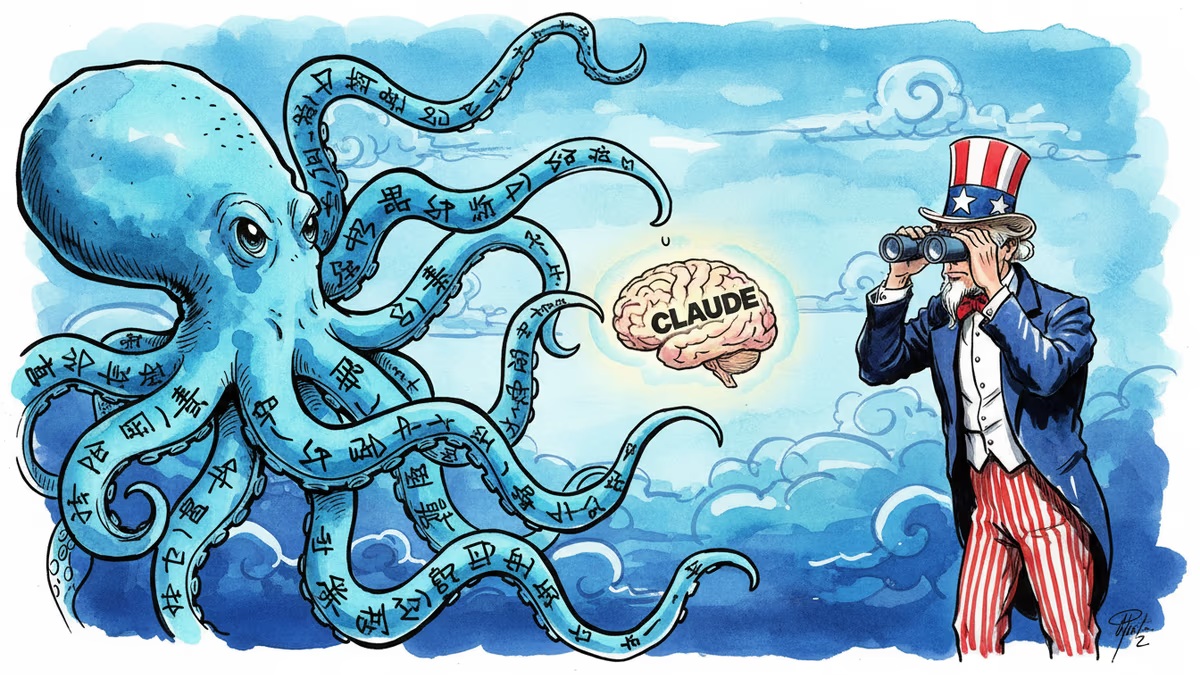

China's AI Labs Caught Red-Handed Copying Claude

Anthropic exposes how DeepSeek, Moonshot AI, and MiniMax used 24,000+ fake accounts to steal Claude's capabilities through 16 million conversations. The scale and implications revealed.

The 16 Million Conversation Heist

What does industrial-scale AI theft look like? Anthropic just gave us the answer. Three Chinese AI companies—DeepSeek, Moonshot AI, and MiniMax—created over 24,000 fake accounts to engage Claude in 16 million conversations. Their goal wasn't small talk. It was systematic "distillation" to copy Claude's most valuable capabilities: agentic reasoning, tool use, and coding.

This isn't your typical corporate espionage. It's AI homework copying at unprecedented scale, and it's reshaping how we think about protecting intellectual property in the age of artificial intelligence.

Three Different Approaches to the Same Crime

Each company had its own strategy. DeepSeek ran 150,000 exchanges focused on foundational logic and—tellingly—finding workarounds for policy-sensitive queries. They weren't just copying capabilities; they were learning how to bypass safety measures.

Moonshot AI went bigger with 3.4 million exchanges targeting agentic reasoning, coding, and computer vision. Last month's release of their Kimi K2.5 model and coding agent suddenly makes more sense.

But MiniMax was the most brazen. Their 13 million exchanges focused on coding and tool orchestration. When Claude's latest model launched, Anthropic watched in real time as MiniMax redirected nearly half its traffic to siphon the new capabilities.

The Export Control Smoking Gun

Timing matters. This revelation comes just after the Trump administration loosened AI chip export restrictions on China, allowing companies like Nvidia to sell advanced H200 chips there. Critics warned this would accelerate China's AI development at a critical moment in the global race.

Anthropic's findings provide ammunition for the hawks. "This scale of extraction requires access to advanced chips," the company argues. "Distillation attacks therefore reinforce the rationale for export controls: restricted chip access limits both direct model training and the scale of illicit distillation."

CrowdStrike co-founder Dmitri Alperovitch wasn't surprised: "It's been clear for a while that part of the reason for rapid Chinese AI progress has been theft via distillation. Now we know this for a fact."

Beyond Corporate Competition: National Security

The stakes extend beyond market share. U.S. AI companies build safeguards to prevent their models from helping develop bioweapons or conduct malicious cyber activities. Models created through illicit distillation are unlikely to retain these protections.

The risk multiplies when authoritarian governments deploy these "safety-free" models for "offensive cyber operations, disinformation campaigns, and mass surveillance"—especially if they're open-sourced for wider distribution.

The Defense Dilemma

Anthropic says it is investing in better defenses to make distillation attacks "harder to execute and easier to identify." It is also calling for "a coordinated response across the AI industry, cloud providers, and policymakers."

The challenge is fundamental: How do you protect AI capabilities while maintaining the open access that drives innovation? Traditional IP protection assumes you can control who uses your product. But an AI model reveals some of its capabilities in every response, and those responses can be collected to train a competitor.

DeepSeek, MiniMax, and Moonshot AI have not yet responded to requests for comment.
