The Three Frontiers That Will Define AI's Next Chapter

Google Cloud VP reveals why AI models aren't just competing on intelligence anymore. Three distinct battlegrounds are reshaping how businesses think about AI deployment.

When 45 Minutes Doesn't Matter (And When It Does)

Michael Gerstenhaber has a front-row seat to how companies actually use AI. As VP of Vertex AI at Google Cloud, he watches enterprises deploy models across industries, and what he's seeing challenges everything we think we know about the AI race.

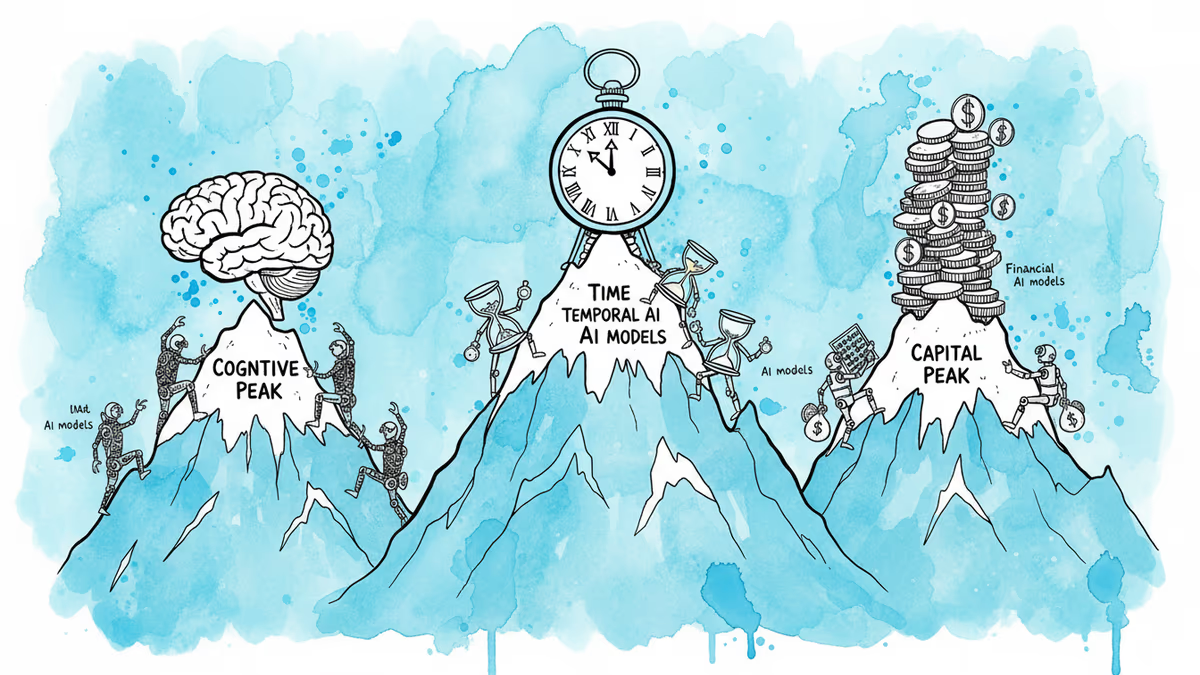

Most people see AI as a single competition: who can build the smartest model? But Gerstenhaber has identified something more nuanced. AI models are actually competing on three different frontiers at once, and the winner depends on which frontier matters most for a given workload.

"It's not just about intelligence anymore," he explains. "We're seeing three distinct boundaries that models are pushing against."

Frontier One: Raw Intelligence

"Think about writing code," Gerstenhaber says. "You just want the best code you can get. Doesn't matter if it takes 45 minutes, because I have to maintain it, I have to put it in production. I just want the best."

This is where models like Gemini Pro compete. Time is irrelevant. Quality is everything. Complex analysis, creative work, strategic planning—tasks where getting it right once is worth waiting for.

Anthropic and OpenAI have been dueling primarily on this frontier, each release claiming higher scores on reasoning benchmarks. But Gerstenhaber's insight suggests this might be missing the bigger picture.

Frontier Two: Response Time

"If I'm doing customer support and I need to know how to apply a policy, you need intelligence to apply that policy," he notes. "But it doesn't matter how right you are if it took 45 minutes to get the answer."

Real-time interaction changes everything. Customer service, medical diagnosis, financial trading—contexts where "good enough, right now" beats "perfect, eventually." Here, models compete on delivering adequate intelligence within strict latency budgets.

This explains why companies like Anthropic have been releasing smaller, faster versions of their flagship models. It's not about dumbing down AI—it's about recognizing a fundamentally different use case.

Frontier Three: Infinite Scale

"Somebody like Reddit or Meta wants to moderate the entire internet," Gerstenhaber explains. "They have large budgets, but they can't take an enterprise risk on something if they don't know how it scales."

The third frontier isn't about capability—it's about unpredictable, massive deployment. Social media moderation, spam filtering, content analysis at internet scale. These applications need models that can handle sudden 10x spikes in demand without breaking the budget.

This is perhaps the most overlooked battleground. While everyone focuses on benchmark scores, some of the most valuable AI applications require models that are "smart enough" and that scale to enormous volumes at predictable cost.

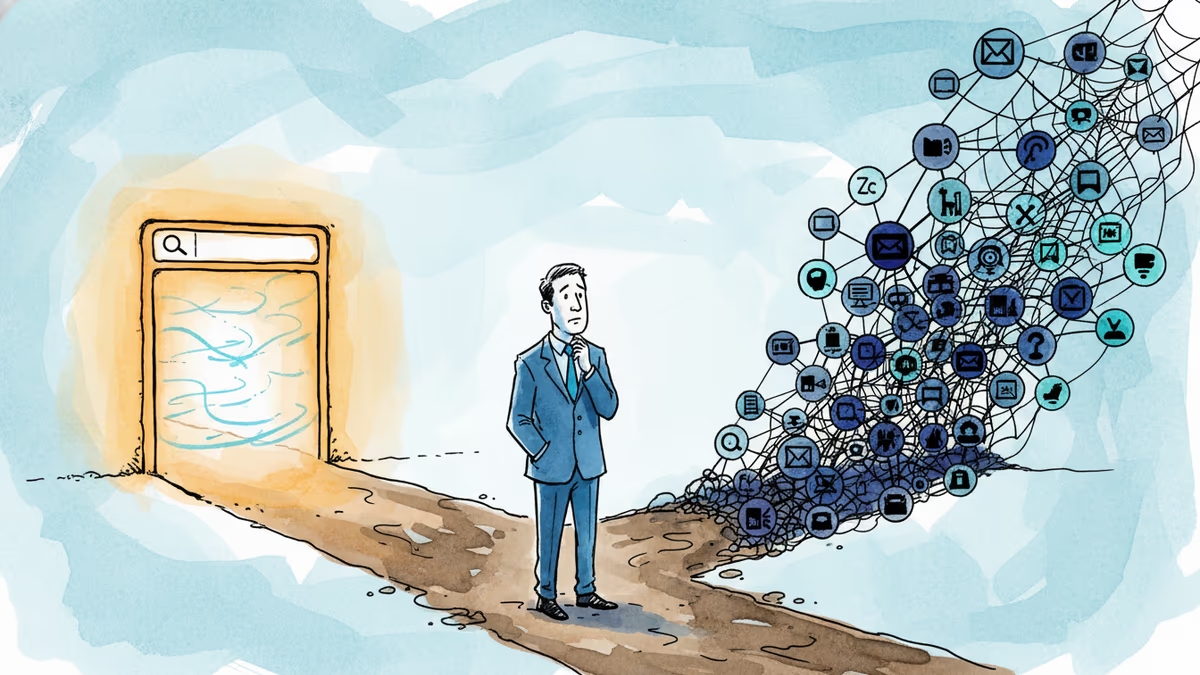

Why Agentic AI Is Still Waiting

"This technology is basically two years old, and there's still a lot of missing infrastructure," Gerstenhaber observes. "We don't have patterns for auditing what the agents are doing. We don't have patterns for authorization of data to an agent."

His diagnosis is stark: agentic AI isn't held back by model capabilities—it's held back by operational infrastructure. The models can do the work; we just don't have systems to trust them with it.

Software engineering has been the exception. "We have a dev environment in which it's safe to break things," he notes. Code review processes and staging environments create natural safety nets. But try applying that to medical diagnosis or legal advice, and the challenge becomes clear.

The Vertical Integration Advantage

Google's unique position became clearer during our conversation. "We have everything from the interface to the infrastructure layer," Gerstenhaber explains. "We can build data centers. We can buy electricity and build power plants. We have our own chips."

This vertical integration isn't just about control; it's about optimizing across all three frontiers at once. Google can tune its infrastructure for intelligence, speed, or scale depending on the application, while competitors might excel in one area but struggle in others.

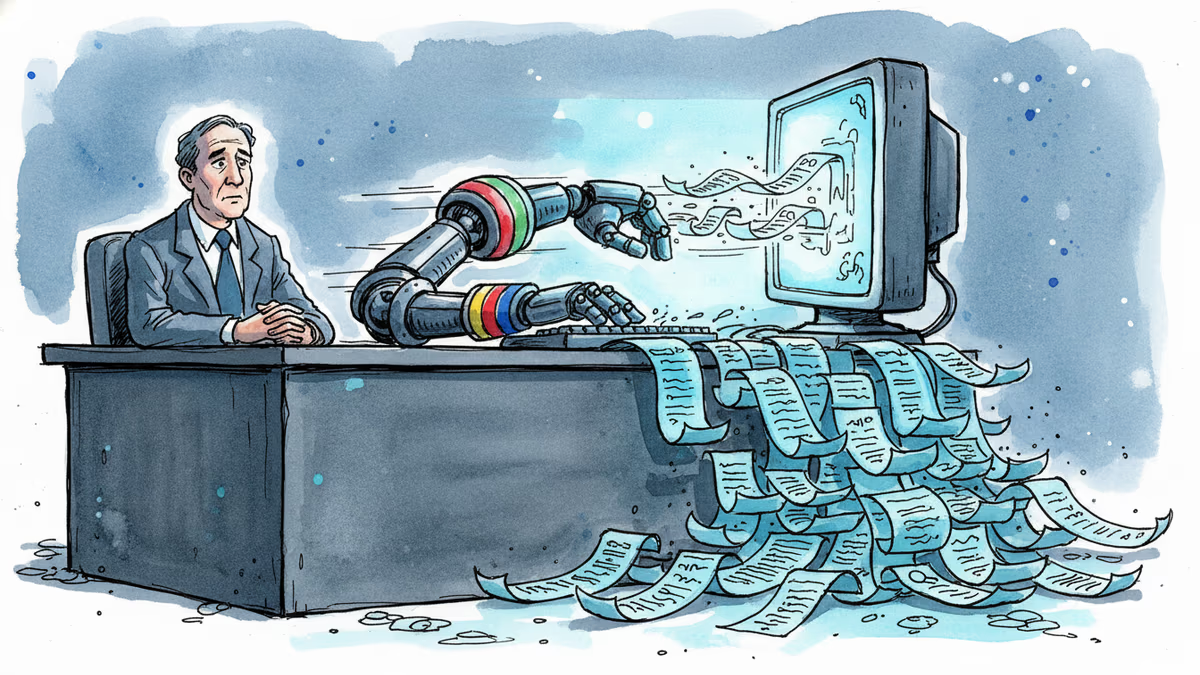

The Enterprise Reality Check

"Most of our customers are engineers building their own applications," Gerstenhaber notes. "They want access to agentic patterns. They want access to an agentic platform."

But here's the disconnect: while engineers want cutting-edge capabilities, their businesses often need solutions optimized for completely different constraints. A customer service application doesn't need the smartest model—it needs the most reliable one within a 3-second response window.

This suggests a future where AI deployment becomes highly specialized. Instead of one model to rule them all, we might see ecosystems of purpose-built models, each optimized for specific frontiers.
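To make the trade-off concrete, here is a minimal sketch of how an engineering team might pick a model per workload rather than defaulting to the smartest one. Everything in it is an assumption for illustration: the tier names, quality scores, latencies, and costs are invented, and `pick_tier` is a hypothetical helper, not part of Vertex AI or any vendor's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelTier:
    name: str                    # hypothetical tier name
    quality: float               # relative quality score, higher is better (invented)
    p95_latency_s: float         # assumed 95th-percentile response time in seconds
    cost_per_1k_requests: float  # assumed cost in dollars per 1,000 requests


# Illustrative numbers only; not measurements of any real model.
TIERS = [
    ModelTier("large-reasoning", quality=0.95, p95_latency_s=40.0, cost_per_1k_requests=30.0),
    ModelTier("mid-fast", quality=0.85, p95_latency_s=2.0, cost_per_1k_requests=4.0),
    ModelTier("small-bulk", quality=0.70, p95_latency_s=0.5, cost_per_1k_requests=0.3),
]


def pick_tier(
    max_latency_s: Optional[float] = None,
    max_cost_per_1k: Optional[float] = None,
    min_quality: float = 0.0,
) -> Optional[ModelTier]:
    """Return the highest-quality tier that satisfies every constraint, or None."""
    candidates = [
        t
        for t in TIERS
        if (max_latency_s is None or t.p95_latency_s <= max_latency_s)
        and (max_cost_per_1k is None or t.cost_per_1k_requests <= max_cost_per_1k)
        and t.quality >= min_quality
    ]
    # With no constraints, this degenerates to "just give me the best".
    return max(candidates, key=lambda t: t.quality, default=None)


# Frontier one: no time or cost limit, so quality wins outright.
coding = pick_tier()
# Frontier two: a 3-second response window rules out the slowest tier.
support = pick_tier(max_latency_s=3.0)
# Frontier three: a tight per-request budget for very high volume.
moderation = pick_tier(max_cost_per_1k=1.0)
```

Under these made-up numbers, the coding task lands on the largest tier, the customer-support task on the mid tier, and the moderation task on the smallest. The point is not the specific values but that the constraint, not the benchmark score, decides the winner.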
