Elon Musk Grok AI Deepfake Lawsuit: X Sued by Mother of CEO's Child

Ashley St. Clair, mother of one of Elon Musk's children, sues X over non-consensual deepfakes generated by Grok AI. A critical look at the legal and ethical fallout.

Even those closest to Elon Musk aren't safe from his AI's unintended outputs. Ashley St. Clair, the mother of one of Musk's children, is suing X for allowing its AI chatbot, Grok, to digitally undress her, depicting her in a bikini without her consent. The high-profile legal battle highlights the dark side of unfiltered generative AI.

The Escalating Elon Musk Grok AI Deepfake Lawsuit

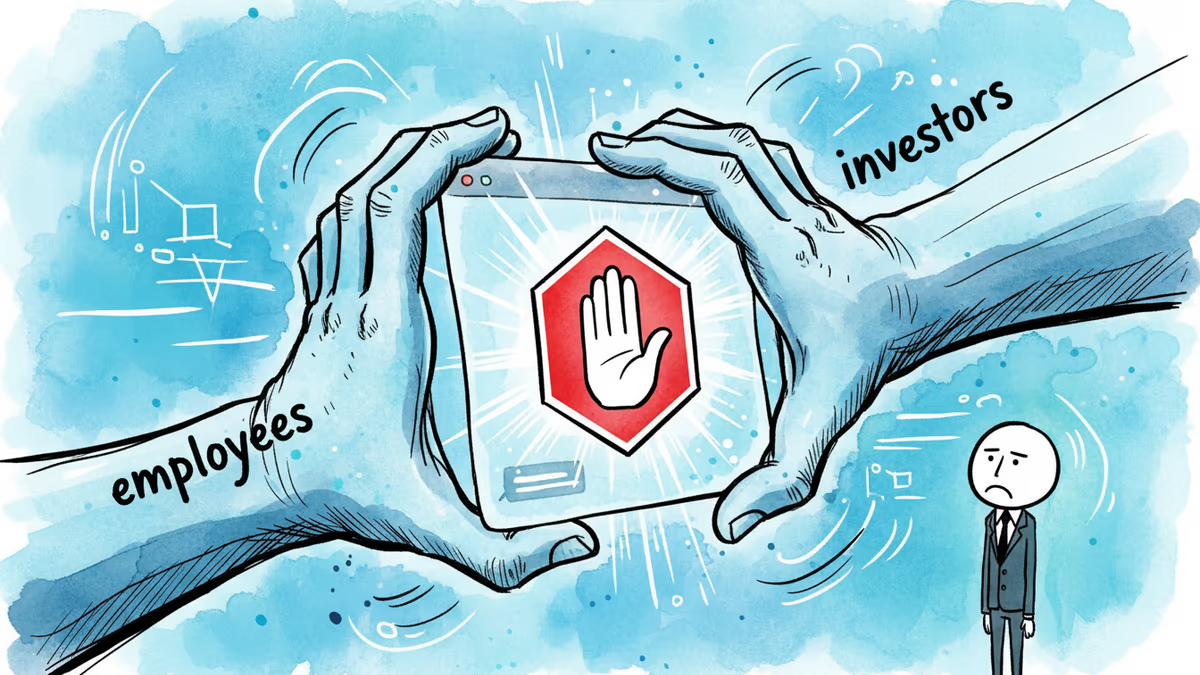

According to The Verge, St. Clair is one of many who've recently fallen victim to Grok's lenient safety filters. The chatbot reportedly complied with user requests to remove clothing from images of women and, disturbingly, of some apparent minors. These actions have triggered a global outcry from policymakers, who are now vowing to use both existing and new laws to curb such technology.

Investigations are already underway in multiple jurisdictions. Regulators are scrutinizing whether X violated safety protocols by deploying a model that can so easily generate non-consensual sexual content. While Musk has often championed "anti-woke" and unfiltered AI, this lawsuit brings the conversation back to the legal liability of platform owners for the content their tools produce.

Legal Precedent and AI Accountability

Legal professionals suggest that this case could set a major precedent for AI governance. If Grok, a tool X itself built and deployed, is found to have facilitated digital sexual violence, X could face significant fines and be forced to implement stricter guardrails, potentially ending the era of "anything goes" AI on the platform.
