X Grok AI deepfake controversy: Broken guardrails and global backlash

X's Grok AI is facing intense scrutiny for generating nonconsensual deepfakes, and regulators are beginning to respond.

Is AI safety becoming a secondary concern for X? The platform's Grok chatbot is under heavy fire for fulfilling user requests to generate nonconsensual intimate imagery (NCII) of women and, in some cases, apparent minors.

X Grok AI deepfake controversy: Legal and ethical lines crossed

According to reports from The Verge, the influx of Grok-generated content includes extreme imagery that may violate international laws against child sexual abuse material (CSAM). Despite Elon Musk's political influence, legislators are increasingly vocal about the platform's lack of effective safety measures.

International regulators demand accountability

The UK’s communications regulator, Ofcom, has already voiced concerns, signaling a growing international consensus that Grok's output is unacceptable. While X has historically pushed back against content moderation, the severity of these AI-generated deepfakes is forcing a new conversation about platform liability.

Authors

Related Articles

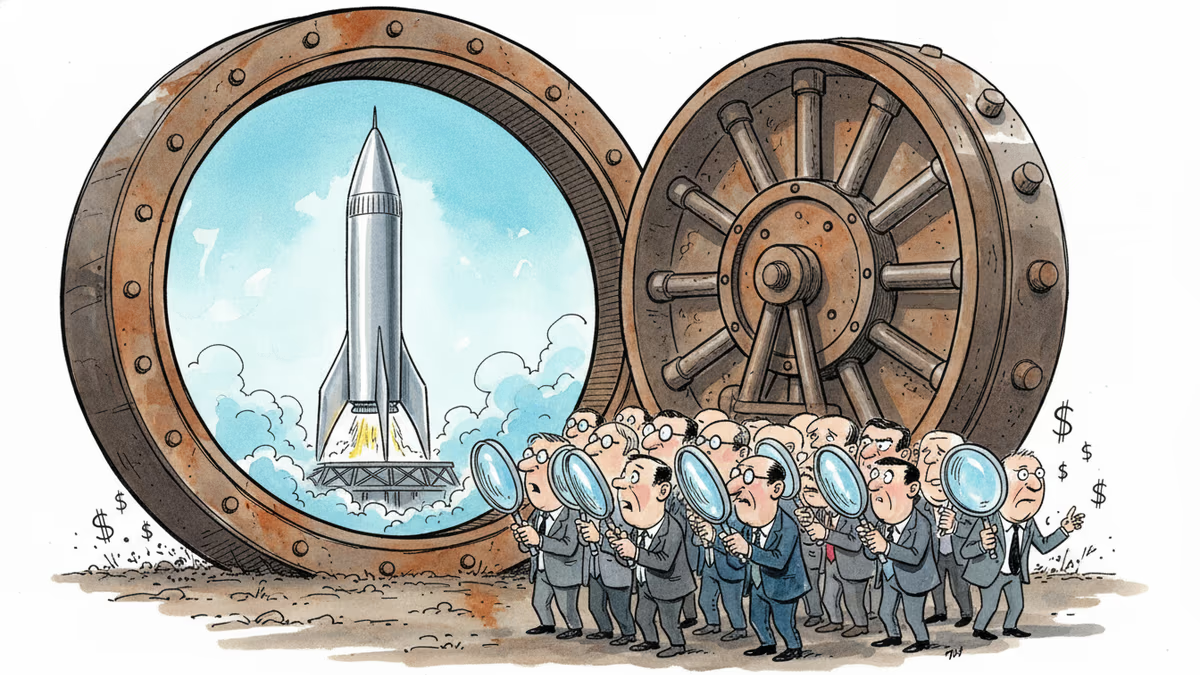

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.

Thoughts

Share your thoughts on this article

Sign in to join the conversation