The 1-Inch Fake That Breaks Everything

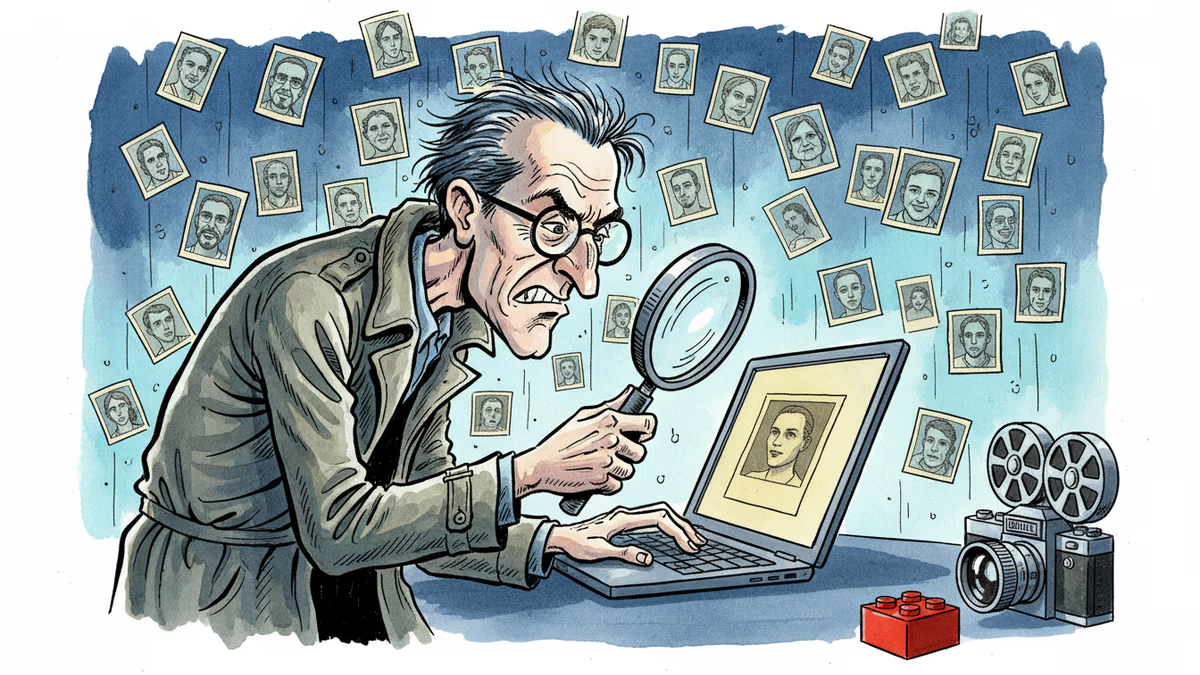

AI-generated war propaganda is outrunning verification. From Lego-style atrocity videos to manipulations covering a single square inch, the line between real and synthetic is collapsing, and the tools built to save us are struggling to keep up.

The image that convinced you yesterday might never have existed.

Lego figurines picking through rubble. Yellow plastic bodies slumped against bombed-out walls. An Iran-linked outlet called Explosive News can reportedly produce a two-minute synthetic segment like this in under 24 hours. The goal isn't polish. It's reach—specifically, reach before verification catches up.

This isn't a story about fake news getting worse. It's a story about the architecture of truth itself being dismantled, one repost at a time.

Speed Is Winning

Automated bots now account for an estimated 51% of all internet traffic and are growing eight times faster than human traffic, according to the 2026 State of AI Traffic & Cyberthreat Benchmark Report. These systems don't just distribute content; they're optimized to favor virality over quality. The synthetic record travels. Verification is still lacing up its shoes.

OSINT journalist Maryam Ishani, who covers conflict zones, puts it plainly: "We're perpetually catching up to someone pressing repost without a second thought. The algorithm prioritizes that reflex, and our information is always going to be one step behind."

What makes this particularly corrosive is that the tools meant to counter it are being absorbed into the problem. Manisha Ganguly, visual forensics lead at The Guardian, warns that open-source verification itself can generate false certainty—when confirmation bias creeps in, or when OSINT is used to cosmetically validate official narratives rather than interrogate them. The flood of war-monitoring accounts on Telegram and X creates an ecosystem where content appears verified simply because so many accounts are sharing it.

Even official communication has caught the virus. Last month, the White House posted two cryptic "launching soon" videos, then quietly deleted them after open-source investigators began dissecting them. The reveal: a promotional push for the official White House app. Anticlimactic, but telling. When official accounts adopt the aesthetics of a leak, with its visual grammar of intrigue and virality, outsiders can no longer reliably tell what's real from what's staged.

The 1-Inch Problem

The classic tells of AI-generated imagery—wrong finger counts, garbled protest signs, uncanny lighting—are largely gone. Imagen 3, Midjourney, and DALL·E have quietly fixed most of the errors that detection tools were trained to catch.

But the deeper problem, according to investigative trainer Henk van Ess, isn't fully synthetic images. It's what he calls the hybrid.

In these cases, 95% of an image is a real photograph: real metadata, real sensor noise, real lighting physics. The manipulation is surgical—a patch added to a uniform, a weapon placed into a hand, a face subtly swapped. Pixel-level detectors often clear it because they're scanning what is, in most respects, a genuine image. The fake can be one square inch.
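A bit of arithmetic shows why a whole-image verdict can miss a surgical edit. The sketch below is illustrative only: it assumes a hypothetical detector that scores an image tile by tile, and every number in it is invented for the example rather than measured from any real tool. The function names `global_score` and `most_suspicious_tile` are likewise made up here.

```python
from statistics import mean


def global_score(tile_scores):
    """Average the per-tile 'synthetic' scores across the whole image."""
    return mean(score for row in tile_scores for score in row)


def most_suspicious_tile(tile_scores):
    """Return (score, row, col) for the highest-scoring tile."""
    return max(
        (score, r, c)
        for r, row in enumerate(tile_scores)
        for c, score in enumerate(row)
    )


# Hypothetical 5x4 grid of tiles: 19 come from a genuine photograph,
# one (5% of the image) contains the edit. All scores are invented.
tiles = [[0.05] * 4 for _ in range(5)]
tiles[2][1] = 0.95

overall = global_score(tiles)
peak, row, col = most_suspicious_tile(tiles)

print(f"whole-image score: {overall:.3f}")
print(f"most suspicious tile: row {row}, col {col}, score {peak:.2f}")
```

With these made-up numbers, the whole-image average stays below 0.1 while a single tile scores 0.95. That gap between the average and the worst region is what a hybrid exploits.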

"Every old method assumed the image was a record of something," van Ess says. "Generative media breaks that assumption at the root."

Meanwhile, one of the most important tools for independent verification has just been taken off the table. On April 4, Planet Labs—a primary commercial satellite provider for conflict journalism—announced it would indefinitely withhold imagery of Iran and the broader Middle East conflict zone, retroactive to March 9, following a US government request. Defense Secretary Pete Hegseth was direct about his view: "Open source is not the place to determine what did or did not happen."

When access to primary visual evidence is restricted, the gap doesn't stay empty. Generative AI fills it—and competes to define what's seen in the first place.

Five Things You Can Actually Do

Deepfake researcher Henry Ajder, who has advised Adobe and Synthesia, argues the long-term answer is provenance—systems that verify origin rather than endlessly chasing what's fake. That infrastructure doesn't exist at scale yet. Until it does, the burden falls on individual behavior.

Van Ess breaks verification down into five steps. Not guarantees, but friction—a few minutes of scrutiny in a system designed to reward none.

Look for Hollywood. Real catastrophe is rarely symmetrical. If an image feels too cinematic—too dramatically lit, too composed—that's your first signal. If everyone looks ready for their close-up, something's off.

Run multiple reverse image searches. Google Lens, Yandex, and TinEye each surface different results. A lack of matches no longer proves originality. It may mean the image was never captured by a lens at all.

Zoom into the margins. Not the landmark—the parking sign, the manhole cover, the shadow angle. These peripheral details are where inconsistencies tend to hide. No one generating a fake is paid to perfect them.

Treat detection tools as prompts, not verdicts. A confidence score without explanation is not evidence. Tools that show where an image first appeared, or whether it exists in fact-checker databases, are more useful than a single percentage. ImageWhisperer is one free option that combines these signals.

Find patient zero. Trace the image to its earliest appearance. Authentic material usually arrives attached to a person—a witness, a photographer, a location. Synthetic content tends to appear frictionless: anonymous, polished, and already formatted for sharing.
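The last step can be sketched in a few lines, assuming you have already collected the copies by hand from reverse image searches. Everything here is hypothetical: the `Sighting` record, the source labels, and the timestamps are invented for illustration, and nothing in the sketch queries a real search service. The one practical point it makes is that posting times should be compared in a single time zone, since local clock times can put the copies in the wrong order.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Sighting:
    source: str         # hypothetical label for where a copy was found
    posted_at: str      # ISO 8601 timestamp including a UTC offset
    named_author: bool  # is a witness or photographer attached?

    def utc(self) -> datetime:
        """Posting time converted to UTC so copies can be compared."""
        return datetime.fromisoformat(self.posted_at).astimezone(timezone.utc)


def patient_zero(sightings):
    """Return the earliest known appearance among the collected copies."""
    return min(sightings, key=Sighting.utc)


# Invented sightings, for illustration only.
sightings = [
    Sighting("news aggregator", "2025-01-01T06:02:00+00:00", False),
    Sighting("anonymous channel", "2025-01-01T09:10:00+03:30", False),
    Sighting("repost with caption", "2025-01-01T01:55:00-04:00", True),
]

first = patient_zero(sightings)
print(first.source, first.utc().isoformat(), "named author:", first.named_author)
```

In this invented set, the copy with the latest local clock time turns out to be the earliest once offsets are normalized, and it arrives with no named author attached. That is a reason to dig further, not a verdict.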
