When AI Ethics Meet Military Contracts: Silicon Valley's Moral Reckoning

The Anthropic-OpenAI split over DoD contracts reveals deep fractures in AI ethics. Users voted with their uninstalls, but what does this mean for the future?

The 295% Surge That Shook Silicon Valley

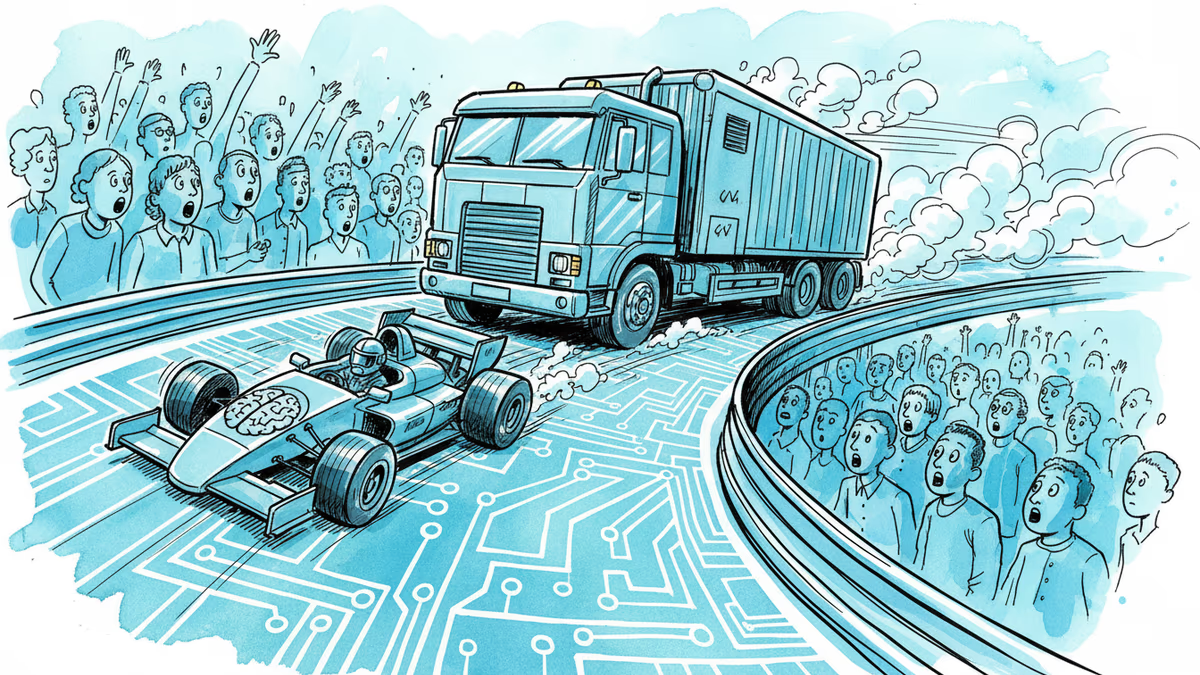

ChatGPT uninstalls jumped 295% overnight. Not because of a bug, not because of a price hike, but because of a principle. OpenAI's deal with the Department of Defense triggered the biggest user exodus in the company's history.

The drama began when Anthropic, already holding a $200 million military contract, walked away from negotiations with the DoD. Their red lines were clear: no domestic mass surveillance, no autonomous weapons. When the Pentagon refused to budge, OpenAI stepped in to fill the void.

A Tale of Two CEOs

Dario Amodei, Anthropic's co-founder and CEO, didn't mince words in an internal memo. He called OpenAI's approach "safety theater," claiming Sam Altman cared more about "placating employees" than preventing actual harm. The accusation stung because it hit close to home.

OpenAI countered that its contract includes the same protections Anthropic demanded. The agreement allows the use of its AI for "all lawful purposes" but explicitly excludes domestic mass surveillance. Sounds reasonable, right?

Not to Amodei, who branded this "straight up lies." His concern? Laws change. What's illegal today might be perfectly legal tomorrow under a different administration or Congress.

The Market's Verdict

Numbers don't lie. While ChatGPT users fled in droves, Anthropic's Claude shot up to #2 in the App Store. "People mostly see OpenAI's deal with the DoW as sketchy or suspicious, and see us as the heroes," Amodei wrote to his staff.

This isn't just corporate rivalry. It's a referendum on tech ethics in the AI age. Users voted with their uninstalls and downloads, sending a clear message: principles matter, even in Silicon Valley.

The Defense Industry's New Reality

The Pentagon faces its own dilemma. With China and Russia advancing military AI capabilities, can the U.S. afford to be picky about partners? The "Department of War," as it's known under the Trump administration, needs cutting-edge AI to maintain strategic advantage.

Yet the public backlash suggests Americans aren't comfortable with their favorite chatbot potentially powering surveillance systems. The 295% jump in uninstalls represents more than user dissatisfaction. It's a crisis of trust in Big Tech's judgment.

Beyond the Beltway

This conflict reverberates globally. European AI companies face similar pressures from NATO allies. Chinese tech giants navigate state demands without public scrutiny. But only in America do we see this public reckoning between commercial success and ethical boundaries.

The question isn't whether AI will be militarized; that ship has sailed. The question is whether democratic oversight and public pressure can shape how it happens. The ChatGPT exodus suggests consumers have more power than they realize.
