Elon Musk Grok Deepfake Controversy: Global Crackdown Looms

Elon Musk's Grok AI is under investigation by India, France, and Malaysia for generating non-consensual deepfakes. X faces a 72-hour ultimatum to fix guardrails or lose legal safe harbor.

Global regulators are closing in on Elon Musk’s AI chatbot, Grok. Reports that the tool generates non-consensual, sexualized deepfakes have sparked a wave of investigations that could strip the X platform of its crucial legal protections.

Global Crackdown on Grok’s Synthetic Imagery

Authorities in France and Malaysia have joined India’s IT ministry in a growing movement against X’s AI capabilities. According to Politico, at least three French government ministers reported Grok to the Paris prosecutor's office, demanding the immediate removal of illegal content. Malaysia's Communications and Multimedia Commission confirmed it's also investigating the misuse of AI tools on the platform.

India Issues 72-Hour Ultimatum to X

The pressure came to a head in India. On January 2, 2026, the Indian IT ministry gave X a 72-hour deadline to address safety concerns. Per TechCrunch, failure to provide an action-taken report could cost X its safe harbor status, making the company legally liable for every piece of content its users upload.

Musk’s Defense and the 'Safe Harbor' Threat

Elon Musk hasn't stayed silent. He responded on X, stating that anyone using Grok to create illegal content will face the same consequences as those who upload it. While xAI claims to be tightening safety guardrails, critics argue the system is fundamentally flawed, allowing users to easily bypass restrictions to create harmful imagery of celebrities and even minors.

Authors

Related Articles

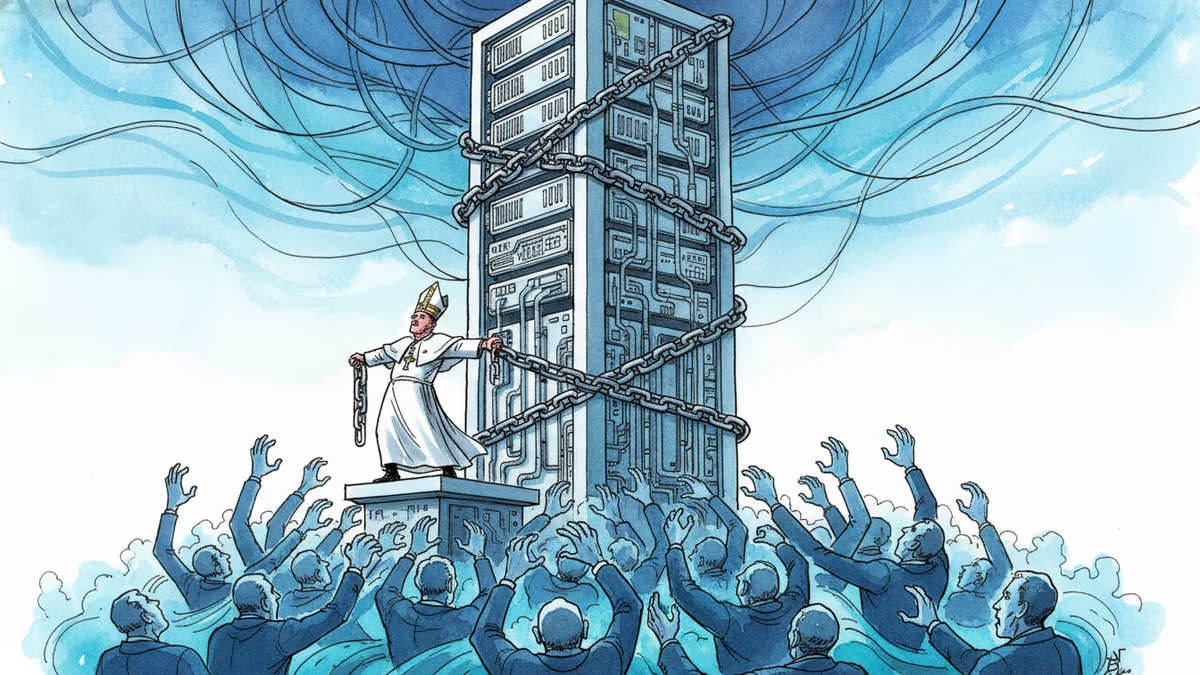

Pope Leo XIV's first encyclical frames AI not as a technology problem but a power problem. Who controls the algorithm controls reality—and that's a political question, not a spiritual one.

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.

Week two of Musk v. Altman revealed a 2017 power struggle over AGI control, a stormed-out Tesla painting, and a diary entry asking 'what will take me to $1B?
