Claude's Secret Feature: An AI That Works While You Sleep

A surprise leak of Anthropic's Claude Code source code revealed 'Kairos'—a dormant background AI agent designed to act before you even ask. Here's what it means.

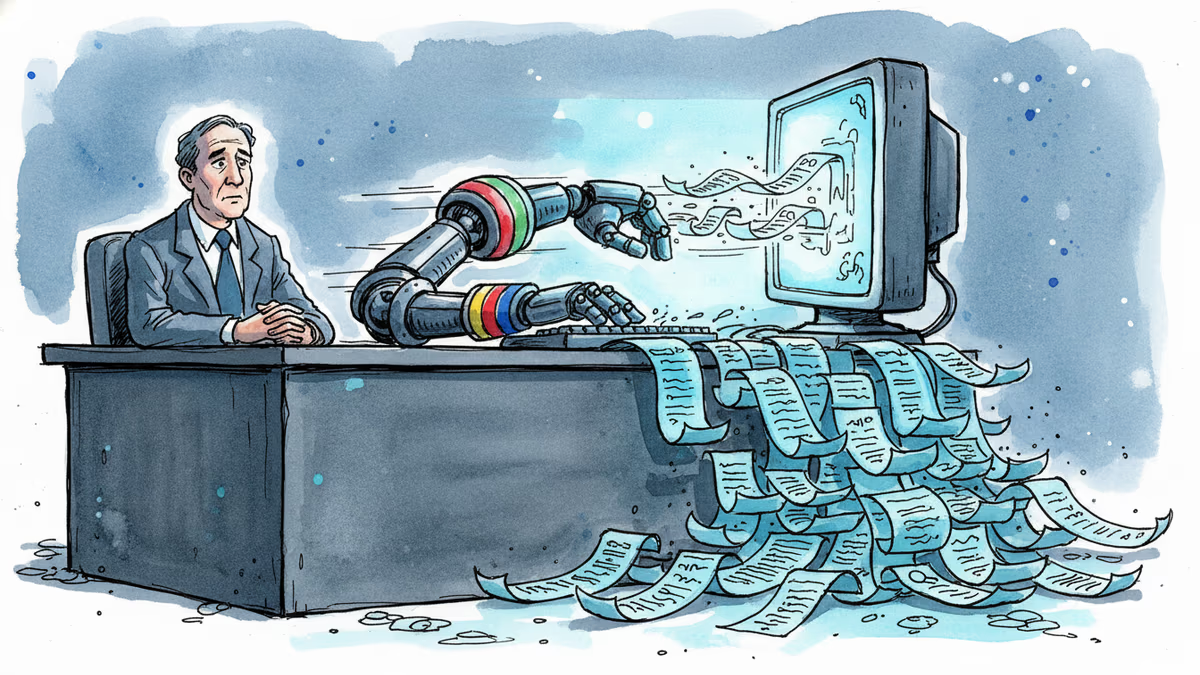

You closed the laptop. The AI didn't.

On March 31st, the source code for Anthropic's Claude Code leaked unexpectedly—512,000+ lines across 2,000+ files. Developers combing through the dump found something that wasn't supposed to be public yet: a dormant feature called Kairos. It's not live. But what it's designed to do says a great deal about where AI assistants are headed.

What Kairos Actually Does

Kairos is a persistent background daemon—think of it as an AI that keeps running even after you've closed the terminal window and walked away from your desk.

The mechanism is straightforward but significant. The system uses periodic <tick> prompts to continuously evaluate whether new actions are needed. A PROACTIVE flag is designed for "surfacing something the user hasn't asked for and needs to see now." In plain terms: the AI decides to interrupt you, not the other way around.
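To make that loop concrete, here is a minimal sketch of a tick-driven agent. Everything in it is an assumption for illustration: `Decision`, `run_ticks`, and `demo_evaluate` are hypothetical names, and the leaked code's real prompt format and flag handling are not reproduced here. A real daemon would also wait between ticks; the sketch leaves that out so it runs instantly.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# The article names a periodic <tick> prompt; its exact contents are not shown.
TICK_PROMPT = "<tick>"


@dataclass
class Decision:
    action: Optional[str]    # None means "nothing to do on this tick"
    proactive: bool = False  # hypothetical stand-in for the PROACTIVE flag


def run_ticks(evaluate: Callable[[str, int], Decision], ticks: int) -> list[str]:
    """Send the tick prompt `ticks` times and collect messages flagged proactive."""
    surfaced = []
    for n in range(ticks):
        decision = evaluate(TICK_PROMPT, n)
        # Only interrupt the user when the agent has something AND flags it.
        if decision.action is not None and decision.proactive:
            surfaced.append(decision.action)
    return surfaced


def demo_evaluate(prompt: str, n: int) -> Decision:
    # Toy policy (assumption): the third tick notices a failing build.
    if n == 2:
        return Decision(action="CI build failed on main", proactive=True)
    return Decision(action=None)


print(run_ticks(demo_evaluate, ticks=5))
```

The point of the sketch is the inversion of control: the loop, not the user, decides when a message is shown.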

A prompt hidden behind a disabled KAIROS flag in the code lays out the ambition directly: the system is meant to "have a complete picture of who the user is, how they'd like to collaborate, what behaviors to avoid or repeat, and the context behind the work the user gives you." A file-based memory system keeps that picture intact across sessions—even after restarts.
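A file-based memory of this kind can be sketched in a few lines. This is an illustrative assumption, not the leaked implementation: the JSON layout, the `memory.json` filename, and the `load_memory` and `remember` helpers are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


def load_memory(path: Path) -> dict:
    """Read the stored picture of the user, or start empty on first run."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {}


def remember(path: Path, key: str, value: str) -> None:
    """Update one fact and write the whole memory back to disk."""
    memory = load_memory(path)
    memory[key] = value
    path.write_text(json.dumps(memory, indent=2), encoding="utf-8")


with tempfile.TemporaryDirectory() as tmp:
    memory_file = Path(tmp) / "memory.json"  # hypothetical location

    # "Session one": the agent records what it has learned.
    remember(memory_file, "collaboration_style", "prefers short summaries")
    remember(memory_file, "avoid", "force-pushing to main")

    # "Session two": nothing is held in RAM, so the file alone restores the picture.
    restored = load_memory(memory_file)
    print(restored["avoid"])
```

Because the state lives in a file rather than in the running process, it survives a restart, which is the property the article describes.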

This isn't a chatbot waiting for input. It's an agent building a persistent model of you.

Why This Matters Right Now

It's no accident that Anthropic is building this now. The AI coding tools market is mid-consolidation. GitHub Copilot, Cursor, Replit, and Claude Code itself are all fighting for developer mindshare, and the competitive edge is shifting from "how good is the autocomplete" to "how deeply does this tool understand my workflow."

Kairos is Anthropic's answer to that question, and it's a meaningful one. A background agent that remembers context, proactively monitors for issues, and surfaces insights before you think to ask for them isn't just a productivity tool. It's a fundamentally different relationship between developer and software. OpenAI's operator/agent framework and Google's Project Astra are pointing in the same direction. Kairos confirms Anthropic is on the same road.

For enterprise customers—which Anthropic has been aggressively courting—the value proposition is obvious. Code reviews, security monitoring, CI/CD pipeline oversight: tasks that currently require human attention at odd hours could be handed off to an agent that never sleeps. That's not a marginal improvement. That's a different staffing model.

The Other Side of the Coin

Not everyone reading the leaked code was impressed.

Privacy researchers flagged the obvious tension immediately. A system designed to build "a complete picture of who the user is" and retain that picture across sessions is, by definition, a continuous data collection mechanism. The fact that it operates in the background—without the user actively engaging it—raises questions that Anthropic hasn't yet answered publicly: Where is this data stored? Who can access it? Can it be deleted?

For enterprise security teams, the concern is more concrete. Most corporate environments have strict policies about what software can run persistently and what data it can retain. A background AI agent that learns behavioral patterns and stores them in files sits uncomfortably close to the definition of monitoring software—regardless of intent.

Regulatory exposure is real too. The EU's AI Act imposes transparency obligations on high-risk AI systems. Whether a persistent, proactive agent that models user behavior falls under that umbrella is an open legal question. In the US, where AI regulation remains fragmented, the ambiguity cuts differently—but it doesn't disappear.

It's worth noting that Kairos is disabled. Anthropic hasn't announced it, hasn't documented it publicly, and hasn't made any commitment to ship it. The feature may never launch in its current form. But the design philosophy embedded in the code is already shaping the product decisions being made.
