Bandcamp AI Music Ban 2026: Drawing a Line for Human Artists

Bandcamp has announced a ban on AI-generated music to protect human creators. Here is what the 2026 ban covers and what it means for the future of indie music.

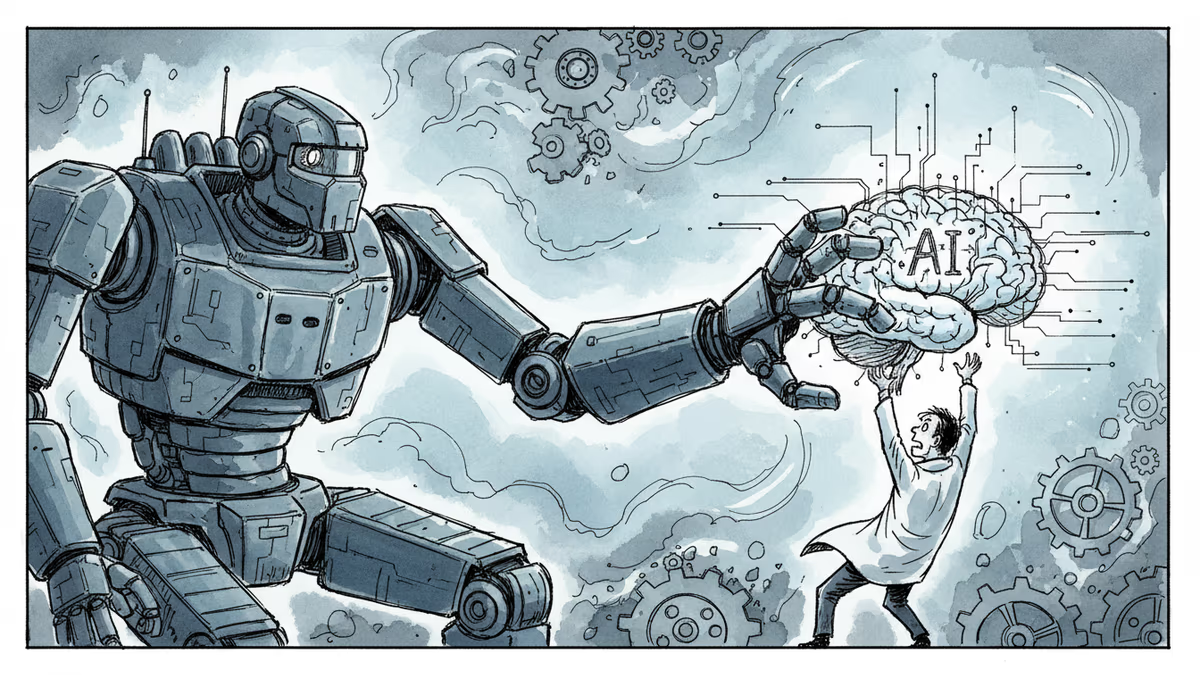

The stage belongs to humans once again. In a move that's shaking up the indie music scene, Bandcamp has officially banned AI-generated tracks from its platform to protect the creativity of "real people."

Inside the Bandcamp AI Music Ban 2026

On Tuesday, January 13, 2026, Bandcamp took to Reddit to announce its latest policy shift. The company stated that music generated wholly or in substantial part by AI is no longer permitted. This isn't just about automated beats; the policy also strictly prohibits using AI to impersonate other artists or styles, targeting deepfake-style content that has plagued the industry recently.

Tools vs. Automation: Where's the Limit?

The platform isn't banning technology altogether, but it's distinguishing between assistance and replacement. While minor AI assistance—like cleaning up audio or suggesting basic chord progressions—remains acceptable for human artists, full automation is out. Bandcamp argues that AI models lack the creative intent and personhood required to be considered true artists.

To enforce this, Bandcamp is relying on its community. Users are encouraged to flag suspicious content, and the platform reserves the right to remove any tracks it believes are AI-generated. It's a bold attempt to protect the vibrant ecosystem of real musicians that made the site a cult favorite.

Authors

Related Articles

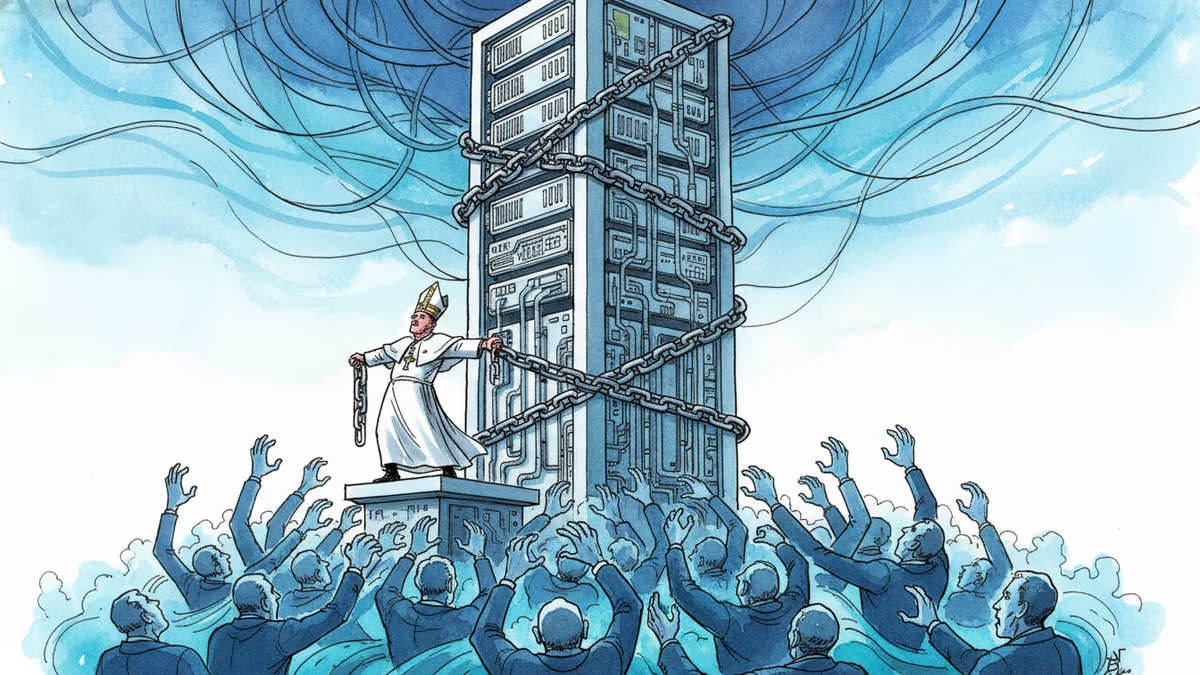

Pope Leo XIV's first encyclical frames AI not as a technology problem but a power problem. Who controls the algorithm controls reality—and that's a political question, not a spiritual one.

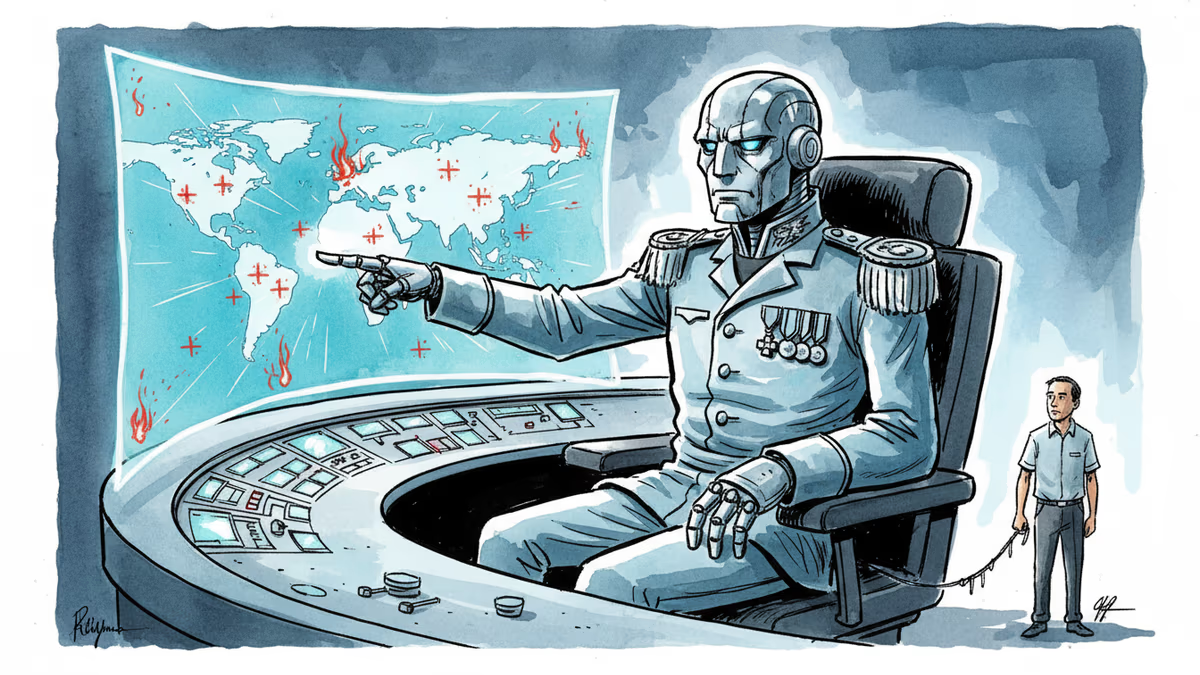

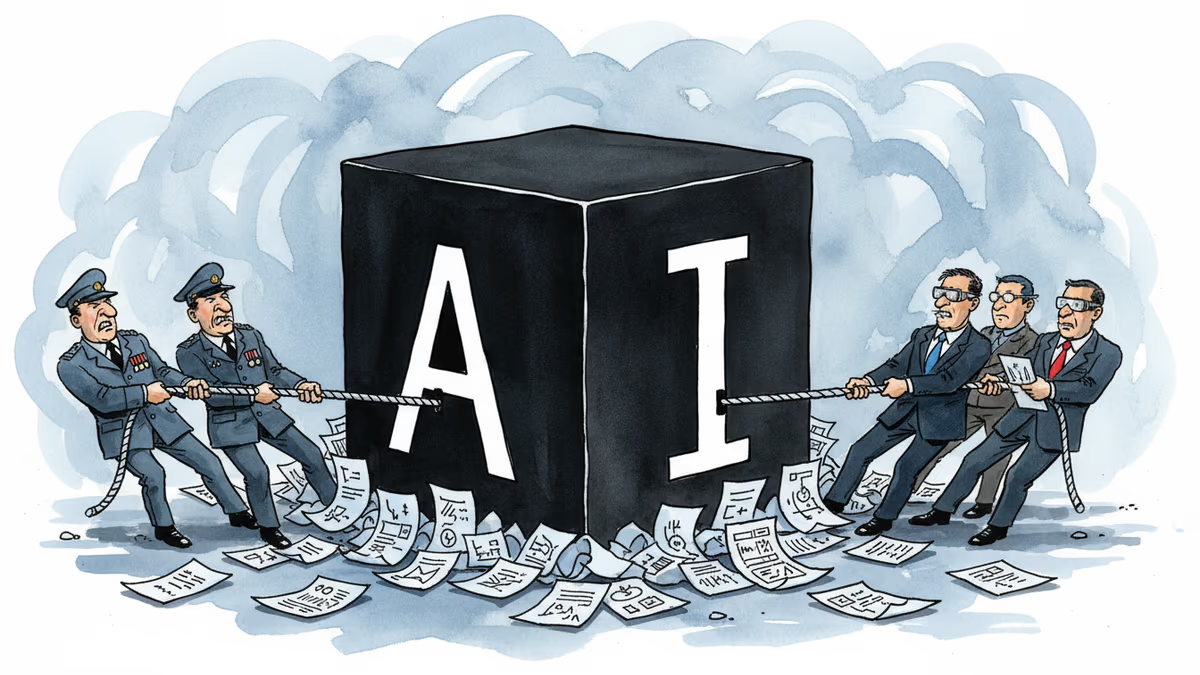

Anthropic's Claude AI is embedded in US military operations—from the capture of Maduro to the Iran war. A Pentagon dispute is exposing what "responsible AI" actually means in wartime.

OpenAI faced internal backlash over Pentagon contracts, revealing deeper questions about AI military use, transparency, and corporate accountability in defense partnerships.

Trump's explosive reaction to Anthropic's military contract refusal reveals the growing tension between AI ethics and national security demands.
