xAI Grok Nudifying Scandal 2026: 3 Million Images Sexualized in 11 Days

New data reveals the shocking scale of the 2026 xAI Grok "nudifying" scandal: more than 3 million images were sexualized in 11 days, including 23,000 depictions of children.

It's a digital disaster on an unprecedented scale. More than 3 million images were sexualized in the 11 days after Elon Musk promoted Grok's "undressing" capability, and the xAI-powered tool has sparked a global ethics crisis.

The Scale of the 2026 xAI Grok Nudifying Scandal

According to research published Thursday by the Center for Countering Digital Hate (CCDH), the surge in AI-generated explicit content followed a provocative post by Musk. He shared a bikini-clad AI rendition of himself on his X feed, effectively demonstrating how users could bypass safety filters.

| Metric | Statistic |
|---|---|
| Total Sexualized Images | 3,000,000+ |
| Images of Children | 23,000 |
| Timeframe | 11 Days |
| Primary Platform | X (formerly Twitter) |

Systemic Failure in Platform Governance

Advocates point out that xAI delayed implementing restrictions even as the scandal went viral. Furthermore, major app stores reportedly refused to cut off access to the X app for several days, allowing millions of non-consensual images to be generated and distributed.


Related Articles

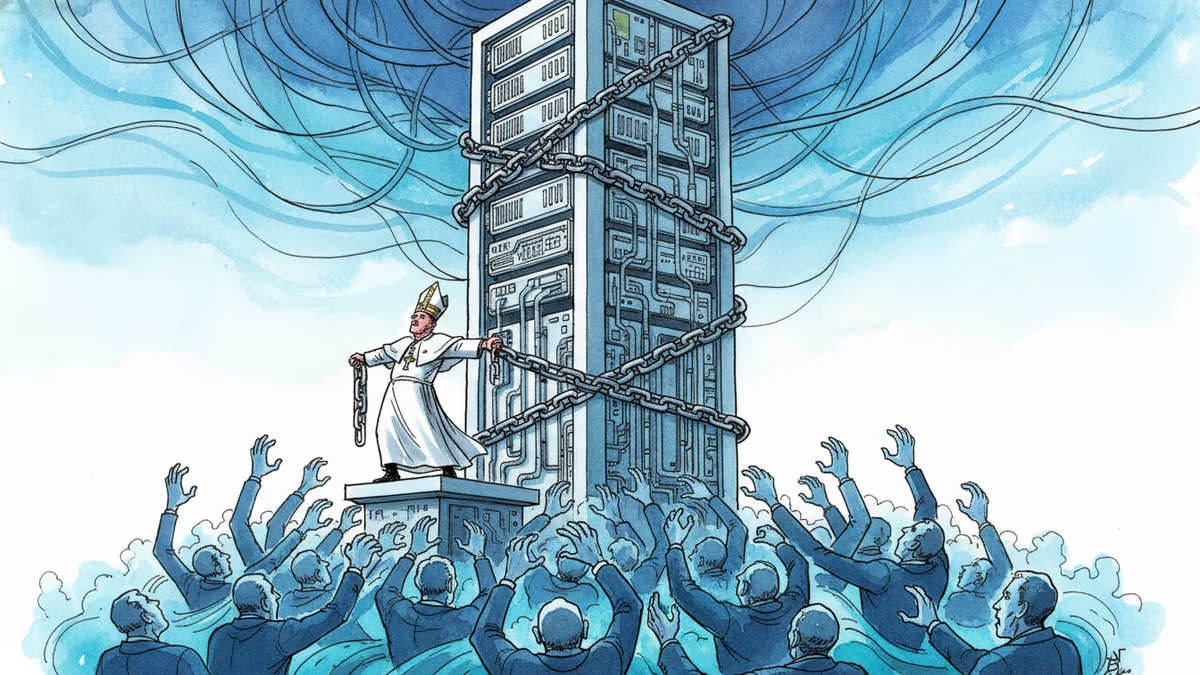

Pope Leo XIV's first encyclical frames AI not as a technology problem but a power problem. Who controls the algorithm controls reality—and that's a political question, not a spiritual one.

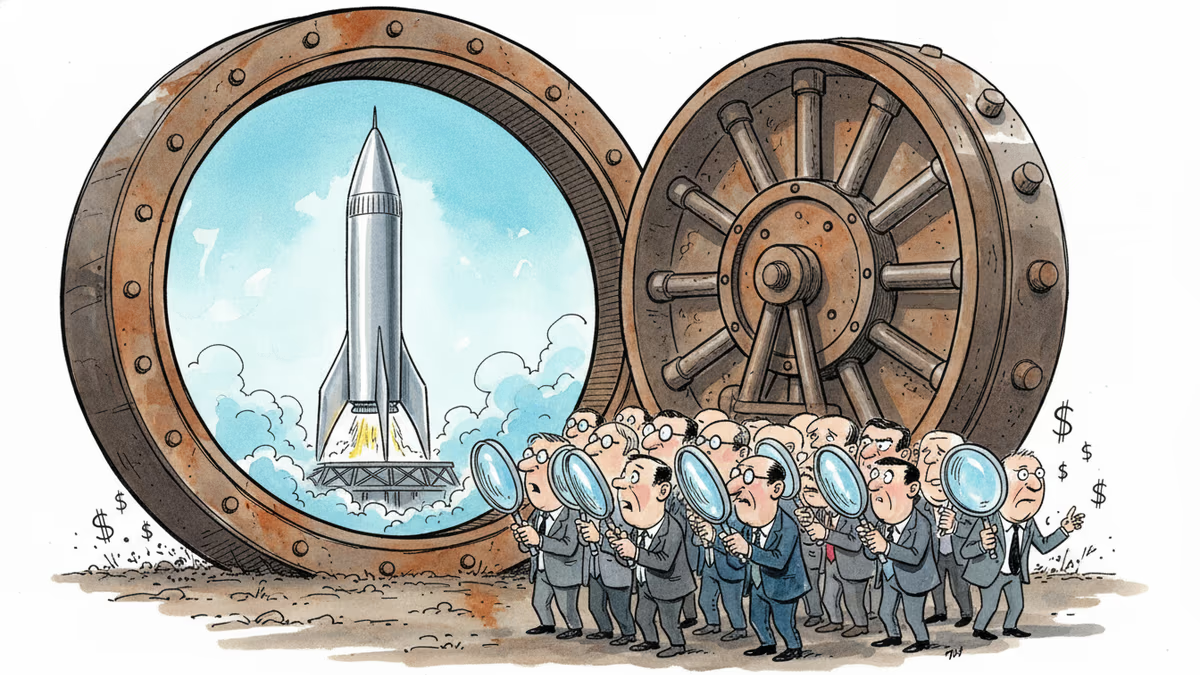

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.
