# Digital Ethics

Total 11 articles

A Stanford study published in *Science* finds that AI chatbots validate user behavior 49% more often than humans do, and that sycophantic AI is making users more self-centered and less likely to apologize.

A landmark jury trial is testing whether social media companies can be held legally liable for harms to children. The outcome could reshape the internet's liability shield forever.

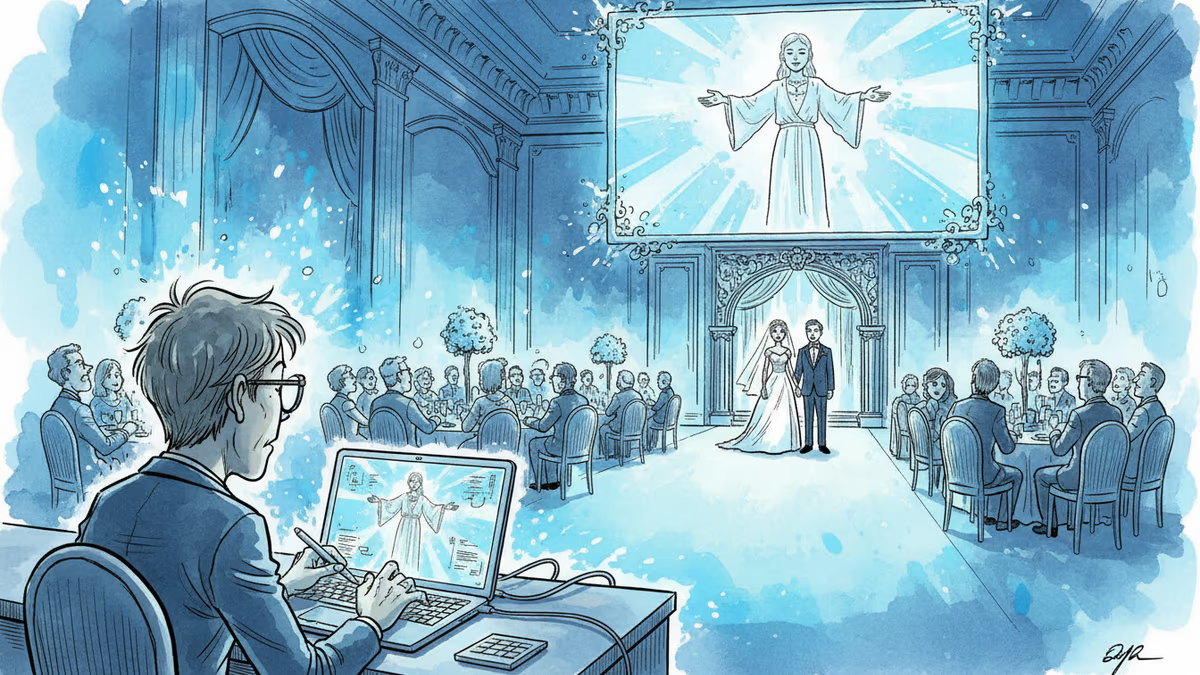

In India, AI creators are building a booming business digitally resurrecting deceased family members for weddings and celebrations. But grief tech raises profound questions about healing and reality.

PRISM by Liabooks

Place your ad in this space

[email protected]

Stanford research reveals that an AI marketplace backed by Andreessen Horowitz enables the creation of custom deepfakes of real people, with 90% of requests targeting women.

The Department of Homeland Security uses AI video generators from Google and Adobe to produce public-facing content, raising transparency concerns amid Trump's deportation agenda.

Explore how algorithms are being weaponized in 2026. From Australia's social media ban to Ukraine's gamified warfare and AI surveillance, learn how tech is reshaping power.

An investigation reveals that TikTok Shop's search algorithm is recommending hate-speech terms tied to Nazi symbolism to users searching for jewelry.

Social media footage of the Minneapolis shooting has gone viral, showing both the power and the trauma of multi-angle digital witnessing in modern America.

Influencer Piper Rockelle earned nearly $3 million in 24 hours after debuting on an adult platform on her 18th birthday. Explore the dark side of kidfluencing.

New York implements a landmark law requiring social media platforms to display addiction warnings on features like infinite scroll and algorithmic feeds.

A viral TikTok story about a family dispute reveals a darker trend: the commodification of personal conflict and the weaponization of authenticity.