Butterfly Clips in the Hallway: Big Tech Faces Its Tobacco Moment

A landmark jury trial is testing whether social media companies can be held legally liable for harms to children. The outcome could reshape the liability shield that has protected internet platforms for decades.

About a dozen parents stood in a dim courthouse hallway in February, clutching paper lottery tickets, waiting to find out if they'd get a seat inside. On their coats and bags: butterfly clips. Not flowers, not photos — just small, quiet symbols chosen deliberately so as not to sway the jury. The jury that would decide whether the platforms they blame for their children's deaths could be held legally responsible.

What's Actually on Trial

This isn't a criminal case, and no executive is facing prison. What's being tested in a U.S. courtroom is something arguably more consequential for the long term: whether Meta, TikTok, Snapchat, and other platforms can be sued for the way their algorithms are designed — not just for specific pieces of harmful content.

For decades, Section 230 of the Communications Decency Act has functioned as a near-impenetrable legal shield for platforms. It says that online services generally can't be held liable for content posted by their users. But the plaintiffs in this case are making a different argument: they're not suing over content. They're suing over product design. The claim is that algorithmically recommending harmful content to vulnerable teenagers, and engineering feeds to maximize engagement at the expense of wellbeing, makes the platform itself a defective product, which is a product liability question rather than a free speech issue.

This trial is one of the first of its kind to reach a jury. Across the U.S., thousands of similar lawsuits have been filed. What happens in this courtroom could set the template for all of them.

The Tobacco Playbook, Revisited

The legal strategy here isn't new; it's borrowed. For years, tobacco companies successfully argued that smoking was a personal choice. It took internal documents proving executives knew about addiction and cancer risks to shift the legal tide. Meta's own internal research, leaked by whistleblower Frances Haugen in 2021, showed the company was aware Instagram was harmful to teenage girls' mental health. Those documents are now Exhibit A in the court of public opinion and, increasingly, in actual courts.

The plaintiffs' lawyers are leaning hard into this framing: these are not neutral platforms. They are products, engineered for compulsion, deployed on children.

Platforms push back with a constitutional argument. Deciding what content to recommend, they say, is an editorial act — protected under the First Amendment. Force a platform to change its algorithm, and you're effectively compelling speech. It's a legally serious argument, and courts have split on it.

Who's Watching, and Why It Matters to Them

Parents and advocacy groups see this as a generational reckoning. To them, the tobacco comparison isn't hyperbole; it's a roadmap. If liability can be established, the economics of building addictive products for teenagers change overnight.

The platforms themselves have been quietly repositioning. Meta announced default restrictions on teen accounts. TikTok introduced screen time limits. Critics read these moves less as genuine reform and more as litigation insurance — demonstrating good faith before a jury.

Advertisers are the quieter stakeholders. Teen-targeted advertising is a significant revenue stream across all major platforms. If courts begin treating algorithmic amplification as a product liability issue, the entire business model of engagement-driven advertising faces scrutiny.

Mental health researchers offer a note of caution on the legal framing. The correlation between heavy social media use and adolescent mental health decline is well documented. But legal causation (proving that this platform caused this harm to this child) is a far harder standard to meet in a courtroom. The science is suggestive; the law demands specificity.

Regulators in Europe are watching with particular interest. The EU's Digital Services Act already imposes risk assessment obligations on large platforms regarding minors. A U.S. liability verdict would give European regulators significant political momentum to tighten enforcement.

The Bigger Shift Underneath

For most of the internet's history, platforms have operated under an implicit bargain: they provide the infrastructure, users provide the content, and liability lives with the users. That bargain was written into law in 1996, when the internet was a different place — before algorithmic feeds, before infinite scroll, before A/B-tested notification systems designed to pull teenagers back to their screens at 2 a.m.

The question this trial is really asking is whether a legal framework designed for bulletin boards still makes sense for systems that actively shape what billions of people see, feel, and do every day.

Authors

Related Articles

Apple names John Ternus, its hardware engineering chief, as the next CEO. The shift from operator to product person signals where Apple thinks its next decade of growth will come from — and raises real questions about what comes next.

After 14 years and a run that turned Apple into a $4 trillion company, Tim Cook steps down as CEO. Hardware chief John Ternus takes over September 1. Here's what changes—and what doesn't.

Florida's AG is investigating OpenAI over a campus shooting, child safety risks, and national security concerns. What it means for AI regulation in America.

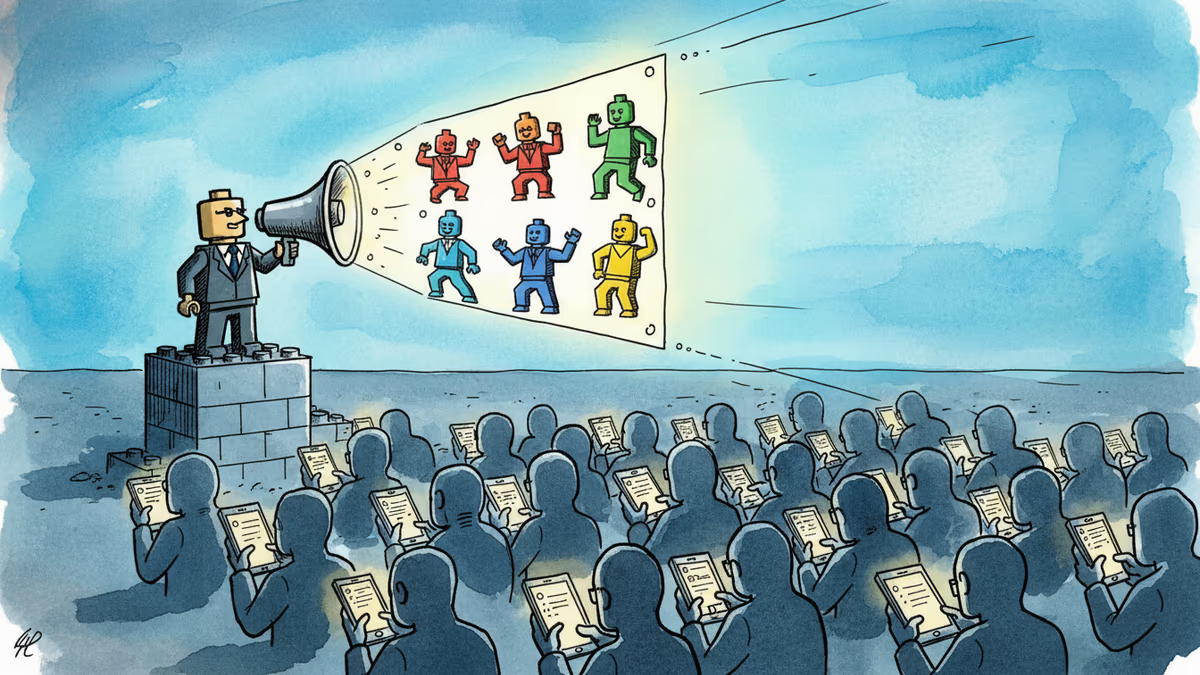

A small pro-Iran team is racking up millions of views with AI-generated Lego videos that mock Trump — and Americans are sharing them. What does that tell us about information warfare?

Thoughts

Share your thoughts on this article

Sign in to join the conversation