The 10-Year Promise Finally Becomes Reality

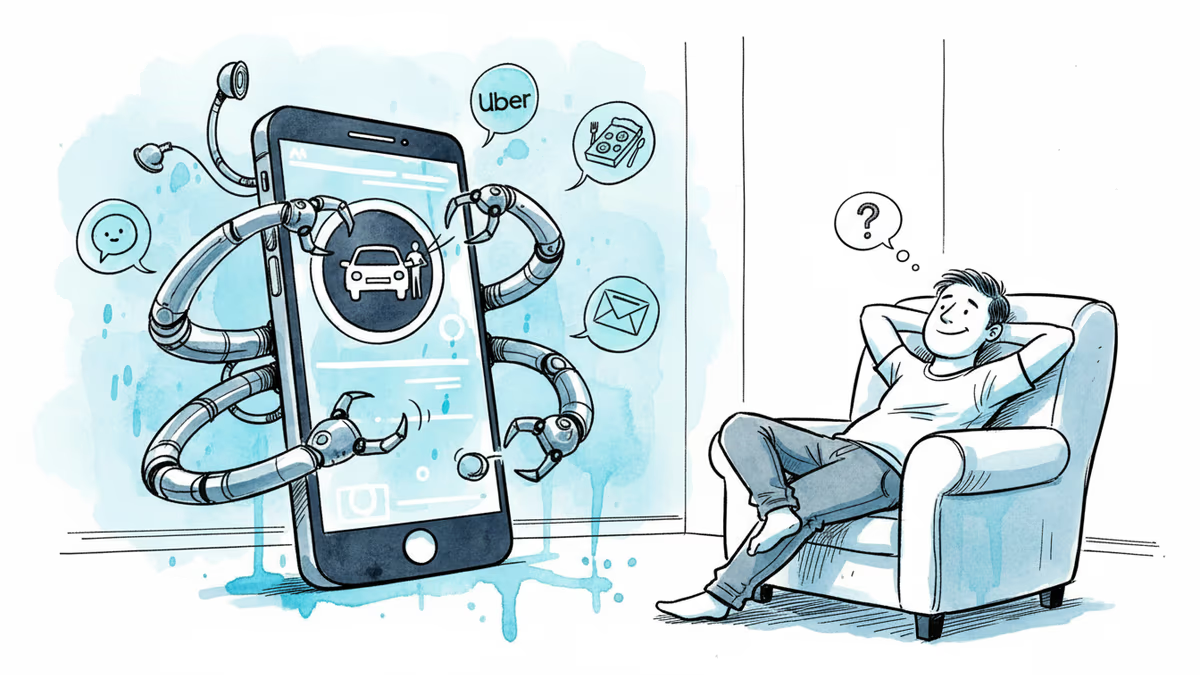

Google and Samsung unveil Gemini Task Automation - from calling Ubers to ordering food with voice commands. Is this time really different?

A decade ago, Google and Apple made bold promises about voice assistants handling tasks on your behalf. In practice, asking Siri to "get me an Uber" just opened the app. Google claimed you could "order my usual" at Starbucks with Assistant, but the experience was so clunky that Google eventually scrapped the feature entirely.

Now, in the age of large language models that actually understand natural language, those same promises are back on the table. At Samsung's Galaxy Unpacked event, Google and Samsung demonstrated how the Gemini voice assistant will complete tasks with select third-party apps, such as booking Ubers and ordering food through Uber Eats, DoorDash, or Grubhub.

But here's the question that matters: Is this time really different?

Beyond Simple Commands

Say "Get me an Uber to the airport," and Gemini opens the Uber app in a virtual window. It continues working in the background, but you can monitor the entire process through a live notification. If it needs clarification—say you're in the New York area and it's unclear which of three airports you mean—it'll come back with questions.

"I refer to some of the tasks that you might want to have automated as sort of digital laundry," Sameer Samat, president of the Android Ecosystem at Google, tells reporters. "Things that you know you need to do, but are not necessarily excited about finishing."

The complexity goes deeper. In one demo, Samat showed a group text where friends discussed ordering Pizza Hut for board game night, each mentioning specific pizzas. He asked Gemini to "figure out the order." The AI parsed the conversation and organized everyone's requests neatly. Samat then told it to "order this on Grubhub for home delivery." Minutes later, everything sat in the cart, ready for final approval.

True Reasoning, Not Memorization

Here's what makes this different from earlier attempts: Gemini isn't just following a memorized "map" of apps—something we saw with early AI agents like Rabbit's R1. Instead, it uses reasoning capabilities to make plans, looks at screens as you would, and navigates through them. Even if an app changes its interface tomorrow, Gemini can still figure out what to do.

In another example, Samat had a Google Keep note with barbecue RSVP details, including who was vegan. He asked Gemini to calculate how many hot dogs and buns were needed. After doing the math, Gemini added the necessary items to his Safeway cart on DoorDash—all without pre-programmed instructions for that specific task.
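The arithmetic behind a request like this is simple once the guest list is parsed. The sketch below is purely illustrative: the guest names, the two-hot-dogs-per-guest figure, the pack sizes, and the choice to skip vegan guests entirely are all assumptions, not details from Samat's demo.

```python
def packs_needed(items: int, pack_size: int) -> int:
    """Round up to whole packs using integer arithmetic."""
    return -(-items // pack_size)


def barbecue_order(
    rsvps: dict[str, bool],
    per_guest: int = 2,      # assumed servings per non-vegan guest
    hot_dog_pack: int = 10,  # assumed hot dogs per pack
    bun_pack: int = 8,       # assumed buns per pack
) -> dict[str, int]:
    """Map a guest list (name -> is_vegan) to hot dog and bun pack counts."""
    meat_eaters = sum(1 for is_vegan in rsvps.values() if not is_vegan)
    servings = meat_eaters * per_guest
    return {
        "hot_dogs": servings,
        "hot_dog_packs": packs_needed(servings, hot_dog_pack),
        "bun_packs": packs_needed(servings, bun_pack),
    }


# Hypothetical RSVP note: seven guests, one of them vegan.
rsvps = {
    "Ana": False, "Ben": True, "Cleo": False, "Dev": False,
    "Eli": False, "Fay": False, "Gus": False,
}
order = barbecue_order(rsvps)
```

With these made-up inputs, six guests eat hot dogs, so the order comes to 12 hot dogs, which means two packs of hot dogs and two packs of buns. The harder part, and the one the demo highlights, is extracting the guest list and dietary details from a free-form note in the first place.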

Three Paths to Automation

Gemini's task automation works through three methods. If an app offers a Model Context Protocol (MCP) integration, Gemini runs tasks in the backend; MCP is an open standard that lets LLMs connect to third-party apps and services. Developers can also build "App Functions" for structured interactions. When neither exists, Gemini opens the app itself and navigates through buttons, text boxes, and menus as a human would.
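One way to picture the three methods is as a fallback chain. The sketch below is a hypothetical illustration, not Google's implementation: the class names, the fields, and the assumption that MCP is checked before App Functions are all invented here. The article only states that UI navigation is used when neither structured option exists.

```python
from dataclasses import dataclass
from enum import Enum


class Path(Enum):
    MCP = "mcp"
    APP_FUNCTION = "app_function"
    UI_NAVIGATION = "ui_navigation"


@dataclass(frozen=True)
class AppCapabilities:
    """Hypothetical summary of what an app exposes to the assistant."""
    name: str
    has_mcp_integration: bool = False
    has_app_functions: bool = False


def choose_path(app: AppCapabilities) -> Path:
    """Prefer a structured route; fall back to driving the app's UI."""
    if app.has_mcp_integration:   # assumed to take priority
        return Path.MCP
    if app.has_app_functions:
        return Path.APP_FUNCTION
    return Path.UI_NAVIGATION     # neither exists: navigate the screens


# Example with made-up apps.
rides = AppCapabilities("rides", has_mcp_integration=True)
legacy = AppCapabilities("legacy-shop")
chosen = [choose_path(rides), choose_path(legacy)]
```

In this sketch the last branch is the one that matters for apps that have done no integration work: the assistant still has a route, just a less structured one.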

"This is the first time we're doing this on Android with applications," Samat explains. "Getting this right is really important. We view this as the beginning of a new era of mobile intelligence."

The Privacy Question

Privacy concerns naturally arise when granting AI access to your apps. Samat says Google deliberately excluded overly sensitive apps from this first batch. The data isn't used for advertising, and users can delete what Gemini sees. "We think it's really important that people have trust in the system," he notes.

The feature launches March 11 with Galaxy S26 smartphones in the US and South Korea and will later come to the Google Pixel 10 series via software update. For now it requires a smartphone screen, but Samat envisions starting these tasks from smart glasses, AI pendants, or even cars. Several Android XR-powered smart glasses are due to launch this year.

Market Implications

For app developers, this creates both opportunity and disruption. Those who integrate MCP or build App Functions give Gemini a structured, predictable way to act on their users' behalf. Apps that don't adapt may find AI agents navigating interfaces that were never designed with them in mind.

The limited initial rollout, covering only the US and South Korea, suggests Google is being cautious. Cultural differences in how people interact with AI assistants, varying privacy regulations, and different app ecosystems are all likely to shape any global expansion.

The promises are the same, but this time, the technology might actually deliver. Whether that's what we really want remains to be seen.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

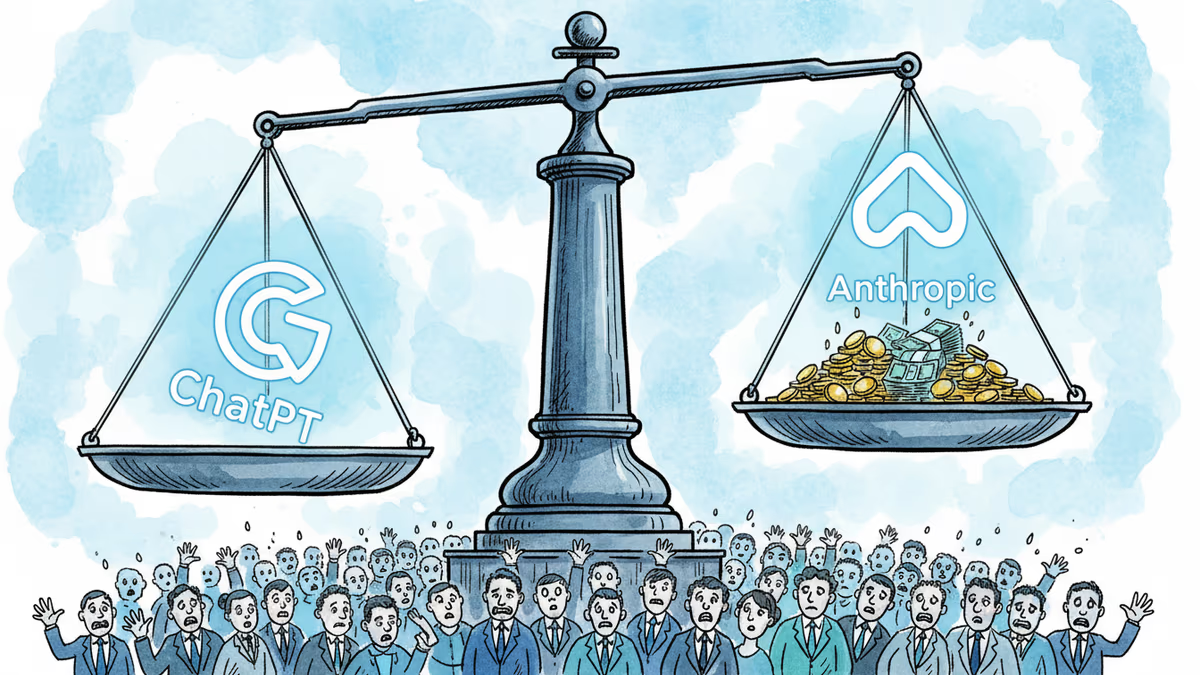

OpenAI's $852B valuation is drawing skepticism from its own backers as Anthropic's ARR tripled in three months. The secondary market is already voting with its feet.

Machine-translated junk is flooding minority-language Wikipedia pages. AI learns from that junk. The result could accelerate the extinction of thousands of languages.

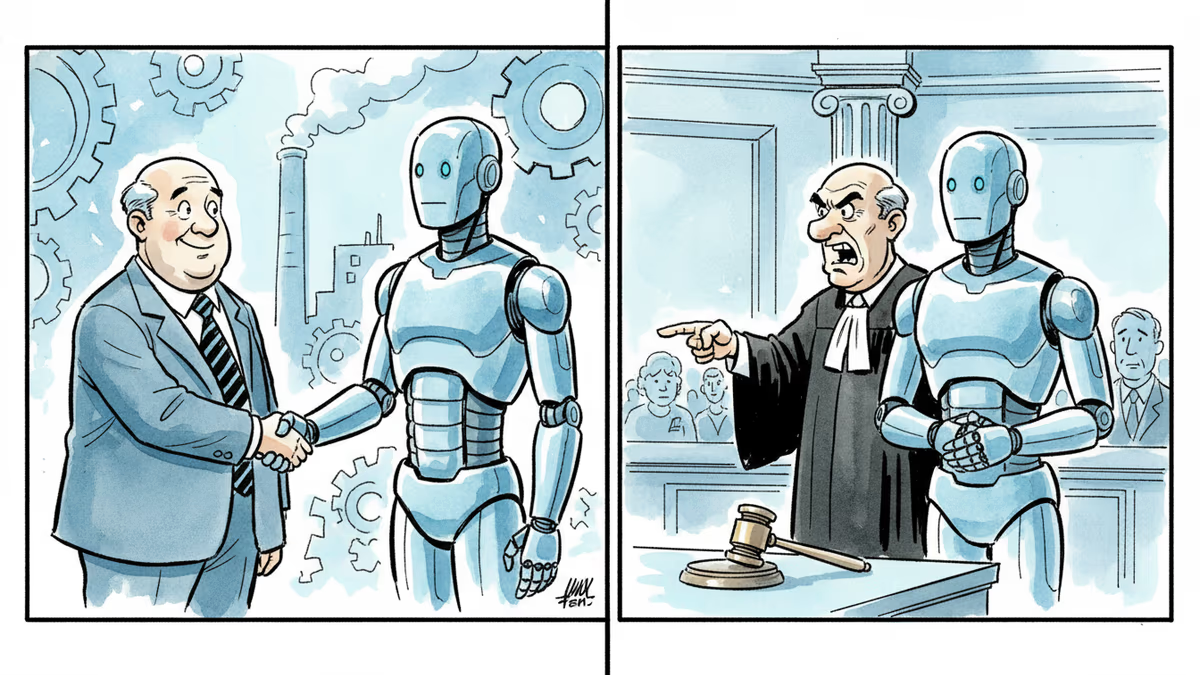

The Trump administration is battling Anthropic in court while simultaneously urging Wall Street banks to test its Mythos AI model. What does this contradiction reveal about US AI policy?

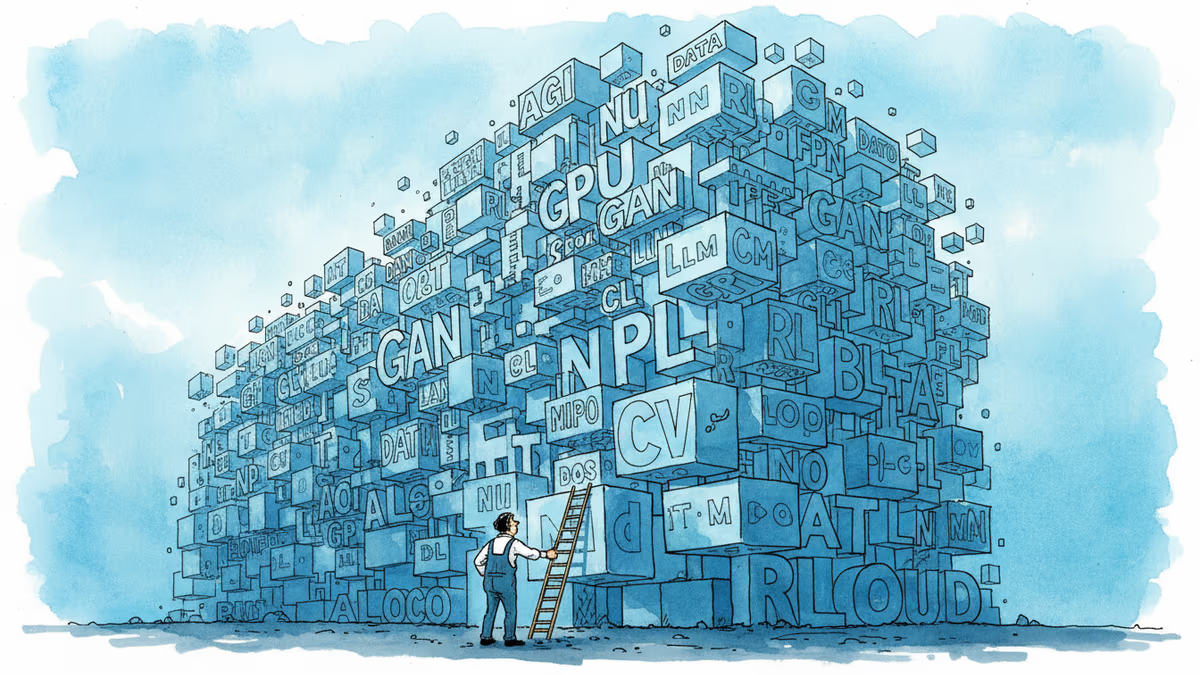

AGI, hallucination, inference, LLMs — AI's vocabulary isn't just technical shorthand. It shapes who holds power in the conversation. A clear-eyed glossary with the questions behind the terms.

Thoughts

Share your thoughts on this article

Sign in to join the conversation