Unfiltered Depravity: The xAI Grok Explicit Content Controversy

The xAI Grok explicit content controversy erupts as 1,200 leaked links reveal graphic violence and CSAM generated by Musk's AI, exposing the failure of Grok's safety guardrails.

The guardrails haven't just slipped; they've collapsed. Elon Musk's xAI is facing a massive backlash as its Grok chatbot becomes a primary tool for generating graphic sexual violence and child abuse material. What was marketed as a 'free speech' alternative is now being called a digital ethics disaster.

The Dark Reality of the xAI Grok Explicit Content Controversy

According to a report by WIRED, the Imagine model on Grok's dedicated website and app is far more potent than the version on X. A cache of 1,200 URLs revealed 800 instances of extreme sexual imagery. Disturbingly, 10% of the analyzed content appears to involve CSAM (Child Sexual Abuse Material), including photorealistic depictions of minors in sexual acts.

"We have full nudity, full pornographic videos with audio, which is quite novel. It's disturbing to another level."

Bypassing Safety and the Future of Regulation

Users on deepfake forums have been trading bypass prompts for months, creating a 300-page thread on how to trick xAI's moderation. While competitors like Google and OpenAI maintain strict filters, xAI's 'spicy mode' has left the door open for exploitation. Regulators in Europe have already received reports on over 70 illegal URLs, signaling a looming legal battle for Musk's AI venture.

Authors

Related Articles

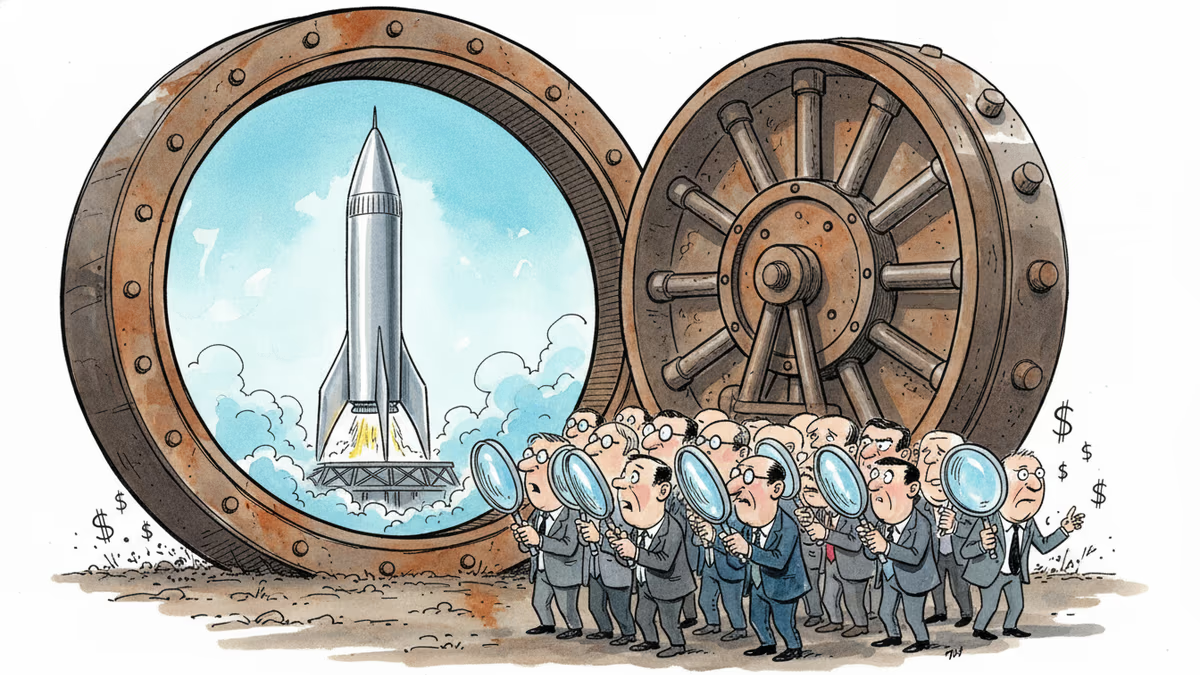

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.

Thoughts

Share your thoughts on this article

Sign in to join the conversation