The Claude Code Humanizer Plugin: Using Wikipedia Rules to Hide AI Writing

Siqi Chen's new Claude Code Humanizer plugin uses Wikipedia's AI detection rules to help Claude models sound more human and avoid detection.

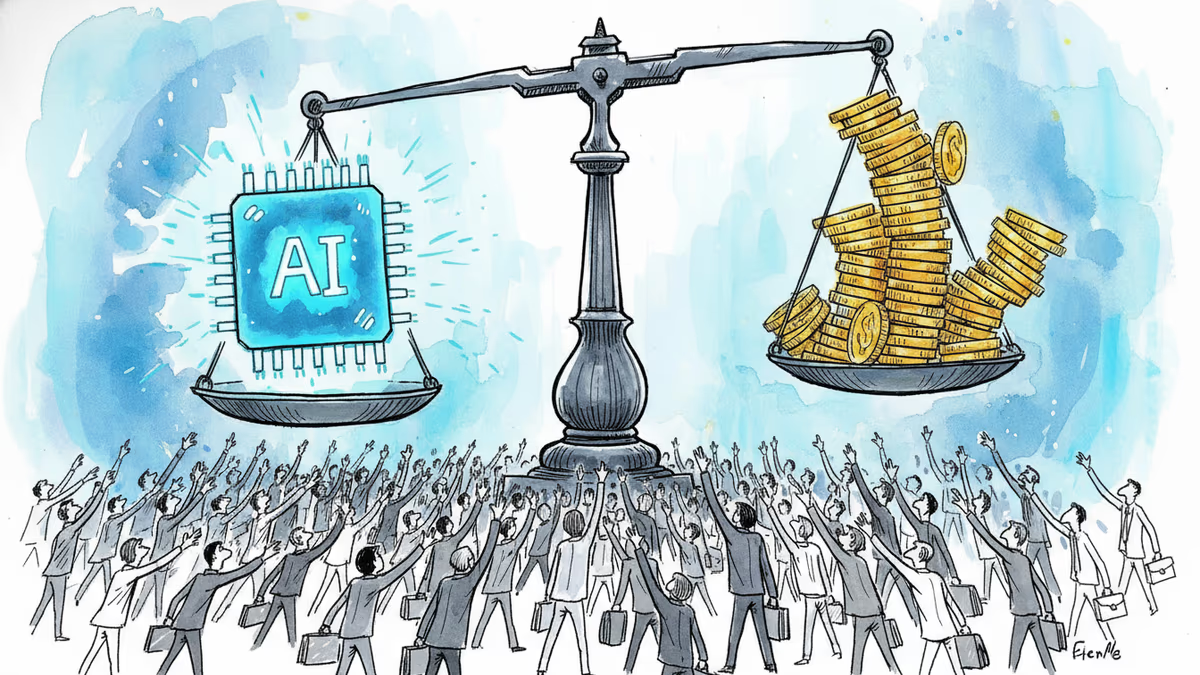

They've shaken hands, but one side is hiding a fist. In an ironic twist of tech development, the rules designed to catch AI are now being used to make it invisible. Tech entrepreneur Siqi Chen just released Humanizer, an open-source plugin for Anthropic's Claude Code assistant that instructs the AI to stop writing like a robot.

Claude Code Humanizer Plugin: The Paradox of Detection

The source material for this 'cloaking device' isn't a secret manual but a public guide from WikiProject AI Cleanup. Since late 2023, these Wikipedia editors have been hunting AI-generated content. In August 2025, they published a list of 24 language patterns that scream 'chatbot.' Chen's tool feeds these exact patterns back to Claude as a list of things to avoid, and it gained over 1,600 stars on GitHub within days.

Swapping Fluff for Opinions

Chatbots love to describe things as "nestled within" or "marking a pivotal moment." The Humanizer plugin tells the AI to ditch this inflated language for plain facts. More interestingly, it encourages the AI to have opinions. Instead of neutrally listing pros and cons, it might say, "I genuinely don't know how to feel about this." By mimicking human indecision, it subverts the common traits of predictable machine output.
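To make the idea concrete, here is a minimal sketch of how a list of flagged phrases could be checked against a draft. It is not Humanizer's actual implementation, which works by giving Claude written instructions. The function name `find_flagged_phrases` and the sample sentence are hypothetical, and only the two phrases quoted above come from the article, not the full list of 24 patterns.

```python
import re

# Only these two phrases are quoted in the article; the real list is longer.
FLAGGED_PHRASES = ["nestled within", "marking a pivotal moment"]


def find_flagged_phrases(text, phrases=FLAGGED_PHRASES):
    """Return (phrase, start_index) pairs for every flagged phrase in text."""
    hits = []
    for phrase in phrases:
        # re.escape keeps any punctuation in a phrase from acting as regex syntax.
        for match in re.finditer(re.escape(phrase), text, flags=re.IGNORECASE):
            hits.append((phrase, match.start()))
    # Sort by position so hits read in document order.
    return sorted(hits, key=lambda hit: hit[1])


# Hypothetical sample sentence written to trip both phrases.
sample = "The village, nestled within the hills, grew quickly, marking a pivotal moment."
for phrase, position in find_flagged_phrases(sample):
    print(f"{position}: {phrase}")
```

A checker like this only catches exact wording. The patterns Wikipedia's editors describe are broader habits of style, which is presumably why the plugin hands them to the model as guidance rather than running a string match.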

| Aspect | Humanizer Plugin Effect |
|---|---|
| Tone | Less precise, more casual/opinionated |
| Language | Removes Wikipedia-flagged patterns |
| Factuality | No improvement; stays the same |
| Cost | Requires paid Claude subscription |

The Risk of Casual Code

It's not all smooth sailing. While the plugin makes text sound more human, it doesn't necessarily make it better. For technical documentation, having an 'opinion' can be a drawback. Furthermore, limited testing suggests it might even harm the model's coding ability. The core issue remains: detection is a losing game. A 2025 study found that experts spot AI 90% of the time, but a 10% false positive rate means high-quality human writing still gets caught in the crossfire.
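A quick back-of-the-envelope calculation shows why that false positive rate matters. The sketch below treats the 90% figure as a detection rate, an interpretation the article does not spell out, and the pool of 200 AI-written and 800 human-written documents is entirely hypothetical. The function name `flag_breakdown` is likewise made up for illustration.

```python
def flag_breakdown(ai_docs, human_docs, detection_rate=0.90, false_positive_rate=0.10):
    """Estimate correct and mistaken flags for a pool of documents."""
    true_flags = ai_docs * detection_rate           # AI texts correctly flagged
    false_flags = human_docs * false_positive_rate  # human texts wrongly flagged
    total_flags = true_flags + false_flags
    # Fraction of all flags that land on human-written text.
    share_human = false_flags / total_flags if total_flags else 0.0
    return true_flags, false_flags, share_human


# Hypothetical pool: 200 AI-written and 800 human-written documents.
true_flags, false_flags, share_human = flag_breakdown(ai_docs=200, human_docs=800)
print(f"AI texts flagged: {true_flags:.0f}")
print(f"Human texts flagged: {false_flags:.0f}")
print(f"Share of flags that hit human writing: {share_human:.0%}")
```

In this made-up pool, 180 AI texts and 80 human texts get flagged, so roughly 31% of all flags fall on people. The share grows as human writing makes up more of the pool, which is the crossfire the study points to.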
