Open Source AI Assistant Hits 69K GitHub Stars in a Month—But at What Cost?

Austrian developer's Moltbot becomes one of 2026's fastest-growing AI projects, offering proactive personal assistance across messaging platforms—with serious security trade-offs.

69,000 stars in just one month. That's what Austrian developer Peter Steinberger's open-source AI assistant Moltbot (formerly Clawdbot) has achieved on GitHub, making it one of 2026's fastest-growing AI projects. While dozens of unofficial AI bot apps fade into obscurity, Moltbot stands out for one compelling reason: it doesn't wait for you to talk first.

The Assistant That Reaches Out

Unlike traditional AI tools that respond only when prompted, Moltbot takes initiative. It sends morning briefings, calendar reminders, and alerts based on triggers you set. Think of it as the difference between a reactive customer service rep and a proactive personal assistant who anticipates your needs.

What makes this particularly intriguing is platform integration. Moltbot works through messaging apps you already use—WhatsApp, Telegram, Slack, Discord, Google Chat, Signal, iMessage, and Microsoft Teams. No need to download yet another app or learn a new interface. Your AI assistant simply becomes another contact in your existing conversations.

The comparisons to Jarvis, Tony Stark's AI assistant in the Iron Man films, aren't accidental. Moltbot attempts to actively manage tasks across your digital life, moving beyond the question-and-answer format that defines most AI interactions today.

The Hidden Costs of Convenience

But here's where reality bites: Moltbot isn't truly free to operate. The software that coordinates the assistant runs locally, but using it effectively requires a paid Anthropic or OpenAI subscription for model access. You can run local AI models instead, but they're currently less capable than commercial options like Claude Opus 4.5, Anthropic's flagship large language model.

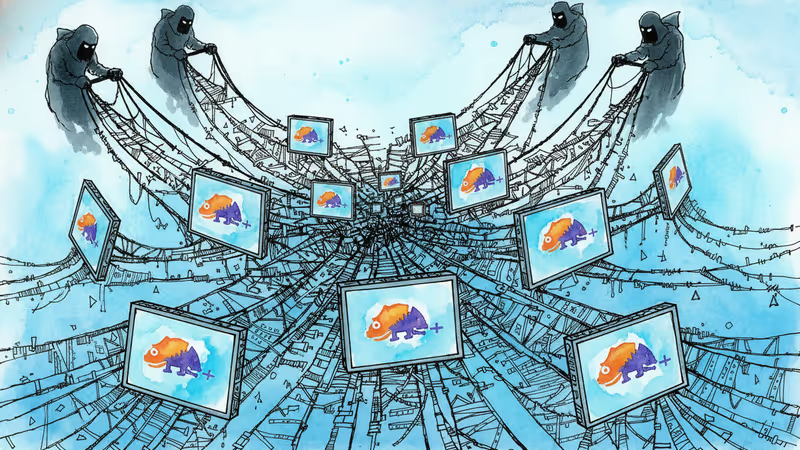

More concerning are the security implications. The developer openly acknowledges "serious security risks" in the current design. An AI assistant that needs access to your personal information, calendar, and messages to function effectively also concentrates all of that data in one place, so a single hack or data breach can expose far more than it would with a narrower tool.

This raises uncomfortable questions about the trade-offs we're willing to make. How much convenience is worth how much privacy risk? And who's responsible when things go wrong with hobbyist software handling sensitive personal data?

The Open Source Disruption

Yet Moltbot's rapid rise signals something significant: the emergence of viable open-source alternatives to Big Tech's AI assistants. Unlike with Google Assistant, Siri, or Alexa, users can modify the code, add features, and inspect exactly how their data is processed.

This democratization of AI assistant technology could reshape the market. Smaller companies can build customized solutions without starting from scratch. Privacy-conscious users can audit the code themselves. And developers worldwide can contribute improvements rather than waiting for corporate roadmaps.

The timing isn't coincidental either. As regulatory scrutiny of Big Tech intensifies and privacy concerns grow, open-source alternatives become more attractive. Moltbot offers something rare in today's tech landscape: transparency and user control.

The Security-Convenience Paradox

But transparency doesn't automatically mean security. Open-source code can be audited by anyone—including malicious actors looking for vulnerabilities. The very features that make Moltbot useful—cross-platform integration, proactive communication, access to personal data—also make it a potentially attractive target.

This creates a fascinating paradox. The AI assistant that promises the most personal, integrated experience also requires the highest level of trust. And that trust is being placed in hobbyist software with acknowledged security risks.

For enterprise users, this presents additional challenges. Can companies allow employees to use tools that connect to corporate messaging systems but lack enterprise-grade security certifications? How do IT departments balance innovation with risk management?
